HPC clusters first emerged in universities and research centers that required extra compute power but had limited budgets. The development of open source Linux-based operating systems and management tools was a natural evolution.

However, because open source tools are created by a variety of different organizations, no one solution offers a complete all-in-one product. Organizations therefore often need to tap into multiple open source projects to obtain everything they need to manage a cluster environment. They then need to get everything working together and educate their users on how to use each component.

According to IDC, software remains the biggest roadblock for HPC users: parallel software is lacking, and many applications run into problems such as limited scalability. The number of cores per processor and per cluster continues to increase, and new programming paradigms are needed to increase efficiency. As clusters grow larger and more complex, sophisticated management tools are required. Setting up and monitoring a cluster can become very difficult, especially in heterogeneous environments that depend on multiple generations of technology and support a variety of applications and multiple user groups. The complexity increases further when organizations deploy multiple clusters, whether within the same datacenter or across a global organization, or move to a cloud-based model.

When addressing open source software for HPC

Despite the pervasiveness and benefits of open source software, it is not without pitfalls for organizations that lack the expertise needed to integrate, maintain and operate a stack of open source software. The associated costs can manifest in many ways, such as increased time spent on system administration, time spent troubleshooting problems without formal support channels, or the cost of in-house regression testing. Administrators may also experience reduced productivity due to cluster downtime, support issues and low utilization of resources.

Organizations often experience additional education expenses associated with maintaining an open source environment. They will likely also experience creeping operational costs as they find themselves not only in the research business, but in the software maintenance business.

Comparisons between open source and commercial software often assume the alternatives have equal merit

This can be true for database software or scripting languages, areas where open source software is more widely recognized. However, in more specialized areas, open source software may not exist at all, or it may provide less functionality and require more effort to install and integrate. Capabilities that emerge as critical include web management consoles and portals, software provisioning tools, reporting and analysis tools, and tools to facilitate application integration.

Additional factors often contribute to cost and risk when using open source software. These include a lack of reliable technical roadmaps and the burden of essential software maintenance, such as performing updates or applying security patches.

So how do you weigh the benefits of open source software?

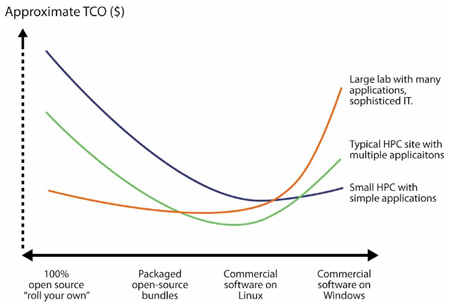

Total cost of ownership (TCO) varies with the unique requirements of each organization. Measuring your TCO is about finding the right balance. Clearly, open source software is here to stay, and organizations should consider many factors when deciding the degree to which to deploy commercial or open source software. These include the cost of software, the risk associated with deploying a variety of solutions, the complexity of the environment, and the expertise of the staff. Often these considerations are interrelated: selecting open source software to lower cost, for example, can create a higher element of risk and added complexity in the environment.
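To make the trade-off concrete, the considerations above can be combined into a rough TCO estimate. The following is only a minimal sketch: the cost categories, field names and rates are hypothetical, chosen for illustration, and any real evaluation would need figures from your own environment.

```python
from dataclasses import dataclass


@dataclass
class CostProfile:
    """Annual cost inputs for one deployment option (hypothetical categories)."""
    name: str
    license_per_year: float         # software licenses or support subscriptions
    admin_hours_per_year: float     # integration, maintenance, troubleshooting
    training_per_year: float        # educating administrators and users
    downtime_hours_per_year: float  # unplanned cluster downtime


def total_cost_of_ownership(profile, years, admin_hourly_rate, downtime_cost_per_hour):
    """Sum the annual cost categories and scale by the evaluation period.

    Assumes costs are constant from year to year, which is a simplification.
    """
    annual = (
        profile.license_per_year
        + profile.admin_hours_per_year * admin_hourly_rate
        + profile.training_per_year
        + profile.downtime_hours_per_year * downtime_cost_per_hour
    )
    return annual * years


# Placeholder inputs only; these are not measurements or vendor figures.
example = CostProfile(
    name="example option",
    license_per_year=1000.0,
    admin_hours_per_year=10.0,
    training_per_year=500.0,
    downtime_hours_per_year=2.0,
)
print(total_cost_of_ownership(example, years=3,
                              admin_hourly_rate=50.0,
                              downtime_cost_per_hour=100.0))
```

Running the same function over several profiles (for example a purely open source stack, a purely commercial one, and a mix) gives a simple way to see how lower license cost can be offset by administration, training and downtime.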

The decision whether to deploy open source or commercial software for HPC is sometimes painted as an either/or choice. In practice, however, organizations have a range of options. The accompanying figure illustrates a range of alternatives between purely open and purely commercial solutions. The shape of the TCO curve will vary depending on the environment. For most organizations, being at one extreme or the other is likely to be expensive and to limit downstream flexibility.

Productivity is Key

This is particularly true when evaluating the total cost of ownership. If we focus purely on acquisition cost, it is easy to overlook other factors, such as whether measures are in place to track the throughput and utilization of workloads. Utilization is crucial to getting the most from your infrastructure investment. Improving utilization minimizes additional resource acquisition costs and helps keep wait times for resources low so that results are achieved in less time. Another productivity consideration is the time it takes to install a complete cluster environment, have it ready for full productive use, and educate users on how to use the cluster so they can run their simulations and analyses productively.
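As an illustration of what tracking utilization and wait times can look like, here is a minimal sketch that computes both from a list of job records. The `Job` fields and the assumption that every job falls entirely inside the measurement window are hypothetical simplifications. Real workload managers expose this data in their own accounting formats.

```python
from dataclasses import dataclass


@dataclass
class Job:
    """One finished job; times are hours since the start of the window (hypothetical schema)."""
    cores: int
    submit_hour: float
    start_hour: float
    end_hour: float


def utilization(jobs, total_cores, window_hours):
    """Fraction of available core-hours actually consumed by jobs."""
    used_core_hours = sum(j.cores * (j.end_hour - j.start_hour) for j in jobs)
    return used_core_hours / (total_cores * window_hours)


def mean_wait_hours(jobs):
    """Average time jobs spent queued before starting."""
    if not jobs:
        return 0.0
    return sum(j.start_hour - j.submit_hour for j in jobs) / len(jobs)


# Placeholder records for a 16-core cluster observed over 4 hours.
jobs = [
    Job(cores=4, submit_hour=0.0, start_hour=1.0, end_hour=3.0),
    Job(cores=8, submit_hour=0.0, start_hour=0.0, end_hour=2.0),
]
print(utilization(jobs, total_cores=16, window_hours=4.0))
print(mean_wait_hours(jobs))
```

Tracked over time, the two numbers together show whether idle capacity or long queues point to a scheduling problem rather than a need for more hardware.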

Future proofing is very important

As cluster environments evolve, we need to ensure that they are extensible enough to support future requirements. As user requirements and applications evolve, features such as system monitoring and alerting tools, workload management systems, support for an increasing number of specialized workload types, and ease-of-use features including user-centric web portals become increasingly important.

For more information

TCO estimates will vary based on many factors, including the nature of the installation, in-house capability, types of applications and the cost of downtime. As you choose between open source and commercial alternatives, there are many different costs related to administration and productivity to weigh.

IBM Platform Computing has developed a whitepaper and recorded Webcast that guide you through evaluating the true cost of deploying and managing an HPC environment. If you are interested in receiving a TCO evaluation of your HPC environment, please contact IBM.