If ideas are what will help students survive outside the classroom, creative use of technologies like cloud computing will help them change the world.

We at universities are not only engaged in research but also bear a great responsibility for shaping the next generations or, even better, for providing them with the tools to shape their own futures.

The Master Thesis project is an important stage in which students tackle a specific problem and do so using all available technologies and methodologies learned during their studies.

One of the best features of cloud computing is its accessibility. It opens up a world of research possibilities and fosters a fast learning process, allowing students to develop, in a reasonable time, projects like those outlined one year ago and the ones presented in this article.

This time, the effects of the financial crisis turned out to be the center of gravity of this year’s Master Thesis projects, which are always proposed by the students themselves.

CygnusCloud (2012-2013, ongoing)

The three members of the CygnusCloud team (named in honor of the swan on the Complutense University coat of arms) observed that many computational resources in the computer labs spread across the UCM campus were underutilized. At the same time, the computers in our faculty’s labs are often insufficient to meet demand.

CygnusCloud Team: Adrian Fernandez, Samuel Guayerbas and Luis Barrios at one of the UCM computer labs.

Turning each campus PC into a Computer Science lab computer would be one way to increase overall computing power, but in reality this isn’t a workable solution given the multitude of software requirements and subsequent administrative overhead this would create.

This project therefore aims to provide virtual lab machines that can be accessed from any available campus PC, with minimal hardware and software requirements on the client side.

An on-demand, centralized distribution of these services like the one proposed by CygnusCloud reduces the effects of budget cuts in education, as students could use cheaper computers with lower energy consumption. The proposed solution supports academic progress by optimizing the use of non-specialized computer labs, and it reduces costs because it relies entirely on open-source software.

Diagram showing CygnusCloud functionalities.

This academic year, our university reached an agreement with Google to outsource its e-mail services. In addition, all campus members are provided with a Google Drive account, which CygnusCloud will exploit because it makes users’ data available from anywhere.

Moreover, the CygnusCloud team is one of the 85 teams participating in the Spanish Open-Source University Contest.


SmartCloud (2012-2013, ongoing)

This project is focused on getting the most value out of existing infrastructure, as well as providing a service to UCM researchers or any member of an academic environment.

SmartCloud Team: César Cayo, Javier Bachrachas and Ailyn Baltá at the Monument to the Spanish Constitution in Madrid.

The main idea is to host virtual machines on LAN computers that are not currently in use. These computers don’t need to be in a computer laboratory: machines used by the administrative staff also qualify for the SmartCloud resource pool.

These machines could host several guest types, serving either as processing nodes in a computing cluster or as standalone machines for interactive access. One approach is to rely on IPython to provide researchers with a powerful, Matlab-style web notebook linked to their Google Drive accounts, so that they can share their data easily.

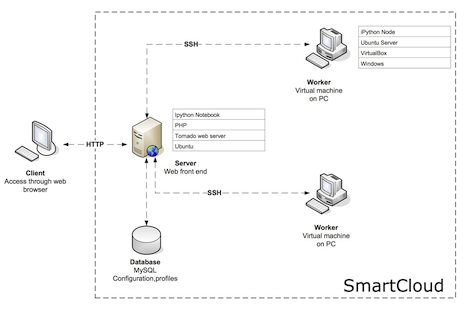

Diagram showing SmartCloud functionalities.

All technologies used in this project are open source, and the outcome will represent a direct saving on licenses and hardware for the adopting institution.

Both the CygnusCloud and SmartCloud Master Thesis projects are co-advised by my colleague Dr. Jose Antonio Martin H., a Python expert whose research interests are mainly soft computing and computational intelligence. Indeed, this collaboration has increased the interdisciplinary nature and the quality of the solutions provided.

HORADRIM (2012-2013, ongoing)

The team behind this project has only one member, who was my student in our Master in Bioinformatics and Computational Biology, which was rated the best in its area in Spain last year.

This student currently works at Sistemas Genómicos, an SME offering genetic analysis and bioinformatics services. He noticed that the company’s computing environment was limited given the high demand from the applications it runs. However, this demand is not continuous, and in the current economic situation, scaling up by purchasing new equipment was not an option.

HORADRIM: Guillermo Marco at the Sistemas Genómicos datacenter.

Since cloud computing allows, among other things, the provisioning of resources on demand (you pay only for what you use), he saw a great opportunity when his employer signed a partnership with T-Systems.

Diagram showing HORADRIM functionalities.

The first objective of the project is to implement a computing prototype that will expand the local cluster infrastructure into the cloud. The second objective is to calculate both economic and computational costs of certain bioinformatic applications in the cloud, in order to maximize the efficiency of the chosen cloud configuration.
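To make the second objective more concrete, here is a minimal Python sketch of one way to frame the trade-off between economic and computational cost. It is not HORADRIM’s actual model: the `CloudConfig` type, the functions `cloud_cost` and `cheapest_within_deadline`, the instance sizes and the prices are all hypothetical, and the sketch assumes that a job scales perfectly across cores and that the provider bills each instance per started hour.

```python
import math
from dataclasses import dataclass


@dataclass
class CloudConfig:
    """A hypothetical instance type: cores per VM and price per VM-hour."""
    name: str
    cores_per_instance: int
    price_per_instance_hour: float


def cloud_cost(cpu_hours: float, n_instances: int, cfg: CloudConfig) -> tuple[float, float]:
    """Return (wall-clock hours, cost) for a job needing `cpu_hours` of CPU time.

    Assumes the job scales perfectly across all cores and that each instance
    is billed per started hour (both are simplifying assumptions).
    """
    total_cores = n_instances * cfg.cores_per_instance
    wall_hours = cpu_hours / total_cores
    billed_hours = math.ceil(wall_hours)  # partial hours are rounded up
    cost = n_instances * billed_hours * cfg.price_per_instance_hour
    return wall_hours, cost


def cheapest_within_deadline(cpu_hours, deadline_hours, configs, max_instances=32):
    """Try every config and instance count; return the cheapest option that
    meets the deadline as (cost, config name, instances, wall hours), or None."""
    best = None
    for cfg in configs:
        for n in range(1, max_instances + 1):
            wall, cost = cloud_cost(cpu_hours, n, cfg)
            # Strict '<' keeps the first option found at the lowest cost.
            if wall <= deadline_hours and (best is None or cost < best[0]):
                best = (cost, cfg.name, n, wall)
    return best


if __name__ == "__main__":
    # Made-up instance types and prices, for illustration only.
    configs = [CloudConfig("small", 4, 0.5), CloudConfig("large", 16, 1.8)]
    print(cheapest_within_deadline(cpu_hours=100, deadline_hours=10, configs=configs))
```

With these made-up numbers, a 100 CPU-hour job with a 10-hour deadline is cheapest on five small instances (cost 12.5) rather than one large instance (cost 12.6), because the large instance finishes in 6.25 hours but is billed for 7. A real study would replace the perfect-scaling assumption with measured run times of each bioinformatics application.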

Our best asset

Students are our best asset right now. The more we invest in them, the more we get in return. The next generation is aware of the harsh times we are currently living through, and they are willing “to lift a cloud.” We only have to give them an opportunity!

The three projects I have described are very representative because they address three different areas affected by the financial crisis (teaching, research and SMEs). In each case, a feasible solution exists thanks to cloud computing and open-source tools. If students can produce these ideas in just one academic year, imagine what they could do over their academic careers and beyond!

If you are curious about past student projects, feel free to visit http://dsa-research.org/jlvazquez/students/.

About the Author

Dr. Jose Luis Vazquez-Poletti is Assistant Professor in Computer Architecture at Complutense University of Madrid (UCM, Spain), and a Cloud Computing Researcher at the Distributed Systems Architecture Research Group. He is (and has been) directly involved in EU funded projects, such as EGEE (grid computing) and 4CaaSt (PaaS Cloud), as well as many Spanish national initiatives.

From 2005 to 2009, his research focused on porting applications onto grid computing infrastructures, an activity that let him be “where the real action was.” These applications came from a wide range of areas, from fusion physics to bioinformatics. During this period he acquired the skills needed to profile applications and make them benefit from distributed computing infrastructures. Additionally, he shared these skills in many training events organized within the EGEE Project and similar initiatives.

Since 2010, his research interests have centered on different aspects of cloud computing, always with real-life applications in mind, especially those in the high performance computing domain.

Website: http://dsa-research.org/jlvazquez/

Linkedin: http://www.linkedin.com/in/jlvazquezpoletti/