[Editor’s Note: This article was modified to address a comment from Oak Ridge National Laboratory. It no longer says that the Titan supercomputer “failed” its acceptance test. The comment from ORNL is at the end of the article.]

According to recent news reports, Titan, the Cray XK7 located at the Department of Energy’s Oak Ridge National Laboratory and currently sitting at the top of the Top500 List, “isn’t working like it should”. This has puzzled many folks in the HPC community. How could Titan win the Top500 race last November, but be reported in February to have “bugs” that have “prevented users from getting access to the full Titan so far”? Some details are beginning to emerge, and the folks at Oak Ridge do expect Titan to pass its acceptance testing after Cray finishes repairing it. However, this situation does serve to raise some interesting questions about the Top500 List – and, in particular, about some pieces of the Top500 puzzle that are requested by the List keepers but are absent from the List. Let’s take a closer look.

Top500 Project

The Top500 Project is very clear about its objectives and the methodology it uses to accomplish them:

The main objective of the TOP500 list is to provide a ranked list of general purpose systems that are in common use for high end applications.…

As a yardstick of performance we are using the `best’ performance as measured by the LINPACK Benchmark. LINPACK was chosen because it is widely used and performance numbers are available for almost all relevant systems.…

The benchmark used in the LINPACK Benchmark is to solve a dense system of linear equations. For the TOP500, we used that version of the benchmark that allows the user to scale the size of the problem and to optimize the software in order to achieve the best performance for a given machine.…

Since the problem is very regular, the performance achieved is quite high, and the performance numbers give a good correction of peak performance.

…

By measuring the actual performance for different problem sizes n, a user can get not only the maximal achieved performance Rmax for the problem size Nmax but also the problem size N1/2 where half of the performance Rmax is achieved. These numbers together with the theoretical peak performance Rpeak are the numbers given in the TOP500.

They’ve been collecting data for more than 20 years and, through the Top500 Lists, they provide a valuable information resource to the HPC community.

Some relevant data for the top ten computers on the November 2012 Top500 List are presented in Table 1 below.

Table 1 – Selected Data from the November 2012 Top500 List

Missing Puzzle Pieces

Acceptance Testing

The conditions for the Top500 competition are spelled out in its Call for Participation. Among them are these statements (the emphases are ours):

The authors of the Top500 reserve the right to independently verify submitted LINPACK results, and exclude systems from the list which are not valid or not general purpose in nature. By general purpose system we mean that the computer system must be able to be used to solve a range of scientific problems. Any system designed specifically to solve the LINPACK benchmark problem or have as its major purpose the goal of a high Top500 ranking will be disqualified.

…

The systems in the Top500 list are expected to be persistent and available for use for an extended period of time. Any system assembled to run a LINPACK benchmark only, and set up specifically to gain an entry in the Top500 will be excluded from the list. The TOP500 authors will reserve the right to deny inclusion in the list if it is suspected that the system violates these conditions.

If a general purpose computing system hasn’t successfully completed its acceptance testing, the rules of the Top500 competition could be interpreted as precluding it from the competition. So, it’s probably worth waiting until after acceptance to compete. Otherwise, the case that a computer system is “general purpose” and “persistent and available for use for an extended period of time” would appear to be weak.

Unreported Data

Recall that, as cited above, the objectives of the Top500 Project include reporting not only Rmax but also Nmax, the problem size where Rmax is achieved, and Nhalf, where half of the performance Rmax is achieved. These numbers, together with the theoretical peak performance Rpeak, are to be reported in the TOP500 List.
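To make these definitions concrete, here is a minimal Python sketch of how Rmax, Nmax and Nhalf might be read off a set of LINPACK runs at different problem sizes. The function name `summarize_runs`, the sample measurements, and the use of linear interpolation to estimate Nhalf are all assumptions made for illustration; they are not taken from the Top500 methodology or from any system on the List.

```python
def summarize_runs(runs):
    """Return (rmax, nmax, nhalf) from (n, gflops) measurements.

    rmax  - best performance observed
    nmax  - problem size at which rmax was observed
    nhalf - estimated problem size where performance first reaches rmax / 2,
            found by linear interpolation (an assumption of this sketch);
            None if the measurements do not bracket rmax / 2.
    """
    runs = sorted(runs)                      # order by problem size n
    nmax, rmax = max(runs, key=lambda r: r[1])
    half = rmax / 2.0
    nhalf = None
    # Look for two neighbouring runs that bracket half of rmax.
    for (n0, r0), (n1, r1) in zip(runs, runs[1:]):
        if r0 < half <= r1:
            nhalf = n0 + (half - r0) * (n1 - n0) / (r1 - r0)
            break
    return rmax, nmax, nhalf


# Hypothetical measurements: (problem size n, performance in Gflop/s).
sample_runs = [(1000, 20.0), (2000, 40.0), (4000, 70.0),
               (8000, 90.0), (16000, 100.0)]
rmax, nmax, nhalf = summarize_runs(sample_runs)
print(rmax, nmax, round(nhalf))
```

The point of the sketch is simply that Nmax and Nhalf fall out of the same series of runs that produces Rmax, so a site that can report Rmax can usually report the other two as well.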

From Table 1, we see that all top ten systems have reported their Rmax. Since without this datum there is no basis for being on the List, this is not a surprise. What is surprising, however, is that four of the top ten systems do not show an entry for Nmax and nine of the top ten have no entry for Nhalf. Note that among those reporting neither value is the number one system: Titan.

This raises a couple of obvious questions:

If the missing data were not reported, why were those systems included in the Top500 List?

If the missing data were reported, why are they not disclosed in the Top500 List?

If the answers have something to do with “confidentiality”, we note that all of the top ten systems appear to have been acquired with public money – and complete reporting and disclosure are clearly in the public interest.

Furthermore, incomplete reporting and/or disclosure serve to limit the utility of the Top500 List and erode public confidence in it. Given the sustained value that the List has provided over the past couple of decades, this would be a shame.

Time to Completion

Computers are for solving problems – not just running fast. Even in automobile racing it’s not just about maximum speed – it’s about crossing the finish line first. So, wouldn’t it be a good idea to add a couple of data points to the Top500 List:

Tmax – the time required to complete the Linpack Rmax run

Thalf – the time required to complete the Linpack run at problem size Nhalf

In fact, we suspect that some folks in the HPC community would be more interested in these numbers than in the maximum speed ones.

We strongly suspect that Tmax and Thalf data are available for the machines on the current Top500 List. Some number of people in our HPC community have these numbers (you know who you are). So, how about providing them – and also filling in the blanks in the Nmax and Nhalf columns?

To seed the process of supplementing the List, we’ve provided Table 2 below. In it we’ve included some anecdotal and unverified – but presumed roughly accurate – data for a few of the top ten systems. The times listed are given in hours. If you can improve on these rough estimates or fill in any of the other blanks, please send us the data.

Table 2 – Supplementary Data for the November 2012 Top500 List

Going Forward

The next Top500 List is scheduled to be released in June at the International Supercomputing Conference in Leipzig, Germany. The submission deadline is May 18th. The next release of the Top500 List could be made even more valuable to the HPC community by ensuring that all competing systems have passed their acceptance tests, that all data traditionally disclosed are complete, and perhaps by adding the Tmax and Thalf data.

Postscript

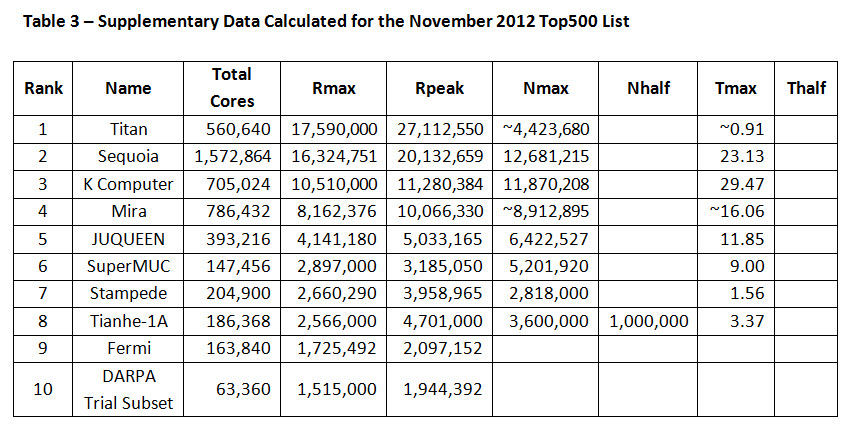

As noted in comments below, the times to completion, while not explicitly included in the published lists, may be calculated from Rmax and Nmax as follows:

Tmax = 2.0/3.0 * Nmax^3 / (Rmax * 10^9)

With Rmax expressed in Gflop/s, as the factor of 10^9 implies, this yields Tmax in seconds. Table 3 below shows the results of this calculation, with Tmax converted to hours. Note that, since some of the Nmax data are approximate, so are the corresponding Tmax calculations. As mentioned above, you are invited to fill in the blanks and correct any errors you may find in this Table.
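For readers who want to run the numbers themselves, the formula above can be sketched in a few lines of Python. This is a minimal sketch, assuming Rmax is supplied in Gflop/s and keeping only the leading 2/3 * Nmax^3 term of the operation count, exactly as the formula does. The function names and the input values are hypothetical and do not correspond to any system on the List.

```python
def tmax_seconds(nmax, rmax_gflops):
    """Estimated LINPACK run time in seconds.

    nmax        - problem size (matrix dimension) of the Rmax run
    rmax_gflops - achieved performance in Gflop/s (assumed unit)
    """
    flops = 2.0 / 3.0 * nmax ** 3            # leading-order operation count
    return flops / (rmax_gflops * 1e9)       # Gflop/s -> flop/s


def tmax_hours(nmax, rmax_gflops):
    """Same estimate, converted from seconds to hours."""
    return tmax_seconds(nmax, rmax_gflops) / 3600.0


# Hypothetical system: Nmax = 3,000,000 and Rmax = 1,000,000 Gflop/s (1 Pflop/s).
print(f"{tmax_hours(3_000_000, 1_000_000):.2f} hours")
```

Because the estimate grows with the cube of Nmax, even a modest error in an approximate Nmax value produces a noticeably larger error in the computed Tmax.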

Table 3 – Supplementary Data Calculated for the November 2012 Top500 List

Comment from ORNL:

We are writing to address a factual error in Gary Johnson’s February 27th article in HPCwire “Top500: The Missing Puzzle Pieces.” In his article, Mr. Johnson states that “Titan, the Cray XK7 sitting at the top of the current TOP500 List, recently failed its acceptance test…” This statement is incorrect. Titan has not yet completed the full suite of acceptance tests but has successfully passed both the functionality and performance phases of acceptance testing. Moreover, Titan is within 1% of passing its stability test, the last component of the acceptance test suite. The original project schedule called for fully completing acceptance testing by June of 2013, a schedule we expect to meet. And, as we proceed through this complex testing procedure, users are making productive use of the system.

Thank you for the opportunity to correct the record.

James J. Hack

National Center for Computational Sciences, Director

Arthur S. Bland

Oak Ridge Leadership Computing Facility, Project Director
