The federal R&D budget figures for 2014 have been released, and, as many suspected following last year's pushback on the exascale timeline, there was no room left in the government wallet for one quintillion FLOPS.

As John Holdren, Director of the Office of Science and Technology Policy, briefly noted in response to questions about the future of the program, "the budget does not set goals for exascale."

He did his best to soften the news by pointing to a 6% increase for the Department of Energy's Advanced Scientific Computing Research program, adding as a sweetener that the Networking and IT R&D interagency program will also get a 4.2% increase.

Even in the post-sequester U.S., however, programs to boost computational capabilities haven't all been wiped away. For now, a great deal of funding is still flowing to the labs and vendors that have been tackling exascale-class problems since last summer's FastForward awards.

The lack of a push toward exascale represents a competitive loss for the U.S., which is under renewed pressure to keep up with China's ambitions on that front. But as we learned around last fall's SC conference, the once-aggressive timeline has been switched from fast forward to slow motion. The new, perhaps more realistic target is around 2020, as more pressing needs, including clean energy, climate change research and advances in human health, keep the nation grounded. Then again, supercomputing is critical to all of the above. But does it have to be at exascale to be useful?

As NSF chief Cora Marrett said of her agency's upcoming computer science investments, they will continue to "draw on decades of funding leading-edge computer science research…supporting a comprehensive portfolio of advanced infrastructure, programs and resources to facilitate research in computational and data-intensive science and engineering." So as not to leave out the supercomputing crowd, she briefly pointed to the recent massive investments in three major supercomputers, Yellowstone, Stampede and Blue Waters, as continuing areas of development.

All of this assumes, of course, that the economy can find at least some steady footing. From the outside, it looks as though the decline in overall R&D funding has halted, but numbers aren't always what they seem.

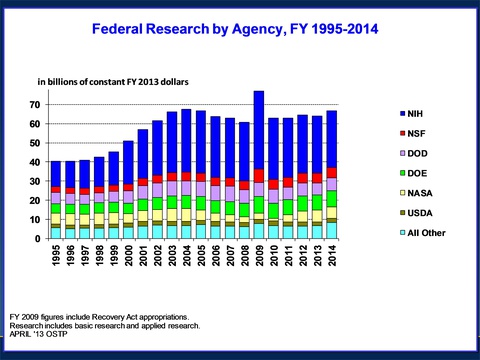

All told, the 2014 budget calls for $142.8 billion in federal R&D spending, an increase of $1.9 billion over what was spent in 2012, or a nominal gain of only about 1.3 percent. But before you get excited about a renewed emphasis on research and development, remember that these figures are not adjusted for inflation. Take inflation into account and you're left with a decline in spending in "real dollar" terms, albeit a slight one.

Holdren says that this modest decrease "is small, but represents a continuing commitment to avoid eating our seed corn and protecting what we can of our R&D and STEM education budget."

Three agencies were identified by the Obama administration as crucial to the long-term economic, social and research goals of the nation: the National Science Foundation (NSF), the Department of Energy's Office of Science, and the National Institute of Standards and Technology (NIST).

With those three in the mix, it almost sounds like the perfect storm for major supercomputing investment. Instead, the key priorities are health, the environment and various other pressing areas, including space exploration. Naturally, these areas can't be pushed forward without the aid of large-scale systems, but for now, "data-intensive" is the term being bandied about in place of exascale.

The National Institutes of Health (NIH) is another agency that will see serious funding, some of which will draw computational research into the fold. With the rise of electronic health records, genetic data goals and a slew of other research projects, including the recently announced BRAIN Initiative, the agency is starting to see the appeal of the big data buzz. As NIH Director Francis Collins noted, the agency will tap $41 million of its funding to launch the "Big Data to Knowledge" program, which will support the sharing of large, complex biomedical datasets, the development of new analytical methods and software, centers of excellence to support the research, and new resources dedicated to computational biology.

Holdren stressed, "Throughout the budget, every new initiative is fully paid for, thus adding nothing to the deficit." Further, he added, this goes a long way toward achieving another $1.8 trillion in deficit reduction "in a balanced way."

He says that, when combined with the deficit reduction already achieved, the president's budget puts the nation on track to exceed the goal of $4 trillion in deficit reduction while growing the economy and the middle class through various science, technology, engineering and math (STEM) programs designed to create one million STEM nerds over the next decade.

And the term "nerds" is being used lovingly; you all know that… *sigh* …Just trying to make you feel better, America.