Why is more computing power a strategic asset for CAE users? The answer is simple: HPC gives users insight into product performance faster than any other approach, and that insight is extremely valuable to the product design process. It’s all about getting better insight into product behavior, sooner. We can break that value into two benefits of HPC:

- The first is the ability to do bigger, more challenging simulations – solve the unsolvable – and get high-fidelity insight into how your design is going to work in the real world.

- The second is the ability to run more simulations, to evaluate multiple design ideas, and reach an optimal design that works across a range of operating conditions.

In a recent ANSYS survey, when almost 3,000 respondents were asked to name the biggest pressures on their design activities, 52% cited “reducing the time required to complete design cycles.” At the same time, 28% of respondents named “producing more reliable products that result in lower warranty-related costs” as a chief concern. It is clear that HPC is an enabling technology to address these challenges.

HPC has become a software development imperative. As processor speeds have levelled off due to thermal constraints, hardware speed improvements are now delivered through an increasing number of computing cores. For ANSYS software to effectively leverage today’s hardware, efficient execution on multiple cores is essential. Building and maintaining parallel processing efficiency is therefore a major ongoing focus of software development at ANSYS.

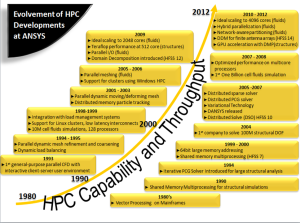

The image above shows our history of delivering HPC technology and reflects our sustained investment and achievement. Our HPC efforts, both internal and with HPC hardware partners, lead to technology and performance leadership. Just as important for you, these efforts protect the return on your overall HPC investment, not only today but also in the future.

As the image below shows, CFD scalability at R13.0 levelled off at around 1,500 cores. At R14, we achieved good scalability up to 3,000 cores, made possible in particular by hybrid parallelization and network-aware partitioning. And I am very pleased to share some pre-release results of R15.0 showing ground-breaking scale-out performance at 12,000 cores!

Is this scaling sufficient? No! We are constantly challenged to achieve next-generation scalability. Our top-tier customers tell us: “[We’re] doubling our core count [for engineering simulation] every year…”, and “We’re planning to run [your CFD] in parallel this year on 10,000 cores and next year on 23,000 cores…” There is clearly an increasing demand in our customer base to run larger models (100 million cells and above) on thousands of cores. However, the customers demanding these hero-HPC simulations are still a relatively small portion of the total.

So, is there another reason why we should strive for the “best” parallel scalability or parallel efficiency? Yes! The main reason is that it lets us demonstrate good scalability at the lowest possible number of computational cells per core. A few years ago, this metric was about 100,000 cells per core. At R15, the scalability milestone is 10,000 cells per core. So, what’s the real benefit of lowering this metric release by release?

The best way to explain this is by giving two examples.

- To perform a CFD simulation 10 times faster than today, a customer using software that scales at 70% efficiency would need to buy and maintain 43% more hardware than a customer using software that scales near-ideally.

- If CFD software can only scale down to about 100,000 cells per core, then a typical 2 million cell model can only run efficiently on 20 cores. Adding more cores does not help, because beyond that point there is no further speedup! However, if the CFD software can scale to about 10,000 cells per core (like ANSYS Fluent at R15.0), the same 2 million cell model can run on 200 cores, resulting in an almost 10-fold faster turnaround!
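The arithmetic behind both examples can be sketched in a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, parallel efficiency is assumed to mean speedup divided by the increase in core count, and the cells-per-core figure is treated as a hard lower limit for efficient scaling, which is a simplification.

```python
def hardware_multiple(target_speedup: float, efficiency: float) -> float:
    """Core count needed, as a multiple of today's, for a target speedup.

    Assumes efficiency = speedup / core multiple, so the required
    core multiple is target_speedup / efficiency.
    """
    return target_speedup / efficiency


def max_efficient_cores(total_cells: int, min_cells_per_core: int) -> int:
    """Largest core count that keeps at least min_cells_per_core per core."""
    return total_cells // min_cells_per_core


# Example 1: 10x faster at 70% efficiency versus ideal (100%) scaling.
ideal = hardware_multiple(10, 1.0)
actual = hardware_multiple(10, 0.7)
extra_percent = (actual / ideal - 1) * 100

# Example 2: a 2 million cell model at two cells-per-core limits.
cores_old = max_efficient_cores(2_000_000, 100_000)
cores_new = max_efficient_cores(2_000_000, 10_000)

print(f"Extra hardware at 70% efficiency: {extra_percent:.0f}%")
print(f"Usable cores: {cores_old} versus {cores_new}")
```

The ratio of the two core counts is the ideal upper bound on the turnaround gain; real scaling losses are why the text says "almost" 10-fold.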

Note that in the survey mentioned earlier, 34% of respondents reported being constrained in terms of size or detail on nearly every simulation model, and another 57% said the same for some of their models.

What’s the future of HPC in terms of scalability?

You don’t want to select a CAE application as your HPC solution based only on the past or present. Because HPC is so dynamic and the computing landscape changes so quickly, it’s critical that your CAE provider continues to focus on HPC so that you can take advantage of tomorrow’s technologies and expand the scope of what you can accomplish with simulation. ANSYS is doing just that, as the following examples show.

The first example is the ongoing optimization of our software. Here, we work closely with processor vendors like Intel and NVIDIA to understand and optimize the mapping of the simulation workload to the compute resources (for example, using new compiler options, setting processor affinities, and dynamically adjusting how the workload is balanced across multiple processors). ANSYS Mechanical customers can now interchangeably use CPU cores and multiple GPUs to accelerate most simulation workloads. In addition, GPU support for the (3D AMG) coupled pressure-based solver in ANSYS Fluent demonstrates our commitment to letting customers leverage new and evolving technology, such as GPUs, for faster simulation. Finally, we are excited to be supporting Intel’s Xeon Phi coprocessors in our upcoming 15.0 release of ANSYS Mechanical.

The second example speaks to solution scalability and the future of HPC: how we will need to keep enhancing our software in order to take our customers’ use of simulation to the next level. While delivering ground-breaking scale-out performance at 15,000 cores for large (100M+ cell) CFD models at ANSYS 15.0, our target is to achieve parallel performance at 50,000 cores and more, and to continue to show great scaling as core counts per processor keep increasing and the distinction between CPUs and GPUs begins to blur.

A third example is the extension of parallelism and HPC performance across all aspects of the simulation process, from meshing and setup to file handling, visualization, and automated optimization techniques. For example, in our upcoming release 15.0 we will enable good scalability of parallel meshing when generating tet/prism meshes. Although the performance is case dependent, 92% scalability has been observed on a 42-million-cell mesh when using 8 cores.
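To put the 92% figure in perspective, the short sketch below converts it into an implied speedup. It assumes that “scalability” here means parallel efficiency, that is, speedup relative to a single-core run divided by the number of cores; the text does not define the term, and the function name is hypothetical.

```python
def implied_speedup(efficiency: float, cores: int) -> float:
    """Speedup over one core implied by a parallel efficiency.

    Assumes efficiency = speedup / cores (an interpretation of the
    92% figure, not a definition taken from the text).
    """
    return efficiency * cores


speedup = implied_speedup(0.92, 8)
print(f"Implied speedup on 8 cores: {speedup:.2f}x")
```

Under that reading, meshing on 8 cores would run a little over 7 times faster than on a single core.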

A fourth example is the usability of our HPC solution. We are driving toward a unified environment, across solver components, for defining, submitting, and monitoring customers’ parallel workloads. In conjunction with our hardware partners, we are also exploring how our software can be more robust to hardware issues and how we can find and resolve those issues dynamically, in order to avoid interruptions or failed jobs. Finally, we are improving usability by simplifying cluster deployment. I am proud to mention our collaboration with IBM on pre-configured, ANSYS-optimized architectures, including support for remote 3D visualization and cluster/resource management.

I hope and trust that the above shows our commitment to delivering HPC performance and capability to take our customers to new heights of simulation fidelity, engineering insight and continuous innovation.

Register for the November 7 IBM-hosted webinar, Simplified HPC Clusters for ANSYS Users:

https://engage.vevent.com/index.jsp?eid=556&seid=61257