This week brought plenty of news from both the vendor and research sides, much of it revolving around the theme of large-scale simulations and their practical real-world value.

We kicked off the week with some fascinating news about a simulation that reaches back into the galactic past, to the universe as it looked 13.8 billion years ago. Also on the massive simulation front was some rather astonishing scaling work for engineering codes: a team based at the Barcelona Supercomputing Center pushed its homegrown multiphysics code to the 100,000-core mark on Blue Waters.

Continuing on the simulation theme, we touched on a great many examples of real-world applications at HPC centers across the globe this week, covering everything from ozone pollution threats and crash testing and safety to electromagnetic interference and brain-based networks.

Rounding out the simulation focus for this early May edition was our podcast interview with Jim Ahrens, Visualization Team Leader at Los Alamos National Laboratory. We discussed the challenges of analyzing and visualizing the output of large simulations in the context of what Ahrens calls “cost per insight,” and touched on some of the novel ways to keep that cost as low as possible, including in situ analysis and storage, among other approaches.

With the practical value of large simulations foremost in mind, this morning we kicked off an effort to bring a more focused set of community perspectives to HPCwire. From now through ISC, we will spend May sharing research concepts from the community, aiming to complement the narrow, topic-specific focus of the many journals devoted to particular areas of HPC. Further detail on the effort is available in this morning's announcement. The reception has already been overwhelming, especially from some of our European friends, and we look forward to the review process with our own editors and advisory members.

This Week’s Top News Items

Panasas engineer Marcelo Cataldo has been recognized with the Allen Newell Award for Research Excellence from the School of Computer Science at Carnegie Mellon. Cataldo’s research has focused on developing and evaluating an analytical framework to assess how compatible the structure of a software development organization is with the technical architecture of the product being developed. The framework also identifies technical and organizational dependencies that may be detrimental to product quality and productivity and assesses how they can be reduced or eliminated.

Catalyst, a first-of-a-kind supercomputer at Lawrence Livermore National Laboratory (LLNL), is officially available to industry collaborators to test big data technologies, architectures and applications. Developed by a partnership of Cray, Intel and Lawrence Livermore, this Cray CS300 high performance computing (HPC) cluster is available for collaborative projects with industry through Livermore’s High Performance Computing Innovation Center (HPCIC).

A resource for the National Nuclear Security Administration’s (NNSA) Advanced Simulation and Computing (ASC) program, the 150 teraflop/s (trillion floating-point operations per second) Catalyst cluster has 324 nodes and 7,776 cores, and employs the latest-generation 12-core Intel Xeon E5-2695v2 processors. Catalyst runs the NNSA-funded Tri-lab Open Source Software (TOSS), which provides a common user environment across NNSA Tri-lab clusters (Los Alamos, Sandia and Lawrence Livermore national labs).
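The published Catalyst figures hang together: 7,776 cores across 324 nodes works out to 24 cores, or two 12-core sockets, per node. The quick back-of-the-envelope sketch below checks this and estimates theoretical peak. It assumes a 2.4 GHz base clock and 8 double-precision floating-point operations per core per cycle (one 256-bit AVX add plus one 256-bit AVX multiply on Ivy Bridge); neither value appears in the announcement. Under those assumptions the peak lands at roughly 149 teraflop/s, in line with the quoted 150 teraflop/s.

```python
# Back-of-the-envelope check of the published Catalyst figures.
# NODES, TOTAL_CORES and CORES_PER_SOCKET come from the announcement.
# BASE_CLOCK_GHZ and DP_FLOPS_PER_CYCLE are ASSUMPTIONS based on
# Intel's published Xeon E5-2695 v2 (Ivy Bridge) characteristics.

NODES = 324
TOTAL_CORES = 7_776
CORES_PER_SOCKET = 12        # 12-core Xeon E5-2695 v2
BASE_CLOCK_GHZ = 2.4         # assumption: base clock, no turbo
DP_FLOPS_PER_CYCLE = 8       # assumption: 4-wide AVX add + 4-wide AVX multiply

# The core count should divide evenly across nodes.
assert TOTAL_CORES % NODES == 0
cores_per_node = TOTAL_CORES // NODES            # 24
sockets_per_node = cores_per_node // CORES_PER_SOCKET  # 2

# Theoretical peak = cores x clock (GHz) x FLOPs/cycle -> GFLOP/s,
# then divide by 1,000 to get TFLOP/s.
peak_tflops = TOTAL_CORES * BASE_CLOCK_GHZ * DP_FLOPS_PER_CYCLE / 1_000

print(f"{cores_per_node} cores per node, {sockets_per_node} sockets per node")
print(f"theoretical peak: about {peak_tflops:.1f} TFLOP/s")
```

Sustained performance on real workloads will be lower than this theoretical figure, which is simply the product of core count, clock rate and per-cycle throughput.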

Penguin Computing introduced its Icebreaker HPC family of scalable, high performance file-system solutions based on EMC VNX and EMC Isilon storage. Currently available with either Lustre or NFS file systems, the Icebreaker HPC line integrates EMC VNX and EMC Isilon platforms with Penguin’s standard rack infrastructure in a form factor that Penguin says allows other HPC solutions to be added easily.

The company also announced this week the immediate availability of MATLAB Distributed Computing Server on POD, its HPC cloud. MATLAB Distributed Computing Server provides access to multiple workers that can run computationally intensive MATLAB programs and Simulink models. MATLAB and Simulink users interact with it through the Parallel Computing Toolbox: they program parallel applications using the toolbox on their workstations, then push those programs to POD from their local MATLAB session. Results are returned to their MATLAB sessions once the parallel processing job has finished on POD.

Fujitsu has rolled out its Eternus DX200F all-flash array, which uses solid-state drives for data storage. Fujitsu says the array offers extremely low latency of 0.5 milliseconds (ms) or less for I/O-intensive applications, which the company expects to shorten batch processing times and deliver fast, stable performance. The new product will be available in Japan from May 8, with worldwide availability to follow.

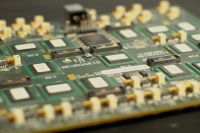

Pluribus Networks has announced a relationship with Supermicro under which the Pluribus Netvisor network operating system will power the switching blades in Supermicro’s next-generation MicroBlade microserver platform. Pluribus describes Netvisor as “the industry’s first and only bare-metal, distributed network hypervisor operating system,” one that enables the convergence of compute, network, storage and virtualization into a programmable, open-standards-based platform. The Supermicro MicroBlade is a 6U, 112-node, Intel Atom-based compute and network platform with integrated 10/40 GbE Ethernet switching based on the Intel FM5224. Through its fabric-cluster capability, Netvisor offers Layer 2+ features and high availability across multiple switch modules within the MicroBlade platform.

Also, before we close out the week, take a moment to get geared up for ISC ’14 in Leipzig with an interview in which the conference’s Nages Sieslack talks with Dr. Satoshi Matsuoka about the emerging role big data will play as the limits of extreme-scale computing are pushed. And if you haven’t already booked a room for the event, options anywhere near the conference site appear to be limited: a few of our sources reported being booked more than 10 miles away, so consider this a heads up.