If you were to come up with a list of transformative technologies to hit HPC in the last decade, cloud services and general-purpose GPUs would rank pretty high. While the idea of using virtual machines to run technical computing workloads was anathema to some, at least initially, the benefits were hard to argue with. Ease of use, scalability, elasticity, and pay-as-you-go pricing were all major draws, but there was still the matter of overhead, i.e., the virtualization penalty. In terms of sheer performance, bare metal had the advantage.

As cloud grew in popularity, so did GPGPU computing, that is, using graphics processing units for general-purpose computation to accelerate computing jobs. Perhaps the two technologies, GPUs and virtualization, could be combined to create a cloud environment that would satisfy the needs of HPC workloads. A group of computer scientists set out to explore this very question.

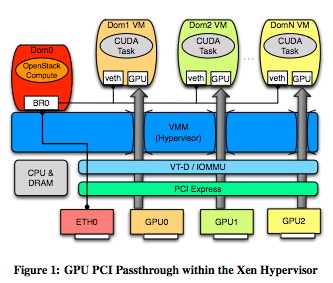

“We propose to bridge the gap between supercomputing and clouds by providing GPU-enabled virtual machines,” the team writes in a recently published paper. “Specifically, the Xen hypervisor is utilized to leverage specialized hardware-assisted I/O virtualization tools in order to provide advanced HPC-centric NVIDIA GPUs directly in guest VMs.”

It’s not the first time that GPU-enabled virtual machines have been tried. Amazon EC2 and a couple of other cloud vendors have a GPU offering. However, the approach is not without challenges, and there are different ideas about how best to implement a GPU-based cloud environment, which the paper addresses.

The team of scientists, from Indiana University and the Information Sciences Institute (ISI), a unit of the University of Southern California’s Viterbi School of Engineering, compared the performance of two Tesla GPUs in a variety of applications using the native and the virtualized modes. To carry out the experiments, the researchers used two different machines, one outfitted with Fermi GPUs and the other with newer Kepler chips. After running several benchmarks and assessing the results, the authors conclude that the GPU-backed virtual machines are viable for a range of scientific computing workflows. On average, the performance hit was 2.8 percent for Fermi GPUs and 4.9 percent for Kepler GPUs.

In their comparison of virtualized environments with bare metal ones, the team studied three dimensions: FLOPS, device bandwidth and PCI bus performance. Among the more notable results in the FLOPS testing portion, the team found that even for double-precision FLOPS, the Kepler GPUs achieved nearly double the performance of the Fermi GPUs. But more applicable to the research at hand, when it comes to raw FLOPS available to each GPU in both native and virtualized modes, the virtualization overhead was between 0 and 2.9 percent.
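For readers who want to see how an overhead figure of this kind is typically derived, here is a minimal sketch. It assumes overhead is defined as the share of native throughput lost under virtualization; the article does not spell out the paper's exact formula, and the function name and the throughput values below are hypothetical illustrations, not measurements from the study.

```python
def virtualization_overhead_pct(native: float, virtualized: float) -> float:
    """Percent of native throughput lost when running virtualized.

    Assumed definition: (native - virtualized) / native * 100.
    A result of 0.0 means the virtualized run matched bare metal.
    """
    if native <= 0:
        raise ValueError("native throughput must be positive")
    return (native - virtualized) / native * 100.0


# Hypothetical throughput figures in GFLOPS, chosen only for illustration.
native_gflops = 1000.0
virtualized_gflops = 971.0

overhead = virtualization_overhead_pct(native_gflops, virtualized_gflops)
print(f"overhead: {overhead:.1f}%")
```

The same calculation applies to any throughput-style metric, such as bandwidth in GB/s. For time-based metrics, where lower is better, the ratio would need to be inverted.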

When other applications were tested, the performance penalty ranged from 0 percent in some cases to over 30 percent. The FFT benchmarks resulted in the most overhead, while the matrix multiplication based benchmarks had an average overhead of 2.9 percent for the virtualized setups.

In terms of device speed, which was measured with both raw bandwidth tests and third-party benchmarks, virtualization had “minimal or no significant performance impact.”

The final dimension being tested, the PCI Express bus, had the highest potential for overhead, according to the research. “This is because the VT-d and IOMMU chip instruction sets interface directly with the PCI bus to provide operational and security related mechanisms for each PCI device, thereby ensuring proper function in a multi-guest environment,” the authors state. “As such, it is imperative to investigate any and all overhead at the PCI Express bus.”

In analyzing these results, the authors note that “as with all abstraction layers, some overhead is usually inevitable as a necessary trade-off to added feature sets and improved usability.”

While the same is true for GPU-equipped virtual machines, the research team contends that the overhead is minimal. They add that their method of direct PCI passthrough of NVIDIA GPUs using the Xen hypervisor can be cleanly implemented within many Infrastructure-as-a-Service environments. The next step for the team will be integrating this model with the OpenStack Nova IaaS framework with the aim of enabling researchers to create their own private clouds.