Greetings from Germany and the International Supercomputing Conference (ISC14) where, as happens each June, the latest edition of the twice-yearly list of the 500 fastest supercomputers is unveiled.

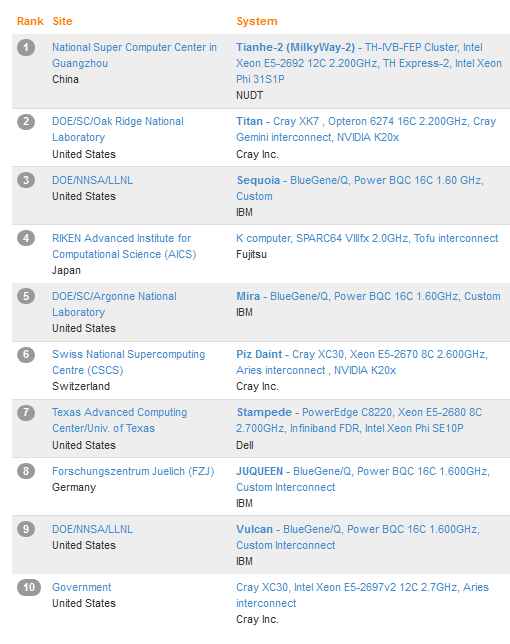

Usually, this happens with a great deal of fanfare and speculation over which machine will take the top position. However, this year, there is little surprise in the finding that the Chinese Tianhe-2 system, which blew all others out of the water when it was announced last year, held firmly onto its number one position. While you can view the specs of each of the machines in more detail at the TOP500 site, we wanted to use this time to gauge some of the overarching trends we’ve been observing in terms of performance curves over time, accelerator adoption, architecture choices and more. In short, after you browse this very familiar top ten, take a look at what’s really happening…

To review, the Tianhe-2 system is going to be a tough one to beat. It delivers 33.86 petaflops/s, compared to 17.59 petaflops/s for the number two system, the Cray "Titan" machine at Oak Ridge National Lab. Tianhe-2 has 16,000 nodes, each outfitted with two Ivy Bridge processors and three Xeon Phi coprocessors, for a total of over 3 million cores. As we noted earlier this year, China plans to continue building out this system in hopes of reaching exascale. The system is unique in its number of homegrown parts, including the TH Express-2 interconnect, operating system, tooling and front-end processors. While it may be a powerhouse, its energy efficiency lags behind that of the "smaller" Titan machine. Tianhe-2 draws 17.8 megawatts running Linpack, while the 261,632-core, NVIDIA K20-boosted Cray system at Oak Ridge draws 8.21 megawatts. That works out to roughly 1.9 gigaflops per watt for Tianhe-2 versus roughly 2.1 for Titan.

The IBM Sequoia system at Lawrence Livermore is holding steady at number three. At 17.17 petaflops/s, it is not far behind Titan. For those not familiar with the list, this further shows the Linpack performance chasm between the number one system and those that trail it. The rest of the top ten range from 17.59 petaflops/s at number two down to 3.1 petaflops/s at number ten. The 500th machine on the list runs at just a tick over 133 teraflops/s.

For those familiar with the list in its last form in November, you'll notice that there is only one change in the top ten. A Cray XC30 is now in place, running at 3.14 petaflops/s at an undisclosed U.S. government site. Other than this new, mysterious addition, there may not be any earth-shattering news on this Top 500 list. There are, however, some trends that we've been monitoring over the last few list iterations, and some that have evolved since November. For instance, the United States once dominated the Top 500, but it dropped from 265 systems on November's list to 233 on this 43rd edition.

Meanwhile, the number of Chinese systems is increasing. China holds the number one spot by a significant margin. It has also added 13 machines since November, bringing its total on the Top 500 to 76. To put that in some perspective, the UK has 30, France has 27 and Germany has 23. Japan has added two machines, bringing its total to 30.

When it comes to the overall list, performance is continuing to climb. The combined performance of all 500 machines is now 274 petaflops/s. That compares to 250 petaflops/s on the November list and 223 petaflops/s a year ago. That sounds like a rather noteworthy increase until one takes a look at the long-term growth line in performance…

Remember that strong performance development staircase we've steadily been climbing? Take a look at the graphic below, which uses the latest data from today's Top 500 announcement. You'll see the slight plateauing that we began to spot over the last year and a half. As our friends at TOP500 noted today, "From 1994 to 2008 [performance] grew by 90% per year. Since 2008 it only grows by 55% per year." Look closely at the list over the last couple of years and you'll see why that decline isn't more pronounced. The top tier of the list is propping it up, most notably the Tianhe-2 system, which holds 13.7% of the performance share of the entire list.

When examined as a whole, the list is falling off its historical growth curve except at the highest end. But what does this mean for end-user applications? Is high-end computing getting smarter in efficiency and software, to the point where FLOPS no longer matter so much for real-world applications? Many argue that's the case. Some will await the new HPCG benchmark and forgo Linpack altogether in favor of a more practical measure. That hasn't had an impact on this summer's list yet, but it will be interesting to watch over time.

One game changer for the historical performance trends is certainly the mighty accelerator/coprocessor. But even the accelerator story has some interesting twists and turns. A total of 62 systems use some form of accelerator or coprocessor technology, up from 53 machines on the November list. Of those, 44 use NVIDIA GPUs, 17 have deployed Xeon Phi and two use ATI Radeon as the booster of choice.

With that in mind, another phenomenon stands out. We aren't suggesting it explains the performance leveling off, but the trend in accelerator use isn't quite as strong as it used to be either, as you can see on the historical development chart below. There are many possible reasons for this. For instance, national labs and scientific computing centers tend to be among the first to experiment with new technologies. For GPUs in particular, though, this explanation doesn't fully match up. The real spike in NVIDIA-powered systems came late in 2011, quite a long time after GPU computing began to take off. A similar spike is visible for Intel when Xeon Phi landed in several shops as an experimental technology. Even with that spike, however, it's difficult to see widespread adoption.

Of course, keep in mind that a tapering off of GPU or other accelerated systems on the list doesn't necessarily mean an overall slowdown. The list covers only one segment of the HPC arena. There are many, many machines in academia and enterprise whose owners choose not to run the HPL benchmark. Even if only 20% of such machines were missing from the list, the effect would be felt in a graphic like this one. We asked Addison Snell of Intersect360 Research about the accelerator graphic, and he echoed this, noting that "Change in share in the Top 500 doesn't necessarily reflect market trends. While Intel did gain share in microprocessors in 2013 over AMD and IBM Power, we also have seen a number of HPC systems with GPUs installed, which has risen to 44% of systems installed since the beginning of 2012."

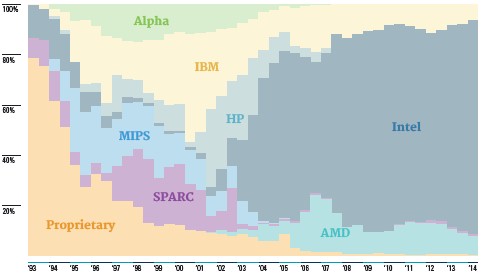

The real story developing with this list is on the chip front, and we expect it to develop further given changes at IBM in particular. A great deal more will be revealed about the nature of such shifts in November and next June. We should know definitely by the end of 2015, if the many developments at IBM, Intel, NVIDIA and elsewhere stay on schedule.

To put those shifts in context, Intel has an 85% share of the systems on the list. IBM Power is at 8%, and AMD Opteron is down three percentage points to 6%. TOP500 reports that among these systems, 96% sport six or more cores and 83% harness eight or more. To say that Intel continues to dominate is an understatement. This chart may seem stagnant over the last couple of years, but get ready. The next few years are set to bring strong winds of change, thanks to momentum behind OpenPower and perhaps even AMD. The arrival of 64-bit ARM will shake things up, as will new choices in chips. Still, expect a flat list at least through this time next year unless something completely unexpected happens. Fill in the blank on what that might be, but free, easy-to-program quantum computing systems seem the only option.

Right now, IBM's Blue Gene/Q holds four of the top ten spots, more than any other architecture. However, IBM is now focusing its efforts on OpenPower and Power more generally. Once these systems are decommissioned, along with the many other IBM systems on the list (176 currently), it's hard to say what IBM's position will be. We talked with IBM's Dave Turek this week in advance of ISC. We have an interview coming during our special coverage that will offer a sense of what's next for Big Blue in HPC, so keep an eye out for that.

On the network front, there haven't been any major changes. On this most recent list, 222 systems sport InfiniBand, up from 207 in the fall. A further 75 entries report 10 GbE, two fewer than on the last list. A total of 127 systems are outfitted with standard GbE (compared to 135 in November). There are 52 custom interconnects and 5 proprietary interconnects (which now include Cray's Aries systems, formerly counted under their own system name). The Gemini interconnect can be found on 18 systems, including, of course, Titan.

For some additional background on this summer's list, we thought it might be useful to show two figures that illustrate a few trends in the list and its participants. The first doesn't offer much in the way of differences or surprises compared to November's iteration of the Top 500. Still, it's thrilling to see industry participation rise slightly.

The figure below puts all of this in context by showing the dominant trend in terms of systems–again, not a surprise, but a useful visualization.

Of all these systems, HP has a 36% share (down from 39% in November), and IBM has 35% (up from 33% on the fall list). Cray sits in third position for vendor share with 51 systems, just a tick over 10% of the 500 machines.

What's more enlightening in those figures is the performance share. As noted above, the Tianhe-2 system alone provides over 13% of the list's performance. By vendor, IBM has a 32% performance share. Cray edged up to 18.6%, a gain of two percentage points, in part due to the new #10 government XC30. HP has more systems on the list than any other vendor, yet its performance share trails Cray's at 15.6%.

Stay tuned for our visual feature set, which goes live later this morning (CET) and showcases other subtle trends on this summer's TOP500 list.

And in the meantime, stop by the HPCwire booth to say hello. You’re welcome to bring a pot of coffee with you. I take it with milk, no sugar. And I will drink it all. Thank you.