Over at the Typhoon Computing blog, Michel Müller addresses a topic that is top of mind for many HPC programmers: porting code to accelerators.

Fortran programmers porting their code to GPGPUs (general-purpose graphics processing units) have a new tool at their disposal: Hybrid Fortran. Müller shows how this open-source framework can improve portability without sacrificing performance or maintainability.

From the blog (editor’s note: the site was down at the time of publication):

Say you are at the outset of programming an HPC application. No problem, right? You know how the underlying machine works in terms of memory architecture and ALUs. (Or not? Well, that's no problem either; I hear the compilers have become so good that they will surely figure it out.) You know which numerical approximation will map your problem most efficiently. You know all about Roofline performance modelling, so you can verify whether your algorithm performs on the hardware the way you expected. You know what you're supposed to do when you encounter data parallelism. So – let's sit down and do it!

But wait!

You hear that your organisation is ordering a new cluster. In order to get closer to Exascale, this cluster will sport these fancy new accelerators. So all new HPC software projects should evaluate if and how they can make use of coprocessors. You start reading up on the accelerator landscape: OpenCL, CUDA, OpenACC, OpenMP, ArrayFire, Tesla, Intel MIC, Parallella… Your head starts spinning from all this stuff – these hardware and software tools overlap a lot, but they also have significant differences. Above all, they're very different from the x86 CPU architecture. Why is that?

It essentially comes down to the fact that in 2005, the free lunch was over.

By "free lunch", Müller is referring to the end of the era in which software got faster for free as processor clock rates kept rising. When clock rates topped out, chipmakers began cramming multiple cores onto a chip, and the multicore era was born. This put the burden of harnessing that parallelism on the programmer. But if you have to write multithreaded code anyway, why not get the most out of it, asks Müller, or as he puts it: "Why care about six or eight threads if we can have thousands?"

From there, Müller walks step by step through other potential roadblocks, such as applications that are limited by memory bandwidth, the slow PCI Express bus, and the temptation to let scientists keep using the old (non-accelerated) version of your code on existing CPU-only machines.
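The first two roadblocks are usually reasoned about with the Roofline model mentioned in Müller's post: a kernel's attainable performance is the lower of the compute peak and the product of its arithmetic intensity (FLOP per byte) and the memory bandwidth, while the PCI Express cost is simply data volume divided by link bandwidth. The Python sketch below illustrates that arithmetic; the function names and all hardware figures are hypothetical placeholders, not specifications of any particular GPU.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float, intensity: float) -> float:
    """Roofline: the lower of the compute roof and the memory roof (intensity in FLOP/byte)."""
    return min(peak_gflops, bandwidth_gbs * intensity)


def ridge_point(peak_gflops: float, bandwidth_gbs: float) -> float:
    """Arithmetic intensity (FLOP/byte) above which a kernel becomes compute-bound."""
    return peak_gflops / bandwidth_gbs


def transfer_seconds(num_bytes: float, link_gbs: float) -> float:
    """Time to move data across a host-device link such as PCI Express."""
    return num_bytes / (link_gbs * 1e9)


if __name__ == "__main__":
    # Hypothetical device: 1000 GFLOP/s peak, 200 GB/s memory bandwidth, 8 GB/s link.
    print(ridge_point(1000, 200))              # 5.0 FLOP/byte
    print(attainable_gflops(1000, 200, 0.5))   # 100.0, i.e. memory-bound
    print(transfer_seconds(1e9, 8))            # 0.125 s for 1 GB over the link
```

With these made-up numbers, a stencil-like kernel at 0.5 FLOP/byte reaches only a tenth of the compute peak, and moving 1 GB across the bus takes longer than many individual kernels run, which is why data should stay on the device between kernels.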

This is all leading up to the ultimate dilemma: what if increased portability comes at the expense of performance and maintainability?

For code written in Fortran, there is hope in the form of an open-source, directive-based framework called Hybrid Fortran. The project's GitHub page describes it as "a way for you to keep writing your Fortran code like you're used to – only now with GPGPU support."

In this approach, a Python-based preprocessor performs the necessary transformations at compile time, so the transformation itself adds no runtime overhead. It parses the annotations together with your Fortran code structure, declarations, accessors and procedure calls, and then writes separate versions of your code – one for CPU with OpenMP parallelization and one for GPU with CUDA Fortran.
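The idea of emitting two back ends from one annotated source can be illustrated with a toy generator. This is a minimal Python sketch, not Hybrid Fortran's implementation or annotation syntax: the `ParallelRegion` class and the `emit_cpu` / `emit_gpu` functions are hypothetical, and the output is a loop fragment rather than a complete Fortran subroutine. The CPU flavour keeps explicit loops under an OpenMP directive; the GPU flavour replaces the loops with CUDA Fortran thread indices.

```python
import textwrap
from dataclasses import dataclass


@dataclass
class ParallelRegion:
    """Hypothetical stand-in for an annotated region: a loop body over an (i, j) domain."""
    body: str        # Fortran statement(s) that use the indices i and j
    nx: str = "nx"   # name of the domain-size variable in x
    ny: str = "ny"   # name of the domain-size variable in y


def emit_cpu(region: ParallelRegion) -> str:
    """CPU flavour: explicit loops over the domain, parallelized with OpenMP."""
    body = textwrap.indent(region.body, " " * 4)
    return "\n".join([
        "!$OMP PARALLEL DO PRIVATE(i)",
        f"do j = 1, {region.ny}",
        f"  do i = 1, {region.nx}",
        body,
        "  end do",
        "end do",
        "!$OMP END PARALLEL DO",
    ])


def emit_gpu(region: ParallelRegion) -> str:
    """GPU flavour: loops replaced by CUDA Fortran thread indices plus a bounds guard."""
    body = textwrap.indent(region.body, " " * 2)
    return "\n".join([
        "i = (blockIdx%x - 1) * blockDim%x + threadIdx%x",
        "j = (blockIdx%y - 1) * blockDim%y + threadIdx%y",
        f"if (i <= {region.nx} .and. j <= {region.ny}) then",
        body,
        "end if",
    ])


if __name__ == "__main__":
    region = ParallelRegion(body="c(i, j) = a(i, j) + b(i, j)")
    print(emit_cpu(region))
    print()
    print(emit_gpu(region))
```

Because this generation happens before compilation, each compiler only ever sees plain OpenMP or CUDA Fortran code, which is the reason a preprocessor of this kind adds no runtime cost of its own.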

The programmer only needs to add annotations answering two questions:

(1) Where is the code to be parallelized? (Can be specified for CPU and GPU separately.)

(2) Which symbols depend on the parallelized domain and therefore need their dimensions transformed?
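To make the second question concrete: a variable written per column, such as `t(nz)`, may need the domain dimensions added in one generated version, and every access to it must then be rewritten to match. The Python sketch below shows only that mechanical step. The function names, the `real` type, and the `extend` flag are hypothetical illustrations; it does not reproduce Hybrid Fortran's actual rules for deciding when a symbol is extended, and the regex is adequate only for this toy case.

```python
import re


def transform_declaration(name: str, dims: list[str], domain: list[str], extend: bool) -> str:
    """Build a Fortran-style declaration, prepending the domain dimensions if requested."""
    all_dims = [*domain, *dims] if extend else list(dims)
    return f"real :: {name}({', '.join(all_dims)})"


def transform_accessors(code: str, name: str, domain_indices: list[str]) -> str:
    """Rewrite each access name(...) into name(<domain indices>, ...).

    A regex is enough for this toy example; a real tool needs a proper Fortran parser.
    """
    pattern = re.compile(rf"\b{re.escape(name)}\(")
    replacement = f"{name}({', '.join(domain_indices)}, "
    return pattern.sub(lambda _match: replacement, code)


if __name__ == "__main__":
    # Hypothetical column variable t(nz) that depends on the (nx, ny) domain.
    print(transform_declaration("t", ["nz"], ["nx", "ny"], extend=False))  # real :: t(nz)
    print(transform_declaration("t", ["nz"], ["nx", "ny"], extend=True))   # real :: t(nx, ny, nz)
    print(transform_accessors("t(k) = 0.5 * t(k - 1)", "t", ["i", "j"]))
    # t(i, j, k) = 0.5 * t(i, j, k - 1)
```

Putting the domain indices first keeps `i` as the fastest-varying index in Fortran's column-major layout, which generally suits coalesced GPU memory access.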

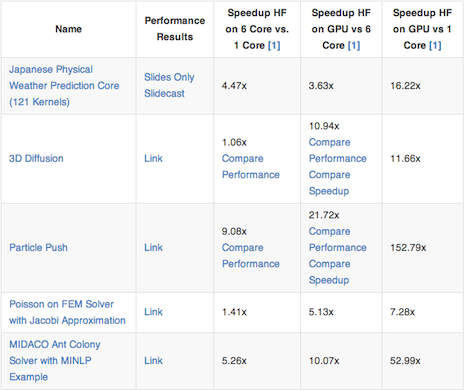

Müller charts the performance differences of this approach below:

[1] Where available, the comparison is against a reference C version; otherwise it is against the Hybrid Fortran CPU implementation. Hardware (TSUBAME 2.5): Kepler K20x GPU, Westmere Xeon X5670 CPU. All results were measured in double precision. The CPU run was limited to one socket using thread affinity 'compact' with 12 logical threads. For the CPU, the Intel compilers ifort / icc were used with the '-fast' setting; for the GPU, the PGI compiler with '-fast' and CUDA compute capability 3.x. All GPU results include the host-to-device memory copy time.
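Counting the host-to-device copy makes the GPU numbers more conservative, since the copy time is added to the denominator of the speedup. A minimal Python sketch of that accounting, using made-up timings rather than Müller's measurements (the `speedup` function is a hypothetical helper):

```python
def speedup(t_reference_s: float, t_kernel_s: float, t_h2d_s: float = 0.0) -> float:
    """Speedup over the reference run; pass t_h2d_s to charge host-to-device copy time to the GPU."""
    return t_reference_s / (t_kernel_s + t_h2d_s)


if __name__ == "__main__":
    # Made-up timings: 10 s reference, 1 s GPU kernel, 1 s host-to-device copy.
    print(speedup(10.0, 1.0))        # 10.0 (kernel time only)
    print(speedup(10.0, 1.0, 1.0))   # 5.0 (copy time included, as in the methodology above)
```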

Müller didn't just stumble upon this solution; he is the primary developer of the codebase. At the Tokyo Institute of Technology, he ported the physical core of Japan's national next-generation weather prediction model to GPGPU. He ran into many of the problems he describes in his post, and solving them led to the development of Hybrid Fortran. Still at the Tokyo Institute of Technology, he is now planning to port the internationally used open-source weather model WRF to Hybrid Fortran.