High performance computing (HPC) continues to make inroads into the oil and gas industry, which is now one of the main commercial users of supercomputing, along with financial services. In the past year, energy giants BP, Total and Eni have rolled out large-scale supercomputing centers, and smaller players are eager to follow suit.

According to the industry-focused publication PennEnergy, it is no longer unusual for energy companies to hold data portfolios of tens or even hundreds of petabytes. This creates a compelling need for HPC for data acquisition, analysis and storage, but that’s just the tip of the iceberg.

The high-stakes nature of oil and gas development is putting pressure on energy companies to shorten time to oil. As global demand climbs, the industry is looking to increase the yield of mature assets while competing to pursue unconventional exploration. In the words of one oil and gas-focused vendor, “[exploration is] becoming deeper, harsher, more remote and subsequently more expensive.” The intensity of these challenges is driving HPC adoption, as companies turn to technology to accelerate return on investment (ROI) and minimize risk.

A recent case study from the Council on Competitiveness further details the benefits of advanced computing to oil recovery efforts. Over the last few decades, the recovery rate has risen from 30 percent to 50 percent, aided by HPC technologies such as enhanced imaging and modeling.

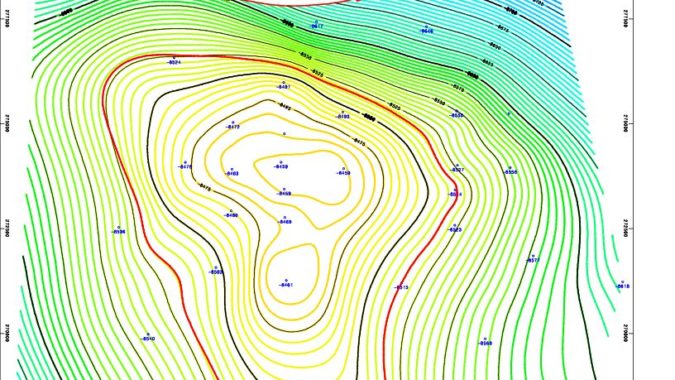

Supercomputing has moved out of the research center and into the field, and is now an essential tool for reservoir engineers and earth scientists. From seismic processing and reverse time migration to reservoir modeling and monitoring, a carefully executed HPC strategy can support these workloads as well as others, such as meeting the compliance mandates created by new federal regulations.