Nearly one year after the Department of Energy inked a $70 million contract for the exascale-relevant Cori system, news of a second, smaller system has come to light. In an interesting turn of events, the National Energy Research Scientific Computing Center (NERSC) revealed today that it will be acquiring a “Cori Phase 1” system, a 10-cabinet Cray XC40 machine outfitted with Intel “Haswell” parts to be installed this summer.

The new system is in addition to the original Cori contract – the outcome of the NERSC-8/Trinity procurement partnership – which is still on track for mid-2016 delivery. Named after American biochemist Gerty Cori, the next-gen Cray XC system, with 9,300 self-hosted Intel Knights Landing (KNL) nodes, takes a manycore approach that is a distinct departure for the leading edge of supercomputing.

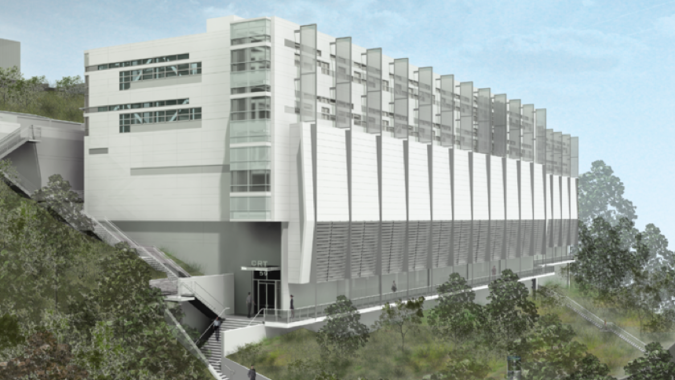

The Phase 1 Cori will employ 16-core E5 v3 Xeons running at 2.3 GHz, the follow-on to the Intel “Ivy Bridge” processors that drive Edison. The Cray XC40 will be the first NERSC system installed in the newly built Computational Research and Theory (CRT) facility at Lawrence Berkeley National Laboratory. Hopper, the Cray XE6 that was NERSC’s first petascale supercomputer, and another system, Carver, will not make the move to CRT; instead, they will be retired at the Oakland Scientific Facility (OSF) in downtown Oakland.

Speaking with HPCwire, Jay Srinivasan, NERSC’s Computational Systems Group lead, characterized the Haswell-based Cori as a familiar system that will bridge the gap between Hopper’s retirement and the KNL-based Cori in 2016. The upgraded Xeons mean that users can continue to run their applications without interruption in support of their efforts to develop new energy sources, improve energy efficiency, and understand climate change.

While NERSC was reluctant to divulge total peak performance, Srinivasan reported that the Phase 1 system will offer its users roughly the same sustained application performance as Hopper, which has a peak performance of 1.28 petaflops. The Lustre file system and the dragonfly topology based on the Aries interconnect are the same as those of NERSC’s current Edison supercomputer (a Cray XC30). Each of the more than 1,400 Haswell compute nodes has 128 gigabytes of memory, twice the per-node memory of Edison.
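To put the memory figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above; the node count is a lower bound ("more than 1,400"), the decimal unit conversion is an assumption, and the variable and function names are purely illustrative.

```python
# Figures quoted in the article; the node count is a lower bound ("more than 1,400").
HASWELL_NODES_MIN = 1_400
MEM_PER_NODE_GB = 128


def aggregate_memory_tb(nodes: int, mem_per_node_gb: int) -> float:
    """Total memory in terabytes, assuming decimal units (1 TB = 1,000 GB)."""
    return nodes * mem_per_node_gb / 1_000


# "Twice the per-node memory of Edison" implies half this amount per Edison node.
edison_mem_per_node_gb = MEM_PER_NODE_GB // 2

total_tb = aggregate_memory_tb(HASWELL_NODES_MIN, MEM_PER_NODE_GB)
print(f"Phase 1 aggregate memory: at least {total_tb:.1f} TB")
print(f"Implied Edison per-node memory: {edison_mem_per_node_gb} GB")
```

Under these assumptions, the Phase 1 system carries at least about 179 terabytes of aggregate memory across its compute nodes.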

Beyond ensuring that there is no dip in compute supply for DOE users, other characteristics, from the processors’ enhanced instruction set to the additional memory bandwidth and accelerated application I/O, offer the opportunity “to explore new workflows and paths to computation,” said Srinivasan. Users who run data-intensive workloads on other NERSC systems now have the option to run on a Cray platform, he added.

“They can start off by using the Haswell machine the same way they use Edison,” explained Srinivasan. “They can take their code and start running it on day one. Then there are other features that we have on there, the interactive nodes, the batch system policies, that allow for high-throughput and serial workflows and we will allow the compute nodes to interact with external databases, which a lot of data-intensive workflows need. They can then start bringing more data-intensive workflows into the machine, and start using it that way. They can have a firm foundation of familiarity and then start building on newer ways of using the system. Once we have Cori integrated, that will really provide a whole new computing paradigm, melding data-intensive computing with traditional computing.”

In its official press release, NERSC details a number of advanced features designed to benefit data-intensive applications, including:

* Large number of login/interactive nodes to support applications with advanced workflows.

* Immediate access queues for jobs requiring real-time data ingestion or analysis.

* High-throughput and serial queues to handle a large number of jobs for screening, uncertainty quantification, genomic data processing, image processing and similar parallel analysis.

* Network connectivity that allows compute nodes to interact with external databases and workflow controllers.

* The first half of an approximately 1.5 terabytes/second NVRAM-based Burst Buffer for high-bandwidth, low-latency I/O.

* A Cray Lustre-based file system with over 28 petabytes of capacity and 700 gigabytes/second I/O bandwidth.

When work on Cori is finalized, 9,300 Knights Landing compute nodes and more than 1,900 Haswell nodes will be lashed together on the same high-speed network, and NERSC scientists will see application I/O acceleration double relative to Phase 1. This speedup is owed to Cray’s DataWarp “Burst Buffer” technology, which uses NVRAM to move data more quickly between processor and disk. The Cori Phase 1 burst buffer will feature approximately 750 terabytes of capacity and approximately 750 gigabytes/second of I/O bandwidth. The completed Cori supercomputer will double these specs, boosting capacity to more than 1.5 petabytes and I/O bandwidth to more than 1.5 terabytes/second.
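The burst buffer numbers can be checked with a short Python sketch. The capacity and bandwidth values are the approximate figures quoted above; the `BurstBuffer` class and its method names are hypothetical, and the fill-time estimate assumes sustained peak bandwidth and decimal units, which real workloads will not match exactly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BurstBuffer:
    """Illustrative model of a burst buffer tier (names are hypothetical)."""
    capacity_tb: float      # usable capacity in terabytes
    bandwidth_gb_s: float   # peak I/O bandwidth in gigabytes/second

    def scaled(self, factor: float) -> "BurstBuffer":
        """Return a buffer with capacity and bandwidth multiplied by factor."""
        return BurstBuffer(self.capacity_tb * factor, self.bandwidth_gb_s * factor)

    def fill_seconds(self) -> float:
        """Idealized time to write the full capacity at peak bandwidth."""
        return self.capacity_tb * 1_000 / self.bandwidth_gb_s


# Approximate Phase 1 figures from the article.
phase1 = BurstBuffer(capacity_tb=750, bandwidth_gb_s=750)
# The completed system is described as doubling both figures.
full_cori = phase1.scaled(2)

for name, bb in (("Phase 1", phase1), ("Full Cori", full_cori)):
    print(f"{name}: {bb.capacity_tb / 1_000:.2f} PB, "
          f"{bb.bandwidth_gb_s / 1_000:.2f} TB/s, "
          f"fill time ~{bb.fill_seconds():.0f} s")
```

Because capacity and bandwidth double together, the idealized time to fill the buffer stays constant at roughly 1,000 seconds; what the doubling buys is the ability to absorb twice as much data in that same window.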

The staff at NERSC emphasized how the whole in this case is more than the sum of its parts, offering unique benefits to the large DOE user base.

“The line between big data and high performance computing is really very blurred, especially in computational science,” said Katie Antypas, head of NERSC’s User Services Department, in a prepared statement. “The combined Cori system is the first system to be specifically designed to handle the full spectrum of computational needs of DOE researchers, as well as emerging needs in which data- and compute-intensive work are part of a single workflow. For example, a scientist will be able to run a simulation on the highly parallel Knights Landing nodes while simultaneously performing data analysis using the Burst Buffer on the Haswell nodes. This is a model that we expect to be important on exascale-era machines.”

NERSC and Cray also announced that they are working together on two ongoing R&D efforts aimed at enabling data-intensive science. The first project seeks to maximize Cori’s data potential by enabling higher-bandwidth network capability to the outside world; the second is focused on putting Linux container virtualization functionality on Cray compute nodes to allow custom software stack deployment.

The price of the new system was not disclosed, but it does fall under a separate contract from the original Cori win.