While the shifting architectural landscape of elite supercomputing gets much of the spotlight, especially around TOP500 time, cluster computing has grown to comprise more than three-quarters of the HPC market. At a joint Cray-IDC webinar held earlier today, the partners discussed how clusters, and specifically scale-out “cluster supercomputers,” are evolving in ways that can benefit from supercomputing technology.

Steve Conway, research vice president of High Performance Computing at IDC, made the point that clustered servers (clusters) have democratized the supercomputing market. Clusters are the dominant type of HPC systems today, owing to the compelling price/performance that is enabled by their heavy reliance on industry-standard technologies. According to IDC market research, spending on all supercomputers about doubled from 2009 to 2014, and clusters captured an ever-larger share of this growing market segment. In 2014, clusters accounted for over 85 percent of worldwide HPC server revenues, up from just 33.5 percent five years prior.

“Although tightly-coupled supercomputers that capture a lot of the media headlines are indispensable for the most challenging HPC problems, most big problems can be handled very well by large scale-out clusters,” said Conway. The vast majority of big supercomputers, ones that sell for more than $500,000, are clusters — IDC calls these “cluster supercomputers.”

Conway pointed out that in this high-end >$500,000 segment, the average price point in 2014 was a little over $2 million, noting that this of course includes some supercomputers that cost more than $100 million. “In the former era of monolithic vector supercomputers, the biggest machine you could buy cost about $30 million because they really weren’t scalable systems,” said Conway, adding that “today’s clusters can scale way up and way down.” The average workgroup cluster cost just $19,000 in 2014.

Over the past five years, IDC figures show that average core counts have almost doubled. This means that there are more parts to manage in the typical cluster and a higher probability of having one or more of the parts fail.
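The link between part count and failure risk can be made concrete with a back-of-the-envelope calculation. The sketch below is illustrative only: it assumes parts fail independently, and the per-part failure probability and part counts are hypothetical values, not IDC figures.

```python
def prob_at_least_one_failure(n_parts: int, p_fail: float) -> float:
    """Probability that at least one of n_parts fails in a given period.

    Assumes every part fails independently with the same probability
    p_fail, which is a simplification of real cluster behavior.
    """
    return 1.0 - (1.0 - p_fail) ** n_parts


if __name__ == "__main__":
    p = 1e-4  # hypothetical per-part failure probability for the period
    for n in (10_000, 20_000):  # hypothetical part counts, before and after doubling
        risk = prob_at_least_one_failure(n, p)
        print(f"{n} parts: {risk:.1%} chance of at least one failure")
```

Under these assumed numbers, doubling the part count raises the chance of at least one failure from roughly 63 percent to roughly 86 percent, which is why resiliency becomes more pressing as clusters grow.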

“But escalating core counts are only one of the cluster management challenges users consistently point out to us in research studies,” Conway observed. “The challenges pretty much begin with the fact that clusters are made up of independent computers that were not originally designed to work with each other. You really need outstanding networking and software technologies to overcome this deficit and to coax strong performance out of clusters.

“Other important challenges include heterogeneous components, especially the addition of accelerators and coprocessors, but also, on the software side, the components of the software stack have become very numerous and very varied. The basic cluster architecture doesn’t really vary, and that’s a great benefit, but vendors offer a whole spectrum of configuration choices, and that adds to the management challenge as well. So does data movement and storage, especially given the growing importance of big data workloads.”

Storage vendors cite mixed I/O as their biggest challenge, that is, having to deal with both batch and streaming data on the same cluster.

“As you’d expect, the cluster challenges are exacerbated in cluster-supercomputers,” Conway shared. “It’s easy to build a really big cluster, but it’s not easy to build one that works at large scale. Only a few vendors in the world have mastered that art, Cray being one of them. The company figured out how to make really big supercomputers work decades before it got into the cluster business, and they’ve been exploiting that expertise and experience for the cluster-supercomputer products that they make now.

“To make these mega-clusters effective in production settings, you need to deal with reliability and resiliency in situations where one or more parts may be in failure mode. Otherwise you can lose on your long-running jobs, and that’s painful. You need to monitor wellness and anticipate parts failures before they happen so the system can do workarounds, and you have to keep the data moving to keep the processors busy. Power and cooling is another big challenge.”

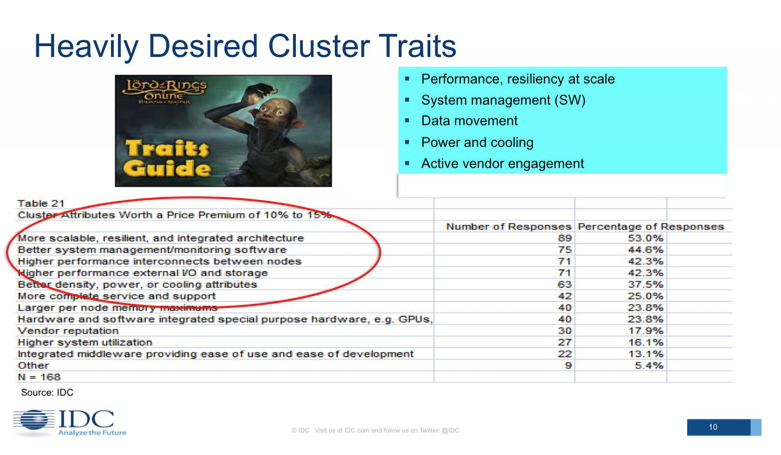

In a recent study, buyers listed the improvements that they most desired. At the top of the list were tighter integration of the architecture, more resiliency, more capable management software, better interconnects and I/O and storage improvements.

“More scalable, resilient and integrated architecture” was the most cited response, getting the attention of 53 percent of those surveyed.

Near the bottom of the list, not surprisingly, was higher system utilization. The average cluster or supercomputer runs at about 90 percent utilization or better, compared with about 30 percent in the enterprise server market. These numbers help explain the very low adoption rates for virtualization and server consolidation in HPC.
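A rough calculation shows why the utilization gap matters for consolidation. The sketch below is a simplification under stated assumptions: the function name, the 90 percent target and the 100-server fleet are hypothetical, and the model ignores memory, I/O and peak-load constraints that real consolidation planning would have to consider.

```python
def servers_after_consolidation(n_servers: int, utilization_pct: int,
                                target_pct: int = 90) -> int:
    """Servers needed if the same work is packed up to target_pct utilization.

    Integer percentages keep the arithmetic exact; the result is rounded up
    because a fraction of a server cannot be deployed.
    """
    busy_units = n_servers * utilization_pct
    return (busy_units + target_pct - 1) // target_pct  # ceiling division


if __name__ == "__main__":
    # Hypothetical fleets of 100 servers each.
    print("enterprise at 30%:", servers_after_consolidation(100, 30))
    print("HPC at 90%:", servers_after_consolidation(100, 90))
```

With these assumed inputs, the enterprise fleet shrinks from 100 servers to 34, while the HPC fleet stays at 100. There is little idle capacity in HPC for virtualization to reclaim.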

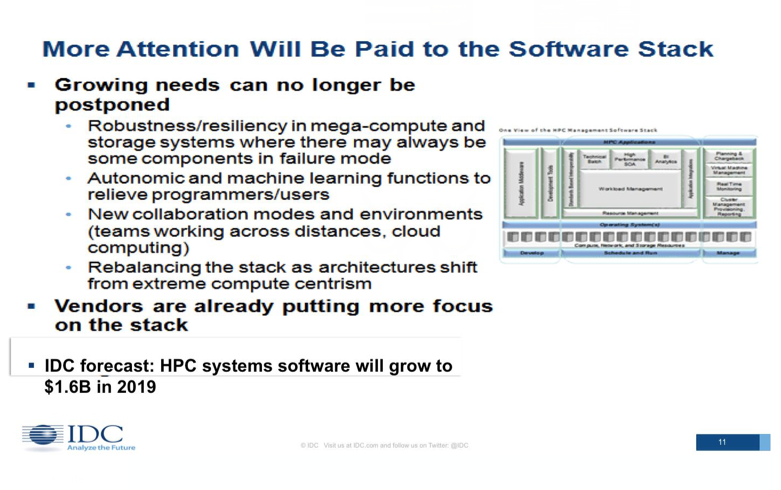

In line with user pain points, the software stack will get much more attention, according to the IDC analyst, because clusters are growing fast in size and complexity. Areas ripe for improvement include robustness; autonomic functioning and machine learning to relieve programmers, users and administrators; collaboration tools; and rebalancing of the stack to align with the rebalancing of workloads towards greater data-centricity.

IDC sees this as an attractive market for vendors and the numbers back this up. HPC systems software is on track to grow to $1.6 billion in 2019 and most of this will go to clusters since they represent most of the HPC market.

Increased heterogeneity is also driving cluster complexity as the market seeks alternatives to x86 processors that offer better data-level parallelism. The opening has allowed accelerator/coprocessor adoption to ramp up quickly, with site deployment going from 9 percent to 77 percent between 2008 and 2013, but as IDC and other analysts have noted, the growth is wider than it is deep. Many sites have a small number of such devices used for exploratory or experimental purposes rather than production computing. Interestingly, IDC has found that industrial firms tend to buy fewer accelerator and coprocessor parts in relation to x86, but deploy them in production scenarios at higher rates.

Conway also commented on another fast-growth market for HPC clusters: high-performance data analysis (HPDA). It’s not very big yet, at just under a billion dollars on the server side, but it’s headed to $2.6 billion in 2018. That growth rate is about three times that of overall HPC server sales. Some of it is organic, owing to existing HPC sites that are doing data-intensive simulation and adding analytics to the mix, but some of it is brand new, coming from commercial companies adopting HPC purely for analytics because their enterprise technology cannot handle it.

A slide from IDC lists the major reasons that this newer part of the market is turning to HPC for analytics. As Conway put it, “there is no place else to go with enterprise technology.”

The driver that doesn’t get talked about as much as the others is the third bullet point: variability. While the volume of data can be thought of as rows in a table, variables can be thought of as the columns, Conway observed.

The major background trend is the move away from the static searches that characterized the past two decades to an era of pattern discovery.

In a statistic that may come as a surprise, IDC’s findings show that a full two-thirds of HPC sites are performing high-performance data analysis work, which includes data-intensive simulation and/or advanced analytics, with 30 percent of all HPC cycles spent on these “big data” tasks. The study also showed that Hadoop is about as widely used in HPC as it is in the commercial market, and that it is just starting to come into its own because of the addition of hooks that allow it to be used productively in HPC. HPDA users’ wish list for future clusters includes better interconnects, I/O and storage.

In summary, while the entire cluster segment is facing issues relating to heterogeneity, configurations and data delivery, cluster supercomputers are facing additional pain points, which IDC lists as:

- Performance at scale.

- Reliability/resiliency at scale.

- System wellness monitoring.

- Data movement.

- TCO/opex (especially power/cooling).

In part two of this feature, we will take a look at how Cray’s experience with high-end supercomputing has evolved into a strategy to address these needs.