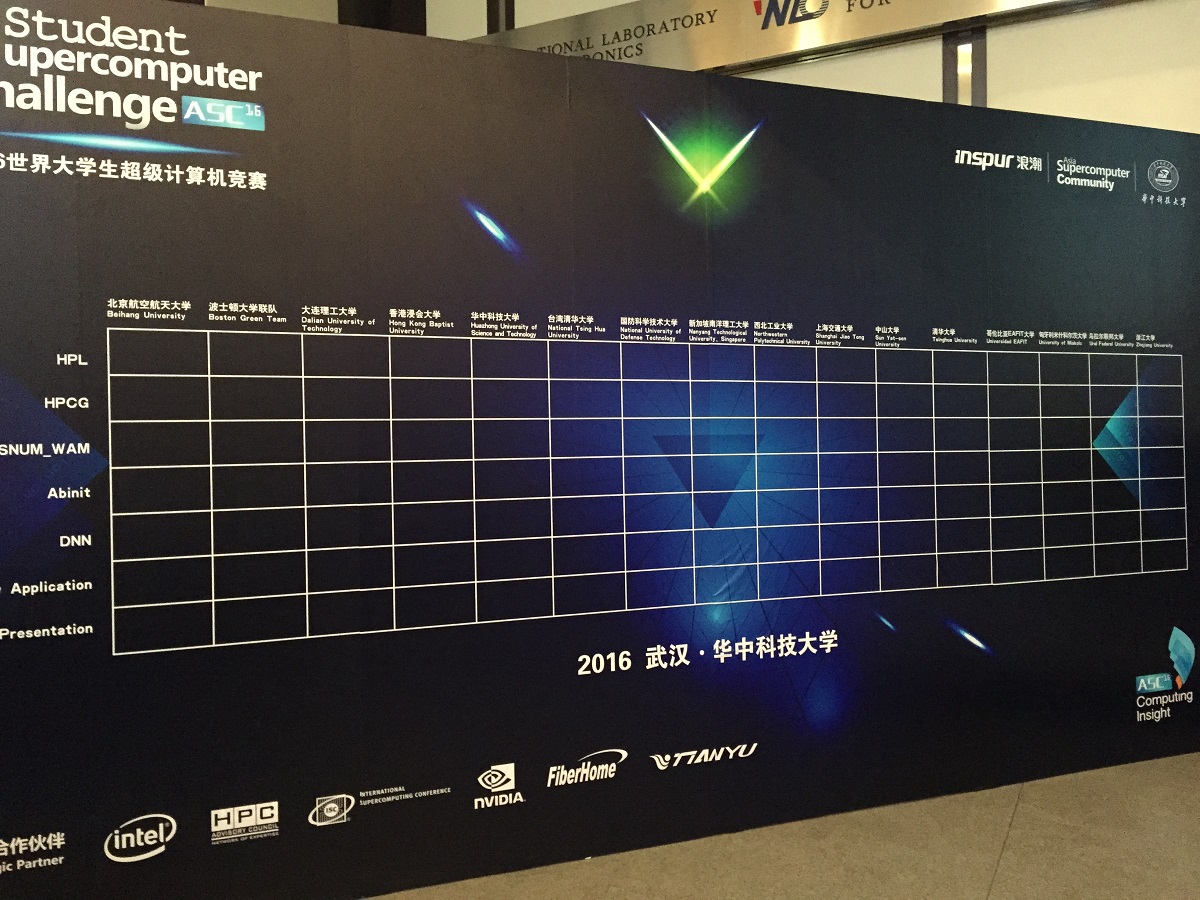

The fifth annual Asia Student Supercomputer Challenge (ASC16) got off to an exciting start this morning at the Huazhong University of Science and Technology (HUST) in Wuhan, the capital of Hubei province, China. From an initial pool of 175 teams, representing 148 universities across six continents, 16 teams advanced through the preliminary rounds into the final round, including returning champion and “triple crown” team, Tsinghua University, and the only US team to make it into the competition, the Boston Green Team.

With each iteration of the Asia Student Supercomputer Challenge and its “sister” events at SC and ISC, the roster of competitors becomes more formidable. Many of the teams have accumulated a number of wins already, but there are new entrants too, including Hong Kong Baptist University and Dalian University of Technology, which have earned their place in this final based on their high rankings in the preliminary rounds. There’s also a steady influx of new students, as the competition torch gets passed on.

The returning teams that HPCwire spoke with are aiming to put their experience to good use. Students from the Boston Green Team (which includes students from Boston University and Northeastern) gave a lot of consideration to the cluster design process. Some of the teams brought in Nvidia Tesla K80 GPUs at their own expense, but the Boston team opted to outfit their Inspur NF5280M4 server with the standard parts that Inspur and Intel supplied, including the Xeon E5-2650 v3 processor and the Intel Xeon Phi-31S1 coprocessor cards.

We also spoke with the returning champs from Tsinghua University, who had a somewhat different point of view. The team leader said that because the teams will all be using similar hardware with the same CPU, they will be forced to focus on application testing and development. “There is not that large a difference in architecture, so I think we have to beat them by software and that’s a really big challenge.”

The purpose of the contest is for each team to design its cluster for the best application performance within the power consumption mandates. Per the contest regulations, power consumption must be kept under 3,000 watts or the result of a given task becomes invalid (except for the e-Prize, which we’ll get to in a moment). While teams can build larger clusters, and indeed some teams on the contest floor have ten nodes, the hard power limit constrains how many of those nodes can be harnessed.

Boston Green Team members Winston Chen and John Smith pointed out that five standard nodes (each with two Xeon CPUs and a Phi coprocessor) run right at the 3,000-watt limit. Last year at ASC15, the team had an eight-node CPU-only cluster that hit 3,000 watts. This year, they have six Phi-accelerated nodes: five to run their workloads and a spare.
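
The node-count trade-off the team describes comes down to simple division. The sketch below is illustrative only: the per-node wattages are inferred by dividing the 3,000-watt cap by the node counts quoted above, not measured figures, and the function name `max_nodes` is hypothetical.

```python
# Illustrative power-budget arithmetic. Per-node wattages are inferred from
# the article's figures (cap divided by node count), not measured values.
POWER_CAP_W = 3000


def max_nodes(per_node_watts: float, cap_watts: float = POWER_CAP_W) -> int:
    """Largest number of identical nodes whose combined draw fits the cap."""
    if per_node_watts <= 0:
        raise ValueError("per_node_watts must be positive")
    return int(cap_watts // per_node_watts)


# Rough per-node draw implied by the team's two configurations.
phi_node_watts = POWER_CAP_W / 5  # five Phi-accelerated nodes at the limit
cpu_node_watts = POWER_CAP_W / 8  # eight CPU-only nodes at the limit (ASC15)

print(max_nodes(phi_node_watts))  # nodes that fit with a Phi in each node
print(max_nodes(cpu_node_watts))  # nodes that fit with CPUs only
```

On these inferred numbers, adding a Phi card to every node costs roughly three nodes of headroom, which is why accelerator choice and node count have to be decided together.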

In the five years since its inception, ASC has developed into the largest student supercomputing competition and is also one with the highest award levels. During the four days of the competition, the 16 teams at ASC16 will race to conduct real-world benchmarking and science workloads as they vie for a total of six prizes worth nearly $40,000.

The application set includes HPL, HPCG, MASNUM, ABINIT, DNN and a mystery application; students must also deliver a team presentation, on which they are evaluated. The ASC program committee is dedicated to making the program as “life-like” as possible. “ASC provides a learning platform for students to get hands-on experience through the use of high performance computers,” observes Professor Pak-Chung Ching, Chinese University of Hong Kong. “But training supercomputing talents is more than that…they should be trained to understand a complex computational problem, break it down and then use computers to solve different types of problems from a professional perspective. ASC provides a platform for students to participate in practical projects as well as an opportunity to share experience and gain knowledge.”

The Applications

Students will be tasked with running two benchmarking applications: the High Performance LINPACK (HPL) benchmark, currently used to rank the TOP500 computing systems, and High Performance Conjugate Gradients (HPCG), an emerging international standard that exercises computational and data access patterns that are representative of a broad range of modern applications. The authors of HPCG embrace both HPL and HPCG as bookends that enable application teams to assess the full balance of a system, including processor performance, memory capacity, memory bandwidth, and interconnection capability.
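
To give a feel for the kind of work HPCG measures, here is a minimal conjugate gradient solver on a small sparse system. It is a teaching sketch, not the benchmark: the real HPCG uses a preconditioned solver on a far larger 3D problem spread across nodes, and the 1D tridiagonal matrix and function names here are assumptions chosen for brevity.

```python
# Minimal conjugate gradient (CG) solver on a sparse tridiagonal system.
# Illustration only; this is not the HPCG benchmark code.


def tridiag_matvec(x):
    """Multiply the 1D Poisson matrix (2 on the diagonal, -1 beside it) by x."""
    n = len(x)
    y = [0.0] * n
    for i in range(n):
        y[i] = 2.0 * x[i]
        if i > 0:
            y[i] -= x[i - 1]
        if i < n - 1:
            y[i] -= x[i + 1]
    return y


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def conjugate_gradient(matvec, b, tol=1e-10, max_iter=1000):
    """Solve A x = b for a symmetric positive definite A given as matvec."""
    x = [0.0] * len(b)
    r = list(b)  # residual b - A x, with the initial guess x = 0
    p = list(r)  # first search direction
    rs_old = dot(r, r)
    for _ in range(max_iter):
        if rs_old ** 0.5 < tol:
            break
        ap = matvec(p)
        alpha = rs_old / dot(p, ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        rs_new = dot(r, r)
        p = [ri + (rs_new / rs_old) * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x


b = [1.0] * 8
x = conjugate_gradient(tridiag_matvec, b)
residual = [bi - yi for bi, yi in zip(b, tridiag_matvec(x))]
print(max(abs(v) for v in residual))  # largest remaining error in A x = b
```

Each iteration is dominated by a sparse matrix-vector product and a few dot products, so performance depends far more on memory bandwidth and data movement than on raw floating-point throughput. That is the contrast with the dense linear algebra in HPL.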

Teams will run three science applications: MASNUM, a surface wave numerical model; ABINIT, a materials simulation package; and a mystery application, which will be announced on the day of the final.

e-Prize for Deep Learning Prowess

Even if teams have opted to forgo using Phis in their cluster configuration, all teams get the chance to access the Phi MIC (Many Integrated Core) architecture when they compete for this year’s e-Prize, which requires that students set up and deploy a deep neural network (DNN). After developing the DNN on a Phi platform, teams will optimize the DNN algorithm using a remote login to Phi-based Tianhe-2 nodes.

The cluster provided for the e-Prize has eight Phi nodes, each comprising two CPUs (Intel Xeon E5-2692 v2, 12 cores, 2.20GHz) and three Xeon Phi cards (Intel Xeon Phi 31S1P, 57 cores, 1.1GHz, 8GB memory). The Tianhe-2 network employs a custom high-speed interconnect with a bandwidth between nodes of 160 Gbps. There is no power consumption limit for this part of the contest.
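
For a sense of scale, the per-node figures above can be multiplied out to cluster totals. This is plain arithmetic on the numbers quoted in the paragraph; the variable names are illustrative.

```python
# Aggregate resources of the e-Prize cluster, using the per-node figures
# quoted in the article.
NODES = 8
CPUS_PER_NODE, CORES_PER_CPU = 2, 12
PHIS_PER_NODE, CORES_PER_PHI, PHI_MEM_GB = 3, 57, 8

cpu_cores = NODES * CPUS_PER_NODE * CORES_PER_CPU    # host CPU cores
phi_cores = NODES * PHIS_PER_NODE * CORES_PER_PHI    # coprocessor cores
phi_memory_gb = NODES * PHIS_PER_NODE * PHI_MEM_GB   # on-card memory

print(f"CPU cores:  {cpu_cores}")
print(f"Phi cores:  {phi_cores}")
print(f"Phi memory: {phi_memory_gb} GB")
```

The coprocessor cores outnumber the host cores by about seven to one, which is why the DNN optimization work centers on the Phi cards.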

The students will apply a data set comprising more than 100,000 pieces of speech data to the DNN with the objective of training the machine to be able to recognize speech as efficiently and effectively as the human brain.

The DNN performance optimization is given the highest weight in the contest, 25 percent of the total score. The ASC committee recognizes DNN as “one of the most important deep learning algorithms in artificial intelligence and the most popular cutting-edge emerging field in high-performance computing.” The organizers credit the recent “Man vs. Machine Battle” between AlphaGo and Lee Sedol with generating widespread interest in deep learning.

After the performance testing is concluded, the teams will present the results of their work to a panel of expert judges. The team presentation is worth ten percent of their final score. The winning teams will be announced during an awards ceremony on Friday, April 22.
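
The two weights stated in this article (25 percent for the DNN e-Prize task and ten percent for the presentation) can be combined as follows. This is a hedged sketch: the article does not give the weights of the other tasks or say how raw results are normalized, so the code leaves the remainder unallocated and assumes, purely for illustration, that each result is scaled to a 0.0 to 1.0 range.

```python
# Weights stated in the article, in percent of the final score. The weights
# of the remaining tasks are not given, so they are left unallocated here.
STATED_WEIGHTS = {"dnn_e_prize": 25, "team_presentation": 10}


def remaining_weight(stated=STATED_WEIGHTS):
    """Percent of the final score left for tasks with unstated weights."""
    return 100 - sum(stated.values())


def partial_score(normalized_results, weights=STATED_WEIGHTS):
    """Points from the known-weight components.

    normalized_results maps each component to a value in 0.0-1.0; that
    scaling is an assumption, not the committee's published method.
    """
    return sum(weights[name] * normalized_results[name] for name in weights)


print(remaining_weight())
print(partial_score({"dnn_e_prize": 1.0, "team_presentation": 0.5}))
```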

HPC Workshop

The event will also host the 12th HPC Connection Workshop on April 21, 2016. The workshop features a roster of prominent HPC experts from around the world. Jack Dongarra, University of Tennessee professor and co-creator of the HPL benchmark, will discuss the current trends and future challenges in the HPC field. Depei Qian, chief leader of the “HPC and Core Software” Project in the China 863 Program, will share the vision of supercomputing mapped in China’s 2016-2020 Five-Year Plan. Yutong Lu, professor at National University of Defense Technology and deputy chief designer on the Tianhe-2 project, will discuss the convergence of big data and HPC on the Tianhe-2 supercomputer. The full listing of invited talks can be viewed here.