IBM scientists have broken new ground in the development of phase-change memory (PCM) technology that puts a target on competing 3D XPoint technology from Intel and Micron. IBM successfully stored 3 bits per cell in a 64k-cell array that had been pre-cycled 1 million times and exposed to temperatures up to 75°C. A paper describing the advance was presented this week at the IEEE International Memory Workshop in Paris.

Phase-change memory is an up-and-coming non-volatile memory technology: a storage-class memory that bridges the divide between expensive, high-performance volatile memory (namely DRAM) and slower persistent storage (flash or hard disk drives). According to IBM, the ability to reliably fit 3 bits per cell is what will make this technology price-competitive with flash.

With memory demands riding the tide of big data, phase-change memory has a lot to recommend it. But to be a market success, the economics must work, say the authors, and being able to store multiple bits per memory cell is essential for keeping costs under control.

Using a combination of electrical sensing techniques and signal processing technologies, the researchers have shown for the first time the viability of Triple-Level-Cell (TLC) storage in phase-change memory cells. The researchers addressed challenges related to multi-bit PCM, including drift, variability, temperature sensitivity and endurance cycling, with two innovative enabling technologies:

(a) an advanced, nonresistance cell-state metric that exhibits robustness to drift and PCM noise, and (b) an adaptive level-detection and modulation-coding framework that enables further resilience to drift, noise and temperature variation effects.
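To see why level detection needs to adapt, consider a toy model. The article does not describe IBM's actual metric or coding scheme, so everything here is an assumption made for illustration: read-out values live in an arbitrary log-resistance scale, drift is modelled with the commonly used power law (additive in the log domain), higher levels are assumed to drift more, and each codeword is assumed to contain every level exactly once so that cells can be detected by rank rather than by fixed thresholds. The names `drifted`, `detect_fixed` and `detect_by_rank` are hypothetical.

```python
import math

LEVELS = 8  # 3 bits per cell -> 2**3 distinguishable levels


def drifted(level, t, nu_max=0.1):
    """Toy read-out value of a cell programmed to `level`, read at time t.

    Power-law drift R(t) = R0 * t**nu is additive in the log domain.
    Assumption: the drift exponent grows linearly with the level.
    """
    nu = nu_max * level / (LEVELS - 1)
    return level + nu * math.log10(t)


def detect_fixed(readouts):
    """Fixed thresholds halfway between the nominal (undrifted) levels."""
    return [min(LEVELS - 1, max(0, round(x))) for x in readouts]


def detect_by_rank(readouts):
    """Threshold-free detection for codewords that use each level once:
    the cell with the k-th smallest read-out is assigned level k."""
    order = sorted(range(len(readouts)), key=lambda i: readouts[i])
    detected = [0] * len(readouts)
    for rank, cell in enumerate(order):
        detected[cell] = rank
    return detected


# One hypothetical 8-cell codeword containing every level exactly once.
stored = [3, 6, 0, 7, 1, 5, 2, 4]
readouts = [drifted(level, t=1e6) for level in stored]

fixed_result = detect_fixed(readouts)
rank_result = detect_by_rank(readouts)

print("stored:         ", stored)
print("fixed threshold:", fixed_result)
print("rank-based:     ", rank_result)
```

In this toy setup the fixed thresholds misread the cell programmed to level 6, while the rank-based detector recovers the codeword because drift shifts the levels without reordering them. Real devices also have noise and cell-to-cell variability, which this sketch deliberately leaves out.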

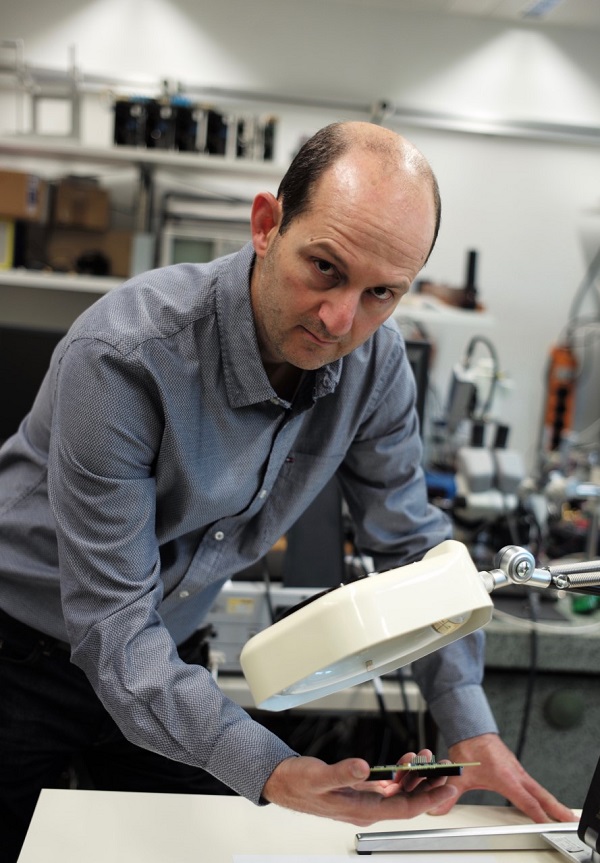

At the Paris IEEE event, Dr. Haris Pozidis, an author of the paper and the manager of non-volatile memory research at IBM Research – Zurich, explained that phase change memory is based on the properties that a chalcogenide alloy has when heat is applied. An electrical pulse is used to switch the alloy between its polycrystalline and amorphous (glassy) states. By controlling heating and cooling, one can switch reliably between these two phases, said Pozidis. The principle of storing more bits per cell is based on the fact that the alloy can also exist in intermediate phases, each a mixture of crystalline and amorphous material that gives rise to an intermediate resistance.
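The arithmetic behind "3 bits per cell" is that a cell must hold one of 2^3 = 8 distinguishable resistance levels. The sketch below shows one way the eight levels could be labelled with 3-bit patterns. The Gray-code mapping is an assumption borrowed from common multi-level memory practice, not something the article attributes to IBM, and the function names are hypothetical.

```python
BITS_PER_CELL = 3
LEVELS = 2 ** BITS_PER_CELL  # 8 resistance levels per cell


def level_to_bits(level):
    """Gray-code label for a level: neighbouring levels differ in one bit."""
    return level ^ (level >> 1)


def bits_to_level(bits):
    """Inverse of level_to_bits (standard Gray-code decoding)."""
    level = 0
    while bits:
        level ^= bits
        bits >>= 1
    return level


for level in range(LEVELS):
    print(level, format(level_to_bits(level), "03b"))
```

With this labelling, misreading a cell as the adjacent level corrupts only one of its three bits, which is the usual motivation for Gray mapping in multi-level cells.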

“In terms of basic characteristics,” said Pozidis, “phase change memory exhibits latency on the order of hundred nanoseconds to a couple microseconds, compared to hundreds of microseconds to milliseconds for flash, so about three orders of magnitude faster. In terms of write endurance, we have demonstrated one million cycles, but there are other demonstrations that have shown in excess of one million cycles and therefore this brings it to at least 1000x or even more than flash. In terms of cost, this is a crucial attribute, because this is what will pave the way for PCM acceptance in the marketplace, and there PCM is believed to be between DRAM and flash. With this technology of storing 3 bits per cell, we believe that the cost per bit of PCM will potentially approach that of flash today.”

The TLC PCM offers moderate data retention of 10 days at temperatures as high as 75°C. Beyond being persistent, IBM’s PCM technology is radiation-hardened, and unlike flash it offers random-access and write-in-place capability. It’s also very scalable, noted Pozidis, with research showing PCM properties in materials less than 10nm in diameter.

IBM has plans to integrate PCM at a cluster and datacenter level using low-latency networking and support from system software to enable new use cases for data-intensive applications. Strides were made toward this goal at the 2016 OpenPOWER Summit, when IBM scientists demonstrated the attachment of the company's second-generation phase-change memory to POWER8-based servers via the CAPI protocol. Latency was measured for 128-byte read/write accesses from/to PCM DIMMs on a POWER8 server: 99 percent of reads completed within 3.9 µs.

“Right now it’s a technology that has just seen the light of day in the form of a controlled release,” said IDC analyst Ashish Nadkarni, who was pre-briefed on the announcement, “so it’s going to take some time before it reaches the point [of flash cost parity]. Right now it’s between DRAM and NAND, and it’s more a period of time where it’s inched closer toward the cost of NAND, but as the cost of NAND itself goes down, PCM today is somewhere in the middle and it has to move faster to catch up. I suspect it will take a couple years before it’s comparable. They still need to find suppliers who can manufacture the technology; they need to be able to iron out all the kinks, and the supply chain has to fall in line.”

The IBM effort is competing with 3D XPoint, the non-volatile memory play from Intel and Micron that was announced last summer. Those partners have been less forthcoming with the specifics of the enabling technology, which is speculated to be either ReRAM- or PCM-based, but like IBM’s PCM device, 3D XPoint targets that gap between speedy RAM and high-capacity, low-cost flash. IBM hasn’t publicly announced a partner yet, but it has worked with SK Hynix in the past.

“Clearly there’s going to be a competitive landscape,” Nadkarni reflected. “Samsung might enter the game as well. Intel and Micron have the benefit of being the first born, but they haven’t started shipping yet. For IBM, the benefit they have is they can start deploying it in their own products. Intel and Micron have to find other suppliers who can use the technology in their products. It’s not clear who’s superior today but from IBM’s perspective being able to stuff 3 bits in a single cell is a big deal as is being able to control the state of the bits based on temperature.”

The experiments were carried out on a prototype PCM chip consisting of a 2 × 2 Mcell array (4 Mcells in total) with a 4-bank interleaved architecture, connected to a standard integrated circuit board. The memory array size is 2 × 1000 μm × 800 μm. The prototype chip uses 90nm CMOS baseline technology.
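For a sense of scale, the figures quoted in this article can be turned into capacities with a few lines of arithmetic. This is a back-of-the-envelope sketch: it assumes "64k" means 65,536 cells and "Mcell" means 2^20 cells, and the full-array figure is hypothetical, since the article only reports 3-bit storage on the 64k-cell array.

```python
# Figures quoted in the article
DEMO_CELLS = 64 * 1024           # assumption: "64k" = 65,536 cells
BITS_PER_CELL = 3
ARRAY_CELLS = 2 * 2 * 2**20      # assumption: "2 x 2 Mcell" with Mcell = 2**20
ARRAY_AREA_UM2 = 2 * 1000 * 800  # 2 x 1000 um x 800 um

# Capacity of the demonstrated 64k-cell array at 3 bits per cell
demo_bits = DEMO_CELLS * BITS_PER_CELL
demo_kib = demo_bits / 8 / 1024

# Array footprint in square millimetres
array_area_mm2 = ARRAY_AREA_UM2 / 1e6

# Hypothetical: capacity if every cell of the prototype array held 3 bits
full_array_mib = ARRAY_CELLS * BITS_PER_CELL / 8 / 2**20

print(f"demonstrated array: {demo_bits} bits ({demo_kib} KiB)")
print(f"array footprint:    {array_area_mm2} mm^2")
print(f"full array at TLC:  {full_array_mib} MiB (hypothetical)")
```

Under these assumptions the demonstrated array holds 24 KiB, and the whole prototype array would hold 1.5 MiB in 1.6 mm² if every cell stored 3 bits.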