Last Friday the University of Southern California (USC) announced it was establishing a quantum computing center under the Information Sciences Institute at the school’s prestigious Viterbi School of Engineering. Lockheed Martin will partner with USC, which earns the company a spot in the center’s official name: the USC Lockheed Martin Quantum Computing Center. There was no word on exactly how much money was being invested by either USC or Lockheed.

According to the USC press release: “With the construction of the multi-million dollar quantum computing center, USC now has the infrastructure in place to support future generations of quantum computer chips, positioning the school and its partners at the forefront of quantum computing research.”

Quantum computers use quantum bits, or qubits, which can represent zero and one simultaneously. In principle, this enables such systems to perform calculations that are not feasible for classical binary computers, such as integer factorization and complex decision problems.
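
To make the "both zero and one" idea concrete, here is a minimal sketch in Python of a single qubit held in an equal mix of the two values. The amplitudes and the helper name `measurement_probabilities` are illustrative assumptions, not anything taken from USC, Lockheed Martin or D-Wave; the sketch only shows how a qubit's two amplitudes translate into the odds of reading a zero or a one.

```python
import math

# Amplitudes of a single qubit in an equal superposition of 0 and 1.
# Illustrative values only; they do not describe any D-Wave hardware.
amp0 = 1 / math.sqrt(2)
amp1 = 1 / math.sqrt(2)


def measurement_probabilities(a0, a1):
    """Return (P(0), P(1)) for amplitudes a0 and a1.

    The probability of each outcome is the squared magnitude of its
    amplitude, normalized so the two probabilities sum to one.
    """
    norm = abs(a0) ** 2 + abs(a1) ** 2
    return abs(a0) ** 2 / norm, abs(a1) ** 2 / norm


p0, p1 = measurement_probabilities(amp0, amp1)
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")
```

Until it is measured, the qubit carries both amplitudes at once; a measurement returns a single zero or one with the probabilities computed here.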

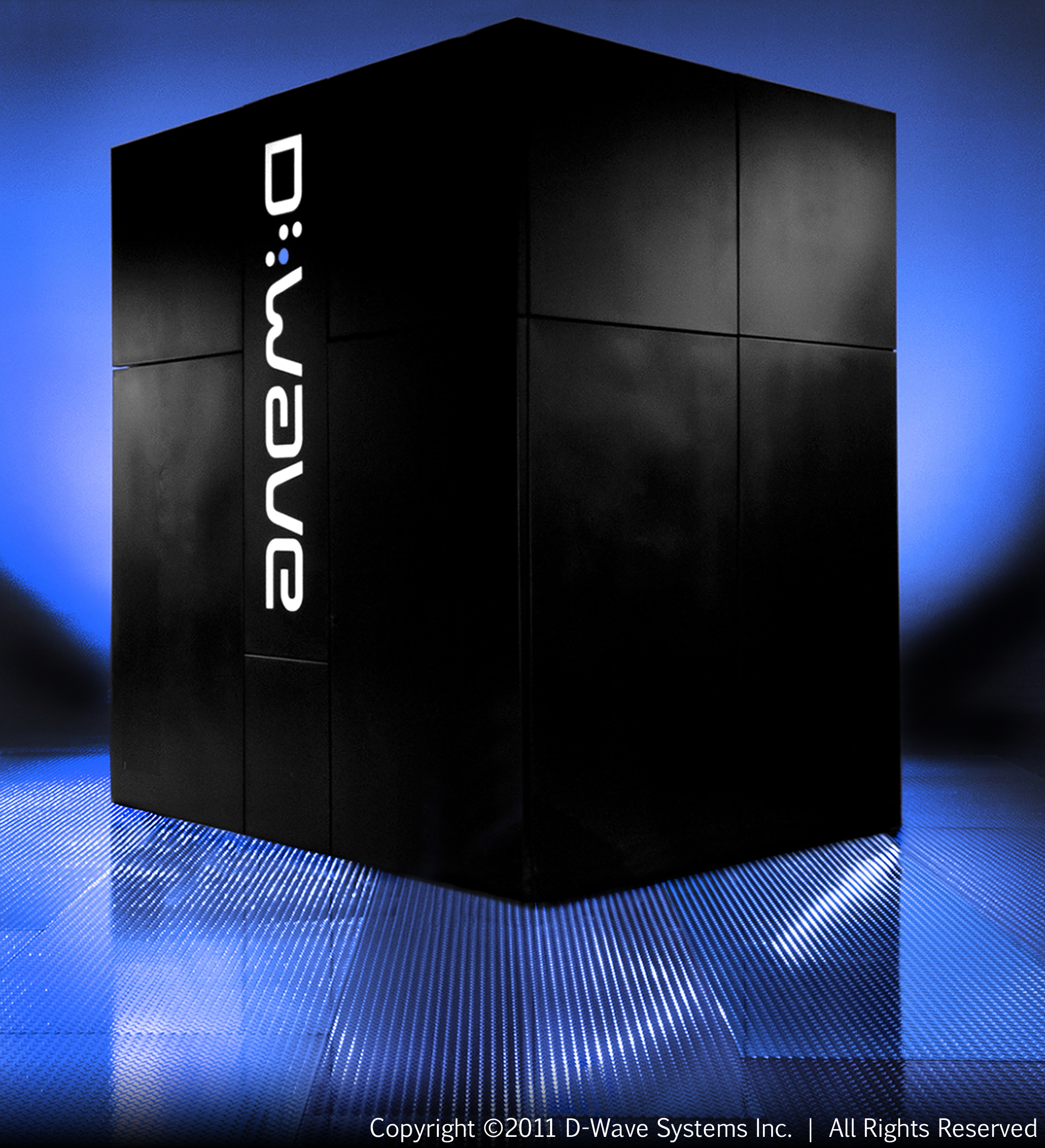

The notable aspect of this new USC facility is that it is intended to be an operational quantum computing center; that is, it will run commercial quantum computers. Since Canadian startup D-Wave Systems is the only vendor claiming to have quantum computers, the center will use the company’s superconducting quantum computer technology to power its initial system.

Back in May, D-Wave sold its first quantum computer to Lockheed Martin, which intended to use it for its “most challenging computation problems.” The system was based on the company’s latest 128-qubit chip, which needs to be cooled to near absolute zero (-459F) to operate. The Lockheed sale gave a big boost to D-Wave’s credibility, not to mention its prospects for attracting other customers.

In the past, the company has come under scrutiny, with critics claiming the technology does not deliver true quantum computing. But a May 2011 article in Nature validated at least some of D-Wave’s claims.

In any case, the USC center will give D-Wave some additional visibility, and, given the more open academic setting, a public platform to demonstrate the technology to other potential customers.