To the new age set, it may be the age of Aquarius, but to researchers everywhere, it’s the age of data. Just about every machine nowadays doubles as a data collection mechanism, and while some of that data has more immediate “low-hanging” value, the far greater portion must be managed, annotated and curated to extract its potential. This is the long end of the data tail and its stretch is vast. In an effort to wrangle this data beast, the National Science Foundation is proposing a new organization, the Institute for Empowering Long Tail Research, as part of its Scientific Software Innovation Institutes program.

Like its “missing middle” counterpart, much of America’s research community lacks the tools needed to transform ever-growing data feeds into scientific knowledge. The Computation Institute and its collaborators – the University of California, Los Angeles, the University of Arizona, the University of Washington and the University of Southern California – have received a $500,000 one-year planning grant from the NSF to examine how innovative software can optimize the research process.

Ian Foster, Director of the Computation Institute, explains that “with limited resources and expertise, even simple data discovery, collection, analysis, management, and sharing tasks are difficult for small teams.” In an official statement, he says that “this project represents the first significant effort to understand and articulate these researchers’ needs and translate them into a coherent roadmap for future research.”

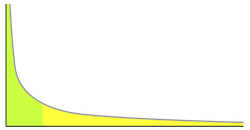

The phrase “long end of the tail” describes a statistical distribution in which a high-frequency population is followed by a low-frequency population which gradually tails off. The events at the farthest end of the tail have the lowest probability of occurrence. The concept is used to describe the retailing strategy of selling a high number of low-demand items, in which the proceeds from your less-popular items (through the sheer number of them) can create as much, or more, profit than your best-selling items.

Amazon and Netflix are well known for employing this kind of business strategy, but the long end of the tail shows up in lots of places. It’s the small and medium-sized businesses that are responsible for generating most of the nation’s wealth, and it’s the smaller labs that carry out the majority of science.

“For these small teams, the growing importance of cyberinfrastructure and its applications in discovery and innovation is as much problem as opportunity,” notes Bill Howe, Affiliate Assistant Professor in the Department of Computer Science and Engineering at the University of Washington. The long tail of science has an unfortunate consequence, adds Howe: “modern computational methods often are not exploited, much valuable data goes unshared, and too much time is consumed by routine tasks.”

The project aims to change this dynamic. Researchers from domains as varied as biology, economics and metagenomics will work together to figure out better ways of managing data. Working the long end of the science tail may be a time-consuming affair, but as in the business world, the returns can be well worth it, a sentiment shared by project partner Bryan Heidorn, Director of the School of Information Resources and Library Science at the University of Arizona:

“There may only be a few scientists worldwide that would want to see a particular boutique data set, but there are many thousands of these data sets. Access to these data sets can have a very substantial impact on science. In fact, it seems likely that transformative science is more likely to come from the tail than the head.”

The teams are already looking at cloud computing-based tools like Globus Online as a way to offload the more mundane tasks, such as monitoring data transfers, to free up time for more important endeavors. As the project makes headway, the collaborators will propose a second funding round, but there is no doubt about the institute’s potential, says Foster:

“We believe that a Scientific Software Innovation Institute focused on long tail research can have a transformative impact on US science,” he shares. “By accelerating discovery and innovation in those small laboratories where most research occurs, we can increase total research output, strengthen the powerhouses of US research, and motivate and prepare students to participate more effectively in research careers.”