Supercomputing Helps Explain the Milky Way’s Shape

September 30, 2022

If you look at the Milky Way from “above,” it almost looks like a cat’s eye: a circle of spiral arms with an oval “iris” in the middle. That iris — Read more…

Hubble Telescope, Supercomputing Enable Exoplanet Observations

May 5, 2022

The first exoplanet detection happened only 30 years ago—but now, thanks to rapid advances in observation and data processing technologies, astronomers are wo Read more…

NASA Spotlights Its Galaxy of HPC Activities

April 15, 2022

“HPC Matters!” was the big, bold title of a talk by Piyush Mehrotra, division chief of NASA’s Advanced Supercomputing (NAS) Division at its Ames Research Center, during the meeting of the HPC Advisory Council at Stanford last week. At the meeting, Mehrotra offered a glimpse into the state of supercomputing at NASA—and how its systems are being applied. Read more…

Space Weather Prediction Gets a Supercomputing Boost

June 9, 2021

Solar winds are a hot topic in the HPC world right now, with supercomputer-powered research spanning from the Princeton Plasma Physics Laboratory (which used Oak Ridge’s Titan system) to University College London (which used resources from the DiRAC HPC facility). One of the larger... Read more…

Organizations Partner to Rescue Petabytes of Data from the Arecibo Observatory

April 21, 2021

The Arecibo Observatory in Puerto Rico stood as the world’s largest single-aperture telescope for more than half a century, its grandiosity earning it a turn Read more…

Supercomputer Research May Have Solved a Planetary Mystery

February 19, 2021

For decades, astronomers have struggled to explain why planets with intermediate masses – such as Uranus and Neptune – overwhelmingly outnumber planets of o Read more…

Supercomputers Assist Galactic Archaeology Efforts

October 29, 2020

Galactic archaeologists trace back the origins of the Milky Way, examining the faintest traces of light, gases and matter to determine how, when and why various Read more…

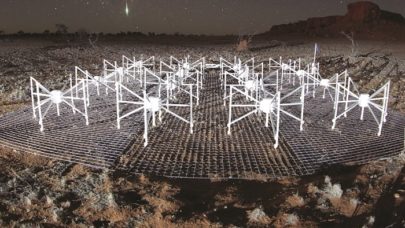

Pawsey Supercomputing Centre Spins Up ‘Garrawarla’ for Astronomical Insight

August 30, 2020

Pawsey Supercomputing Centre is welcoming a new GPU cluster, Garrawarla, a key resource for the Murchison Widefield Array (MWA) radio telescope project in Australia, a precursor to the Square Kilometre Array. Procured by CSIRO, Australia’s national science agency, from HPE in early 2020 at a cost of... Read more…

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication
