VMware Tackles Memory Tiering with Project Capitola

October 7, 2021

At its VMworld 2021 event this week, VMware introduced Project Capitola, a new company-led effort to use its vSphere software to virtualize application access t Read more…

Micron Quits 3D XPoint, Puts Factory Up for Sale

March 16, 2021

After almost 10 years of investments and flagging progress, Micron announced today (Tuesday) that it is ending its involvement in 3D XPoint, the non-volatile me Read more…

Samsung Announces HBM Tech with Built-in AI Processing

February 17, 2021

Samsung today announced what it’s calling the industry’s first high bandwidth memory (HBM) with built-in AI processing capability. The new device – Read more…

Visualization and Filesystem Use Cases Show Value of Large Memory Fat Nodes on Frontera

February 2, 2021

Frontera, the world’s largest academic supercomputer, housed at the Texas Advanced Computing Center (TACC), is big both in its number of computational nodes and in the capabilities of its large-memory “fat” compute nodes. A couple of recent use cases demonstrate how academic researchers... Read more…

HiPEAC Keynote: In-Memory Computing Steps Closer to Practical Reality

January 21, 2021

Pursuit of in-memory computing has long been an active area with recent progress showing promise. Just how in-memory computing works, how close it is to practic Read more…

Intel Touts Optane Performance, Teases Next-gen “Crow Pass”

January 5, 2021

Competition to leverage new memory and storage hardware with new or improved software to create better storage/memory schemes has steadily gathered steam during Read more…

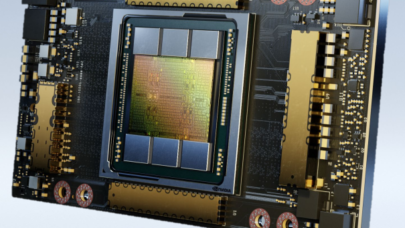

Nvidia Unveils A100 80GB Powerhouse GPU at SC20

November 16, 2020

Nvidia has doubled the memory of its previous supercomputing GPUs with its new A100 80GB GPU, which aims to drive new levels of supercomputing performance in a wide variety of uses, from AI and ML research to engineering and more. The new A100 80GB GPU comes just six months... Read more…

With Optane Gaining, Intel Exits NAND Flash

October 21, 2020

In a sign that its 3D XPoint memory technology is gaining traction, Intel Corp. is departing the NAND flash memory and storage market with the sale of its manuf Read more…

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication
