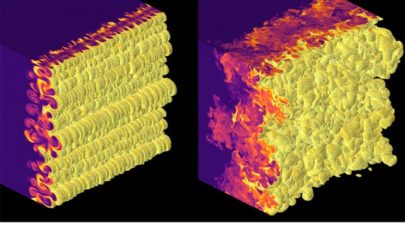

Sandia Supercomputer Model Simulates a Melting Diamond

March 11, 2022

Even the toughest materials on Earth are vulnerable under the most extreme conditions. A supercomputer simulation visualized just that by cracking, melting and Read more…

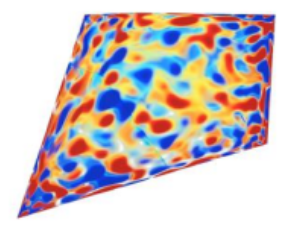

Supercomputer Research Investigates Fusion Instabilities

May 26, 2021

Inertial confinement fusion (ICF) is a speculative method of fusion energy generation that would compress a fuel pellet to generate fusion energy ju Read more…

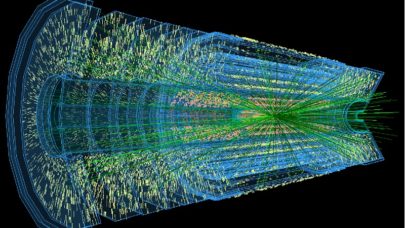

Epyc-Based Dell EMC Servers Power Physics Research at Nikhef

January 24, 2019

In another sign of growing traction for AMD Epyc processors, Nikhef, the Dutch National Institute for Subatomic Physics, has expanded its datacenter with single-so Read more…

NSF-Funded Institute Lays Groundwork for Future High-Energy Physics Discoveries

September 6, 2018

The National Science Foundation (NSF) made another big investment this week with the launch of the Institute for Research and Innovation in Software for High-E Read more…

CERN Project Sees Orders-of-Magnitude Speedup with AI Approach

August 14, 2018

An award-winning effort at CERN has demonstrated potential to significantly change how the physics-based modeling and simulation communities view machine learni Read more…

MIT-IBM Watson AI Lab Targets Algorithms, AI Physics

September 7, 2017

Investment continues to flow into artificial intelligence research, especially in key areas such as AI algorithms that promise to move the technology from speci Read more…

Alternative Supercomputing or How to Misuse a Computer

July 14, 2016

In 2008, the IBM Roadrunner supercomputer broke the petaflops barrier using the power of the heterogeneous Sony Cell Broadband Engine (BE) processor. A year prior, the Cell BE had already made its way into the consumer market as the engine inside the Sony PlayStation 3. The PS3's accelerated design, Linux capability and low price point... Read more…

COSMOS Team Achieves 100x Speedup on Cosmology Code

August 24, 2015

One of the most popular sessions at the Intel Developer Forum last week in San Francisco, and certainly one of the most exciting from an HPC perspective, broug Read more…

Whitepaper

Transforming Industrial and Automotive Manufacturing

Expansion in digital infrastructure capacity is inevitable. At the same time, awareness of climate change is rising, making sustainability a mandatory part of how organizations operate. As computing workloads such as AI and HPC continue to surge, so does energy consumption, adding to the environmental burden. IT departments have a crucial role in meeting this challenge: they can drive sustainable practices by influencing the adoption of newer technologies and processes that help mitigate the effects of climate change.

While buying more sustainable IT solutions is an option, partnering with IT solutions providers, such as Lenovo and Intel, that are committed to sustainability and to helping customers execute sustainability strategies is likely to be more impactful.

Learn how Lenovo and Intel, through their partnership, are strongly positioned to address this need with their innovations driving energy efficiency and environmental stewardship.

Sponsored by Lenovo

Whitepaper

How Direct Liquid Cooling Improves Data Center Energy Efficiency

Data centers are experiencing increasing power consumption, space constraints and cooling demands due to the unprecedented computing power required by today’s chips and servers. HVAC cooling systems consume approximately 40% of a data center’s electricity. These systems traditionally use air conditioning, air handling and fans to cool the data center facility and IT equipment, resulting in high energy consumption and high carbon emissions. Data centers are moving to direct liquid cooled (DLC) systems to improve cooling efficiency, thus lowering their PUE, operating expenses (OPEX) and carbon footprint.

This paper describes how CoolIT Systems (CoolIT) meets the need for improved energy efficiency in data centers and includes case studies that show how CoolIT’s DLC solutions improve energy efficiency, increase rack density, lower OPEX, and enable sustainability programs. CoolIT is the global market and innovation leader in scalable DLC solutions for the world’s most demanding computing environments. CoolIT’s end-to-end solutions meet the rising demand in cooling and the rising demand for energy efficiency.

Sponsored by CoolIT

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication

HPCwire is a registered trademark of Tabor Communications, Inc. Use of this site is governed by our Terms of Use and Privacy Policy.

Reproduction in whole or in part in any form or medium without express written permission of Tabor Communications, Inc. is prohibited.