Solving Heterogeneous Programming Challenges with Python, Today

June 30, 2022

You may be surprised how ready Python is for heterogeneous programming, and how easy it is to use today. Our first three articles about heterogeneous programming focused primarily on C++ as we pondered how to enable programming in the face of a coming explosion of hardware diversity. For a refresher on what motivates this question...

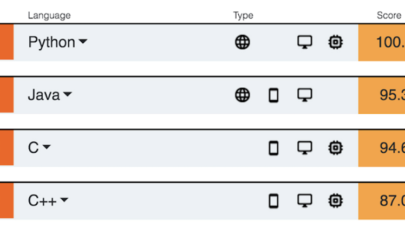

Python Again Tops IEEE Spectrum’s Programming Language List

July 22, 2020

What’s your go-to programming language? As judged by IEEE Spectrum, it is (again) Python, and comfortably so, according to an article posted today. C++ finished…

Google’s ML Compiler Initiative Advances

September 12, 2019

Machine learning models running on everything from cloud platforms to mobile phones are posing new challenges for developers faced with growing tool complexity.

When Dense Matrix Representations Beat Sparse

September 9, 2019

In our world filled with unintended consequences, it turns out that saving memory space to help deal with GPU limitations, knowing it introduces performance pen…

MIT Aims to Ease AI Programming

July 2, 2019

A new AI programming language seeks to ease the process of writing inference algorithms and other predictive models without the hassle of grinding out complicated equations and code. Among the goals of the probabilistic programming system dubbed “Gen” is making it easier for coding novices to write models and algorithms for broader AI applications such as computer vision and robotics.

TIOBE Index: Python Reaches Another All-Time High

June 17, 2019

TIOBE has released its June 2019 Index, and Python has reached another all-time high. TIOBE, which stands for “the importance of being earnest,” was founded in 2000. Its Programming Community Index, which is updated monthly...

ISC19 Panel: When’s enough, enough? With so many parallel programming technologies, is it time to focus on consolidating them?

June 10, 2019

ISC is approaching fast, and on the Wednesday we will be holding a panel asking whether it is time to focus more on the consolidation and interoperabili…

Top Ten Ways AI Affects HPC in 2019

March 26, 2019

AI workloads are becoming ubiquitous, including running on the world’s fastest computers, thereby changing what we call HPC forever. As every organization…

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication
