Blue Waters Supercomputer Helps Tackle Pandemic Flu Simulations

February 26, 2020

While not the novel coronavirus that is now sweeping across the world, the 2009 H1N1 flu pandemic (pH1N1) infected up to 21 percent of the global population and…

Irish Centre for High-End Computing Completes Major Climate Simulations

January 28, 2020

The new year marked a somber milestone for the planet: 2019 turned out to be the second-hottest year on record (inched out only by 2016, which was hotter by 0.0…

What’s New in HPC Research: Sky Surveys, HIV Research, HPC ‘First Contact’ & More

November 27, 2019

In this bimonthly feature, HPCwire highlights newly published research in the high-performance computing community and related domains. From parallel programming to exascale to quantum computing, the details are here.

Researchers Visualize the Largest Turbulence Simulation Ever

October 30, 2019

Near Munich, at the Leibniz Supercomputing Centre, magnificent work was afoot. The researchers (a team from Leibniz, Intel and the Australian National University…

Optimizing Offshore Wind Farms with Supercomputer Simulations

October 9, 2019

Offshore wind farms offer a number of benefits; many of the areas with the strongest winds are located offshore, and siting wind farms offshore ameliorates many of the land use concerns associated with onshore wind farms. Some estimates say that, if leveraged, offshore wind power...

Supercomputers Generate Universes to Illuminate Galactic Formation

August 20, 2019

With advanced imaging and satellite technologies, it’s easier than ever to see a galaxy – but understanding how galaxies form (a process that can take billions…

Stampede2 ‘Shocks’ with New Shock Turbulence Insights

August 19, 2019

Shockwaves play roles in everything from high-speed aircraft to supernovae – and now, supercomputer-powered research from the Texas A&M University and the…

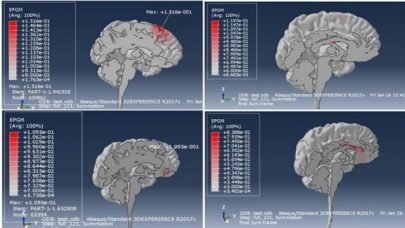

Dassault, NIMHANS, and UberCloud Foster Innovative Schizophrenia Treatment

March 29, 2018

In a series of challenging high-performance computing applications in the life sciences, UberCloud’s HPC containers have recently been packaged with several scientific workflows and application data to simulate complex phenomena in the human heart and brain.


Whitepaper

Transforming Industrial and Automotive Manufacturing

In this era, expansion of digital infrastructure capacity is inevitable. At the same time, awareness of climate change is rising, making sustainability a mandatory part of how organizations operate. As computing workloads such as AI and HPC continue to surge, so does energy consumption, with a corresponding environmental cost. IT departments have a crucial role in meeting this challenge: they can drive sustainable practices by influencing the adoption of newer technologies and processes that help mitigate the effects of climate change.

While buying more sustainable IT solutions is one option, partnering with IT solutions providers such as Lenovo and Intel, which are committed to sustainability and to helping customers execute sustainability strategies, is likely to be more impactful.

Learn how Lenovo and Intel, through their partnership, are strongly positioned to address this need with innovations that drive energy efficiency and environmental stewardship.


Sponsored by Lenovo

Whitepaper

How Direct Liquid Cooling Improves Data Center Energy Efficiency

Data centers are experiencing rising power consumption, space constraints and cooling demands because of the unprecedented computing power required by today’s chips and servers. HVAC cooling systems consume approximately 40% of a data center’s electricity. These systems traditionally use air conditioning, air handling and fans to cool the facility and its IT equipment, resulting in high energy consumption and high carbon emissions. Data centers are therefore moving to direct liquid cooling (DLC) systems to improve cooling efficiency, thus lowering their power usage effectiveness (PUE), operating expenses (OPEX) and carbon footprint.

This paper describes how CoolIT Systems (CoolIT) meets the need for improved energy efficiency in data centers and includes case studies that show how CoolIT’s DLC solutions improve energy efficiency, increase rack density, lower OPEX, and enable sustainability programs. CoolIT is the global market and innovation leader in scalable DLC solutions for the world’s most demanding computing environments. CoolIT’s end-to-end solutions meet the rising demand for both cooling capacity and energy efficiency.


Sponsored by CoolIT


© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication

HPCwire is a registered trademark of Tabor Communications, Inc. Use of this site is governed by our Terms of Use and Privacy Policy.

Reproduction in whole or in part in any form or medium without express written permission of Tabor Communications, Inc. is prohibited.