At PEARC22: Moving Beyond Exascale, Harnessing Artistic Expertise

July 15, 2022

The direction that exascale supercomputing will need to follow and the continuing value of visual and other non-computational experts in computer visualizations were the focus of the final two plenary sessions at the PEARC22 conference in Boston on July 13. Jack Dongarra, director of research staff and professor at the Oak Ridge National Laboratory and the University of Tennessee, Knoxville... Read more…

Intel Speeds NAMD by 1.8x: Saves Xeon Processor Users Millions of Compute Hours

August 12, 2020

Potentially saving datacenters millions of CPU node hours, Intel and the University of Illinois at Urbana–Champaign (UIUC) have collaborated to develop AVX-512 optimizations for the NAMD scalable molecular dynamics code. These optimizations will be incorporated into release 2.15 with patches available for earlier versions. Read more…

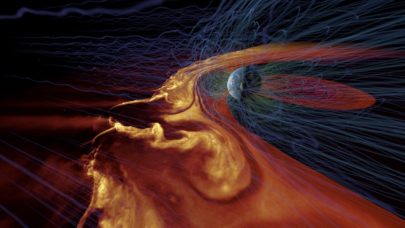

SuperMUC-NG Enables Innovative Science with ‘Best Scientific Visualization’

May 7, 2020

Ranked the 9th fastest supercomputer in the world as of the November 2019 Top500 list, SuperMUC-NG located at the Leibniz Supercomputing Centre (LRZ) is powerin Read more…

NASA’s Pleiades Simulates Launch Abort Scenarios

March 15, 2019

NASA is using flow simulations running on its Pleiades supercomputer to help design the agency’s next manned spacecraft, Orion. Crew safety is paramount, s Read more…

NCSA Industry Conference Recap – Part 1

October 31, 2018

Industry Program Director Brendan McGinty welcomed guests to the annual National Center for Supercomputing Applications (NCSA) Industry Conference, October 9-11 in Urbana, Illinois. One hundred eighty from 40 organizations registered for the invitation-only, two-day event. The program opened with a keynote address by Steven J. Demuth, Chief Technology Officer at Mayo Clinic. Read more…

Preparing for the Arrival of Aurora with CPU-based Interactive Visualization

October 30, 2018

In preparation for the arrival of Aurora, slated to be the first U.S. exascale supercomputer, Argonne National Laboratory is actively working to make techniques Read more…

University of Stuttgart Advances MegaMol Cross-Platform Visualization Framework

April 16, 2018

The MegaMol team at the Visualization Research Center of the University of Stuttgart (VISUS) works each day to empower discoveries in fields like biochemistry, Read more…

Intel Debuts Myriad X Vision Processing Unit for Neural Net Inferencing

August 28, 2017

Intel today introduced the Movidius Myriad X Vision Processing Unit (VPU) which Intel is calling the first vision processing system-on-a-chip (SoC) with a dedic Read more…

Whitepaper

Transforming Industrial and Automotive Manufacturing

Expansion of digital infrastructure capacity is inevitable. At the same time, awareness of climate change is rising, making sustainability a mandatory part of how organizations operate. As computing workloads such as AI and HPC continue to surge, so does energy consumption, which raises environmental concerns. IT departments have a crucial role in meeting this challenge: they can drive sustainable practices by influencing the adoption of newer technologies and processes that help mitigate the effects of climate change.

While buying more sustainable IT solutions is an option, partnering with IT solutions providers such as Lenovo and Intel, who are committed to sustainability and to helping customers execute sustainability strategies, is likely to be more impactful.

Learn how Lenovo and Intel, through their partnership, are strongly positioned to address this need with their innovations driving energy efficiency and environmental stewardship.

Sponsored by Lenovo

Whitepaper

How Direct Liquid Cooling Improves Data Center Energy Efficiency

Data centers are experiencing increasing power consumption, space constraints and cooling demands due to the unprecedented computing power required by today’s chips and servers. HVAC cooling systems consume approximately 40% of a data center’s electricity. These systems traditionally use air conditioning, air handling and fans to cool the data center facility and IT equipment, resulting in high energy consumption and high carbon emissions. Data centers are moving to direct liquid cooled (DLC) systems to improve cooling efficiency, thus lowering their PUE, operating expenses (OPEX) and carbon footprint.

This paper describes how CoolIT Systems (CoolIT) meets the need for improved energy efficiency in data centers and includes case studies that show how CoolIT’s DLC solutions improve energy efficiency, increase rack density, lower OPEX, and enable sustainability programs. CoolIT is the global market and innovation leader in scalable DLC solutions for the world’s most demanding computing environments. CoolIT’s end-to-end solutions meet the rising demand in cooling and the rising demand for energy efficiency.

Sponsored by CoolIT

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication

HPCwire is a registered trademark of Tabor Communications, Inc. Use of this site is governed by our Terms of Use and Privacy Policy.

Reproduction in whole or in part in any form or medium without express written permission of Tabor Communications, Inc. is prohibited.