Technical Director, Visualization Sciences Group,

Mercury Computer Systems, Inc.

3D visualization has been the key to increased success and efficiency in many areas of exploration and production (E&P). In this industry, visualization plays a critical role in gaining insight from data. But often when we discuss visualization, we are talking only about the actual rendering of images on the screen. In fact, the visualization challenge for E&P is characterized by computationally expensive algorithms, a very large number of diverse data sets, and a need for greater interactivity and collaboration. To meet this challenge, we must make data management, computation and rendering work together smoothly and efficiently. In this way, we will continue to deliver on 3D visualization’s promise of enabling better decisions in less time.

In the past, the E&P industry has been characterized by its use of big machines for both computation and rendering. As the economics of the “PC” architecture overtook big machines, it seemed that the capabilities of a single machine would never be sufficient. The industry turned to clusters of PCs as a solution. Clusters have been widely adopted for purely computational tasks, but only to a limited extent for visualization. Clusters have significant value for visualization, but also introduce significant complexity and cost in administration compared to single machines. Today, with advances in data management, computing and rendering, the “single machine” is once again a viable platform for visualization of E&P data.

Data management

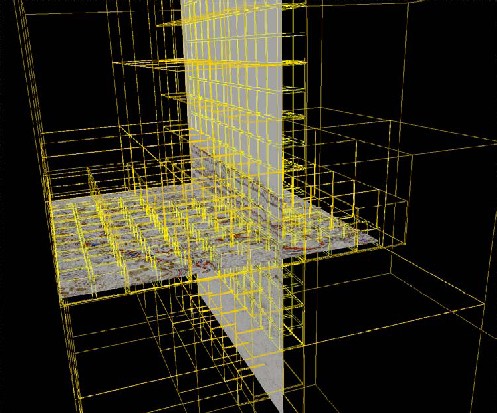

Seismic volumes are typically tens of gigabytes today, and hundreds of gigabytes are not uncommon. Sixty-four-bit operating systems have enabled much larger system memory, but both system memory and texture memory on the graphics processing unit (GPU) remain scarce resources compared to the size of the data sets. An effective solution using hierarchical multi-resolution bricking is now available in visualization toolkits (middleware). In this solution, a pre-processing step subdivides the volume data into “bricks” and computes multiple resolution levels. The full-resolution data is the lowest level of the hierarchy, and each higher-level brick represents multiple bricks at the level below. With data in this form, the middleware can initially load the lower-resolution data, then automatically refine the image as higher-resolution data is loaded in the background. This enables interactive navigation of the largest volumes even on relatively low-end machines. The user does not have to wait for all the data to be loaded, and only the data actually needed is loaded. Multiple users can access the same data simultaneously because each uses only local system memory to load the data. The multi-resolution bricking technique is already used in many E&P applications. VolumeViz from Mercury Computer Systems is one example of this visualization middleware. This same technique can be extended to other large data sets such as horizon surfaces and reservoir models.
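
As a rough illustration of the hierarchy described above, the following Python sketch counts the bricks at each resolution level and lists the levels in coarse-to-fine loading order. It is not the VolumeViz implementation or file format. The function names, the 64-sample brick size, and the assumption that each level halves the sample count along every axis (so one brick covers up to eight bricks below it) are all hypothetical choices made for the example.

```python
import math

def brick_pyramid(dims, brick=64):
    """Describe a multi-resolution brick hierarchy for a volume.

    Level 0 is full resolution. Assumption: each higher level halves the
    number of samples along every axis, so a brick at one level covers up
    to 2 x 2 x 2 bricks at the level below.
    """
    levels = []
    size = tuple(dims)
    while True:
        # Number of bricks needed along each axis at this resolution.
        grid = tuple(math.ceil(n / brick) for n in size)
        levels.append({
            "level": len(levels),
            "dims": size,
            "bricks": grid,
            "count": grid[0] * grid[1] * grid[2],
        })
        if grid == (1, 1, 1):
            break  # the whole volume now fits in a single brick
        size = tuple(max(1, math.ceil(n / 2)) for n in size)
    return levels

def load_order(levels):
    """Coarsest level first, so an image can appear early and then refine."""
    return [lv["level"] for lv in reversed(levels)]

if __name__ == "__main__":
    for lv in brick_pyramid((256, 256, 256), brick=64):
        print(lv)
```

Under these assumptions the coarser levels add only a small fraction to the storage of the full-resolution bricks, which is one reason a pre-computed hierarchy is affordable even for very large volumes.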

Computing

For many years, applications enjoyed an automatic increase in performance as CPU vendors competed to increase the clock speed in each new generation of chips. Physical limitations such as power consumption and heat dissipation have largely ended this era. The CPU vendors are now competing to increase the number of “cores” in each new generation of chips. Dual-core chips are already common, with quad-core chips and beyond coming soon. To take advantage of this new performance curve, software developers will need to embrace multi-threading.

At the same time, alternative chip architectures have become available that provide much higher floating-point performance than conventional CPU chips, but require even more unconventional programming models. The GPU chip on every 3D graphics board is programmable and has very high performance for some algorithms. Its biggest advantage is the option of combining computing and rendering on the same processor. The Cell BE processor is a next-generation heterogeneous multi-core chip now available on a PCI-Express accelerator board from Mercury Computer Systems. All of these programming models, whether multi-threading or stream computing, present tremendous challenges for software developers.

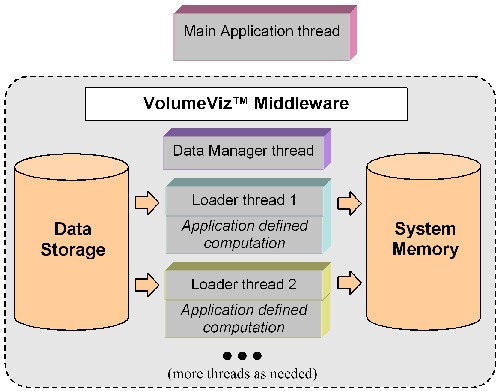

Middleware libraries can solve part of this problem. For example, the VolumeViz toolkit automatically creates a separate thread to manage data loading and multiple separate threads to do the actual physical I/O. In addition, VolumeViz enables the application to supply computation modules that are executed in parallel by the data threads. This capability enables the application to take advantage of multiple cores without changing the application code. VolumeViz also provides a framework for managing computing and rendering on the GPU chip. Application-defined GPU programs are downloaded and executed by VolumeViz in cooperation with its predefined GPU programs for rendering effects. Middleware libraries also provide building-block algorithms, such as fast Fourier transform (FFT) and convolution, that are already highly optimized for new architectures.
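
The pattern of an application-supplied computation module running on the loader threads can be sketched in a few lines of Python. This is only an illustration of the structure, not the VolumeViz API. `load_brick`, `rms_amplitude` and `load_and_compute` are hypothetical names, and the "I/O" here simply generates synthetic samples.

```python
from concurrent.futures import ThreadPoolExecutor

def load_brick(brick_id):
    """Stand-in for physical I/O: returns synthetic samples for one brick."""
    return [float(brick_id * 10 + i) for i in range(8)]

def rms_amplitude(samples):
    """Example computation module: root-mean-square amplitude of a brick."""
    return (sum(s * s for s in samples) / len(samples)) ** 0.5

def load_and_compute(brick_ids, compute, workers=4):
    """Load bricks on worker threads and run `compute` on each brick
    in the same thread that loaded it."""
    def task(brick_id):
        return brick_id, compute(load_brick(brick_id))

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(task, brick_ids))

if __name__ == "__main__":
    print(load_and_compute(range(4), rms_amplitude))
```

The application only supplies the `compute` callable; thread creation and scheduling stay inside the library-side function. Note that in standard CPython, pure-Python computation on threads is serialized by the interpreter lock, so this sketch shows the division of responsibility rather than a real multi-core speedup.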

Rendering

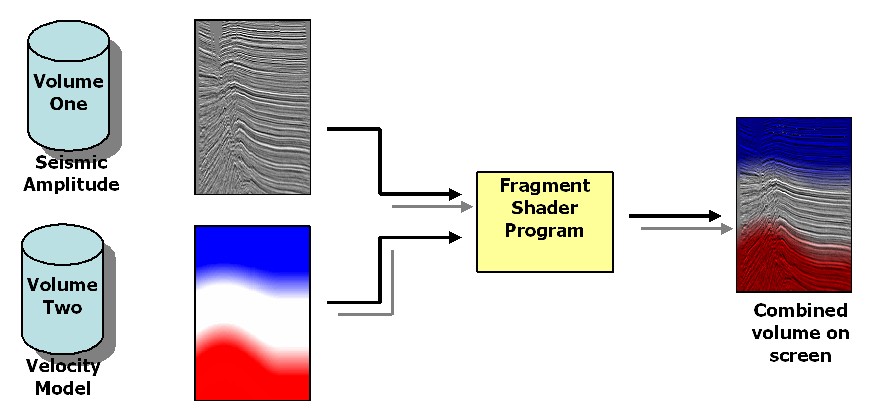

Rendering of 3D images is naturally a parallel-computing task. Each new generation of GPU chip has more “pipes” (parallel computing units), providing an automatic increase in rendering performance. Powerful GPUs are available even in laptop machines, making state-of-the-art rendering accessible to almost all users. The ability to program the GPU results in higher quality rendering, new rendering techniques, and new opportunities for interaction by combining computing and rendering on the GPU. Middleware libraries, such as VolumeViz, implement many of these techniques and provide a convenient framework for applications to implement their own techniques. Some relatively new rendering techniques include bump mapping, dynamic lighting, arbitrarily shaped probes (mapping seismic data onto arbitrary geometry), and co-blending of multiple data sets. Combining computing and rendering in the GPU enables techniques including volume clipping (e.g., against horizon surfaces), volume masking (using values of one volume to mask another volume), and volume warping (e.g., horizon flattening).
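
To make two of these techniques concrete, here is a minimal CPU-side sketch in Python of volume masking and horizon flattening. In practice this logic would run per sample in a GPU program; the function names, the threshold rule, the fill value, and the choice of the deepest horizon pick as the flattening datum are all assumptions made for illustration.

```python
def mask_volume(data, mask, threshold, fill=0.0):
    """Volume masking: keep a data sample only where the corresponding
    sample of a second volume reaches the threshold."""
    return [d if m >= threshold else fill for d, m in zip(data, mask)]

def flatten_on_horizon(traces, horizon, fill=0.0):
    """Horizon flattening: shift each vertical trace so that its horizon
    sample lands at a common index (the deepest horizon pick)."""
    datum = max(horizon)
    flattened = []
    for trace, pick in zip(traces, horizon):
        shift = datum - pick  # samples to move this trace downward
        n = len(trace)
        flattened.append(
            [trace[i - shift] if i - shift >= 0 else fill for i in range(n)]
        )
    return flattened

if __name__ == "__main__":
    amplitude = [1.0, 2.0, 3.0]
    coherence = [0.2, 0.9, 0.5]
    print(mask_volume(amplitude, coherence, threshold=0.5))

    traces = [[0, 1, 2, 3], [10, 11, 12, 13]]
    horizon = [1, 2]  # sample index of the horizon on each trace
    print(flatten_on_horizon(traces, horizon))
```

After flattening, the sample that sat on the horizon in every trace appears at the same index, so the horizon displays as a flat slice through the warped volume.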

Summary

3D visualization will continue to be a critical part of addressing today’s challenges in exploration and production. To be effective and successful, 3D visualization must integrate solutions for data management, computing and rendering. Today, visualizing large E&P data sets no longer requires a supercomputer or even a super cluster. Advances in both hardware and software are coming together to enable larger data sets, more automated analysis, and more effective presentation of the data on single workstations. Taking advantage of these advances will be challenging for software developers and will require some re-thinking of application architectures and user interfaces. However, innovative “middleware” solutions can solve some of these problems and provide a framework for a complete solution.

---

Michael M. Heck is technical director of the Visualization Sciences Group (VSG) at Mercury Computer Systems, Inc., where he evangelizes the use of 3D visualization. He works with customers to understand their applications and apply visualization technology to meet current requirements, and guides the development of visualization technology to meet future requirements. Mr. Heck has been involved in implementing, managing, teaching and applying 3D visualization software for more than 20 years. During that time he has been a speaker at conferences including SEG and the World Oil Visualization Showcase, has been an invited instructor for the SIGGRAPH conference courses, and has authored technical articles on visualization for publications including Communications of the ACM and the American Oil & Gas Reporter.