The HPC community has been dabbling with Field Programmable Gate Arrays (FPGAs) for several years now, but the technology has never reached escape velocity. The attraction of reconfigurable computing has kept the supercomputing crowd dreaming, but clunky and non-standard programming environments, lack of FPGA chip real estate for 64-bit floating point operations, and I/O bandwidth limitations have inhibited their use in mainstream HPC. The common refrain of “FPGAs are the future of supercomputing and always will be” seemed destined to be a permanent joke.

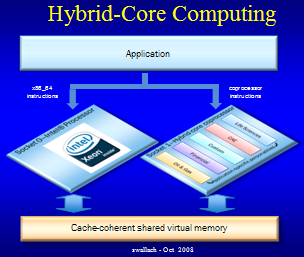

But at SC08 this week, startup Convey Computer Corp. launched a new server and software stack that aims to tame FPGAs and deliver reconfigurable computing to everyday HPC users. In a nutshell, the company has developed a “hybrid core” server, the HC-1, which wraps FPGAs into a reconfigurable coprocessor that runs alongside a standard multicore x86 CPU. The CPU and coprocessor can be programmed with standard C/C++ and Fortran. Essentially, you can take legacy code, run it through the Convey compiler, and out pops an executable that runs an order of magnitude faster on a Convey box than it would on an x86 system.

Convey is the brainchild of Steve Wallach, co-founder and CTO of Convex Computer, a company that developed vector supercomputers back in the '80s and '90s. (In case you were wondering, yes, Convey = Convex+1.) Since programming vector processors was a pain for users, Convex developed automatic vectorizing compilers to enable standard codes to take advantage of their machines. In 1995, the company was bought out by HP and eventually Wallach hopped on the consulting circuit, selling his computing expertise to the government and IT venture capitalists.

His idea for hybrid core computing was born out of conversations with his contemporaries at Intel and Xilinx. Wallach convinced them that he would be able to take their commodity processors and create an innovative and commercially-viable platform for HPC users. Both Intel Capital and Xilinx became investors in Convey, along with CenterPoint Ventures, InterWest Partners and Rho Ventures. The initial funding amounted to $15.1 million.

Wallach, now the chief scientist at Convey, tapped some of the Convex alumni and assembled a 28-person team to get the new company off the ground. The Convey engineers resurrected the Convex auto-vectorization model with a new twist: using FPGAs as reconfigurable acceleration engines. But the idea of insulating the developer from the hardware is the same. “Our view is that you should be able to program in standard Fortran, C and C++,” says Wallach. So no extra language keywords, extensions, or special APIs are required to extract the extra performance from the FPGA-based coprocessor. According to Wallach, “you should put the burden on the compiler to do all the heavy lifting.”

This is a departure from most other HPC accelerator-based systems, where proprietary language or runtime API extensions are needed to tap the non-CPU hardware. Environments like CUDA (for GPUs) or ImpulseC (for FPGAs) rely on extended forms of C, which means legacy code must be ported before it can be accelerated. It also means newly developed code is tied to a particular architecture or must rely on a configuration management system to maintain separate source trees. All of that translates into lost human productivity.

On the hardware side, Convey’s principal architectural innovation is tightly coupling the x86 CPU with the reconfigurable coprocessor. To accomplish this, the Convey engineers designed a server with a CPU and multi-FPGA coprocessor that share the same view of virtual memory. The x86 is used mostly for scalar logic and the coprocessor is used for vector acceleration, while taking advantage of the FPGA’s ability to be tuned to workload-specific instruction streams. Since the coprocessor implements virtual memory and cache coherence, no data has to be shuffled back and forth between the CPU and externally connected FPGAs.

The coprocessor is reconfigured for different applications by loading the FPGAs with a “personality,” which describes an instruction set that has been optimized for a specific workload. For example, there could be different personalities for bioinformatics, CFD, financial analytics, and seismic processing. If you had a financial analytics calculation where you wanted to see the results with different interest rates or with random numbers plugged in, your application would require double-precision function units and instructions to facilitate such operations as random number generation, exponentiation, square roots and logarithms. Other applications like seismic processing require single-precision, complex floating point instructions.

At compile-time, the developer selects a command-line switch to specify the appropriate personality for the application source. Based on the switch, the compiler extracts the parallelism from the source code by generating the personality’s extended instructions intermixed with x86 instructions, as appropriate. Prior to execution, the OS configures the FPGAs by loading the personality image corresponding to the extended instruction set.

At any one time, the coprocessor executes a single personality. In most cases, this will be sufficient for an entire application. But the FPGAs can be dynamically reconfigured during execution if an application embodies multiple types of workloads. A personality switch takes on the order of hundreds of milliseconds. The idea is that unless your application has a umm… “personality disorder,” switching occurs relatively infrequently during execution — basically during program startup or application phase changes.
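To get a feel for why infrequent switching matters, here is a back-of-the-envelope sketch that estimates how much of a run is lost to personality loads. The 0.3-second switch time is an assumed value inside the "hundreds of milliseconds" range quoted above, and the function name and phase durations are hypothetical illustrations, not Convey figures.

```python
def switch_overhead_fraction(phase_seconds, switch_seconds=0.3):
    """Return the fraction of wall time spent loading personalities.

    phase_seconds  -- compute time of each application phase, in seconds
    switch_seconds -- assumed cost of one personality load (hypothetical 0.3 s)

    One personality load is assumed before every phase.
    """
    if not phase_seconds:
        raise ValueError("at least one phase is required")
    compute = sum(phase_seconds)
    switching = switch_seconds * len(phase_seconds)
    return switching / (compute + switching)


# Two long phases: switching is lost in the noise.
few_long_phases = switch_overhead_fraction([60.0, 60.0])

# A thousand 10-millisecond phases: switching dominates the run.
many_short_phases = switch_overhead_fraction([0.01] * 1000)
```

Under these assumptions the two-phase run loses well under 1 percent of its time to reconfiguration, while the rapidly alternating run spends most of its time reloading FPGAs, which is the "personality disorder" case.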

There is also the ability for developers to build “procedural” personalities, which implement entire routines that are invoked like procedures or functions. To do this, a programmer will need to employ the Personality Development Kit (insert your own geek joke here) supplied by Convey.

The base hardware is a 2U rack-mountable server containing two sockets — one for an Intel CPU and one for the coprocessor. The coprocessor contains a host interface, three or four FPGA (Xilinx Virtex-5) chips, and a memory controller. The host interface encapsulates the communication with the CPU, instruction fetching and decoding, plus a common set of scalar op-codes for the coprocessor. The first version of the system will employ Intel’s front-side bus to talk to the coprocessor. But with Nehalem processors just around the corner, Convey already has plans in place for a QuickPath Interconnect-based system.

The memory controller manages a high bandwidth memory subsystem, which is incorporated into the CPU’s virtual memory space. It uses 16 DDR2 memory channels to deliver an aggregate bandwidth of 80 GB/sec. That’s a lot faster than what is currently available on an Intel Harpertown system and is even faster than what will be available on next year’s Nehalem chips. At these speeds, the controller is able to transfer individual 64-bit words (as opposed to just entire cache lines), which is how a vector processor would like to be fed.
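The arithmetic behind those figures is easy to check. The sketch below uses only the numbers quoted above (16 channels, 80 GB/sec aggregate, 64-bit words) and assumes 1 GB means 10^9 bytes and that bandwidth is spread evenly across channels; the variable names are illustrative.

```python
AGGREGATE_GB_PER_S = 80   # aggregate bandwidth quoted in the article
CHANNELS = 16             # DDR2 memory channels quoted in the article
WORD_BYTES = 8            # one 64-bit word
BYTES_PER_GB = 1e9        # assumption: decimal gigabytes

# Even split across channels (an assumption, not a Convey specification).
per_channel_gb_per_s = AGGREGATE_GB_PER_S / CHANNELS

# Peak rate at which individual 64-bit words could be delivered.
words_per_s = AGGREGATE_GB_PER_S * BYTES_PER_GB / WORD_BYTES

print(per_channel_gb_per_s)  # 5.0 GB/sec per channel
print(words_per_s)           # 10000000000.0, i.e. 10 billion words/sec
```

These are peak numbers; sustained rates on real access patterns would depend on details the article does not give.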

Innovation doesn’t come cheap. An HC-1 server retails for around $32,000. But the pitch is that since an average HPC app can be accelerated 10x on this platform, each HC-1 is equivalent to 10 vanilla x86 boxes. If true, that would translate to significant savings in system acquisition costs, as well as power and cooling.
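One way to read that pitch is as a break-even price: how much would a conventional x86 server have to cost before a rack of them matched the HC-1 on acquisition cost alone? The sketch below takes the article's $32,000 and 10x figures at face value; the function name is hypothetical, and power, cooling, and software costs are deliberately left out.

```python
def break_even_box_price(hc1_price, speedup):
    """Price per x86 server at which `speedup` servers cost the same as one HC-1.

    Assumes one HC-1 does the work of exactly `speedup` x86 servers and
    considers acquisition cost only.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return hc1_price / speedup


# Convey's claimed average acceleration of 10x.
break_even_at_10x = break_even_box_price(32_000, 10)
print(break_even_at_10x)  # 3200.0
```

In other words, on purchase price alone the HC-1 comes out ahead only if the x86 servers it displaces cost more than about $3,200 each, and only for codes that actually reach the claimed speedup.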

UCSD is an early customer, using the HC-1 to accelerate a proteomics application, called InsPecT. Scientists there expect to achieve a 16x speedup with the new system. Pavel Pevzner, director of UCSD’s Center for Computational Mass Spectrometry, says a single rack of HC-1 servers can replace eight racks of conventional servers at the center.

How well the Convey platform performs over a range of HPC codes remains to be seen. And introducing a new company with a new architecture certainly carries some risk, especially in this economy. But Wallach thinks he’s got a winner and seems undeterred by launching into a headwind. “The way you make money and be successful is to be a contrarian,” he says.

Steve Wallach will be honored at SC08 with IEEE’s Seymour Cray Award. For more about Wallach, see our in-depth interview with him in today’s issue.