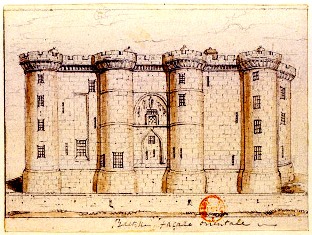

Yes, that is the Bastille. And yes, it actually is a relevant image to accompany this discussion — at least by way of analogy. It was tempting to paste the logos of a few select software providers around the perimeter but that might have been overkill.

News about big banks tentatively exploring cloud offerings has been circulating for a couple of years now, but recent headlines show these movements gaining significant traction. While at first the steps to the cloud were relatively small and based on CRM and select SaaS projects, the last couple of months have produced far more newsworthy events for big banking in the cloud. If the installed software at the offices of these banks had the ability to shake or fear for its life, it would probably be doing so.

Bank of America, Commonwealth Bank of Australia and Deutsche Bank have announced that they are forming an alliance to collaborate on the common goal of cutting their general IT infrastructure costs by adopting cloud strategies. This alliance, as reported in the Australian Financial Review this week (subscription required), is not about coming together as intercontinental supercompetitors to research or merely discuss cloud options. This is about proactive advancement toward a solution that the three banks and any others who care to join the club (well, maybe not just any, apologies, First Federal of Scranton) can make use of to replace their current expensive infrastructure.

As the alliance seeks new international banking recruits it is becoming clear that the days of installed software from giants including Microsoft, Oracle, and IBM might be grinding to an extended halt. And on second thought, why is that “might be” in there? After all, we’re talking about banks that have just emerged from the weathering of a storm that was profoundly stronger than anyone could have expected just ten short years ago — back when these tech firms were reveling in an unprecedented sort of glory.

Michael Harte, Commonwealth Bank of Australia’s CIO, stated in an AFR report, “We are not dependent on large software providers and therefore we don’t have to pay those terrible fees that deliver no particular value. We are going to rely much less on packaged software and we are going to be able to buy services in a much more commoditized manner.”

The present-tense power behind Harte’s words cannot be ignored. Close your eyes and repeat them to yourself: do you see the pitchfork-wielding men in suits lining up to storm a certain Seattle-based software giant’s offices before moving on to others of approximate size? It’s not the pitchforks old IT needs to worry about; it’s the sheer number of suits.

The Community Cloud Model in a Competitive Industry

This approach to collaboration to adopt a shared cloud infrastructure might strike some as a little familiar. This is the one solution that has been acceptable for the private sector in its slow move toward the cloud. What has emerged from a common need for shared infrastructure and costs outside of traditional implanted IT has been something a little more robust than a private cloud — it has more in common with what the National Institute of Standards and Technology (NIST) calls a community cloud.

The mode of cloud deployment that these banks are discussing is not the general all-purpose cloud that is suitable for small or medium-sized businesses with fairly routine scaling and cost considerations to weigh; this is a model based on the community cloud model of deployment, wherein a particular community pools its resources and efforts to agree on a solution that is aligned with the mission of all members.

Most of what is being suggested by the bank alliance falls easily into the category of a community cloud, which, according to NIST’s definition, means “the cloud infrastructure is shared by several organizations and supports a specific community that has shared concerns (e.g. mission, security, policy, and compliance considerations).” Accordingly, the only large-scale groups that have been either adopting or considering the community cloud deployment model are government or research agencies that rely on a large amount of cooperation or sharing of resources and that naturally share common borders when it comes to concerns about governance, compliance, and the host of other expected requirements that factor into cloud decision-making in large organizations.

As Andrea DiMaio from Gartner notes, while the community cloud model serves international research agencies and governments well since they have no reason to be competitive with one another, it is an interesting event indeed to see an alliance formed between competing banking giants, spread across continents, no less. DiMaio says, “while cloud looks like a vendor market, what governments worldwide and now some banks are doing in turn is to turn it into a buyer’s market…in fact while some vendors are responding to demands from the U.S. government by establishing U.S.-based clouds that comply with United States federal security requirements, this won’t be enough to serve consortia that cut across geographies.”

This is a model based on common goals rather than, of course, love or a sappy, warm, genuine friendship among good-humored banking industry pals who just happen to compete with one another sometimes. The sentiment that’s been expressed here and there that the public sector would be the guiding light for major private sector parties for once might be correct, at least for banks that have been tied to high fees because of their custom implementations. When there are readily available off-the-shelf or even open source solutions ready and waiting, what happens? The government and now the big banks are setting quite a precedent here.

How Soon is Now?

The question is not whether this effort will work; it is already underway with the backing of three of the largest banks in the world. The issue at stake is how many more financial institutions will jump on board with this idea and send their CIOs out to talk about it.

Bank of America is no stranger to the notion of cloud as the most significant strategic move to cut IT costs. Andrew Brown, managing director and head of strategy, architecture and optimization, has been a public advocate of cloud for the financial services industry for the last year in particular. Brown sees the possibilities for the cloud in enterprise but is aware of the critical questions that enterprises need to ask themselves before adopting their cloud strategy. As Brown stated, “I think most forward-looking companies are looking at internal cloud solutions, external private cloud and external public cloud. The question is, how much data do you feel comfortable moving out and what level of service-level agreement can you obtain from internal, external private and external public cloud offerings?”

The voices of banking industry leaders are growing louder, and now, with the strategic triad of the world’s largest banks calling for revolution, we can expect some companies that have been rather slow in rolling out their cloud provisions (and why should they hurry when the longer they drag their feet the more they can keep collecting on the service, use and other fees?) to start sending out their peacekeepers in droves. In short, expect a wave of new releases from the same giants whom the banking triad has sought to replace. After all, if anyone is in a position of power to produce cloud service offerings that will be aligned with this new vision, it’s the same companies whose provisions are being replaced. Right at this moment, no less.