Using high performance computing to help modernize US manufacturing is one of those good ideas that seems inevitable but always just out of reach. A recent study confirms this, and provides a framework for strengthening the HPC landscape in this sector.

Of course, some might ask what’s the point of trying to boost manufacturing in the US when the sector only employs about 10 percent of the workforce, a figure that is projected to decline further in the coming years. Also, the use of HPC to make manufacturing more efficient is not likely to help the downward employment trend. Employing virtual product design and development and automating other manufacturing processes will probably eliminate more jobs than it creates.

By world standards, the US manufacturing market is already fairly efficient. Despite the relatively few workers employed in the segment, because of its sheer size, US manufacturing dominates world production. Output in 2009 was $2.15 trillion (expressed in 2005 dollars), besting China’s contribution of $1.48 trillion and representing about 20 percent of the world’s manufacturing output.

But the real value of the US manufacturing sector is that it’s at the heart of much of the science and engineering innovation on which the remainder of the economy rests. Today US manufacturers employ more than a third of the country’s engineers and account for 60 percent of all private sector R&D. As such, it creates products that are used by the more lucrative service industries. Think, for example, of all the myriad services that are dependent on the production of computer chips and other electronic devices. Manufacturing, like agriculture before it, is a foundational activity that acts as a catalyst to other business sectors.

Furthermore, according to a recent article in The Atlantic, there is no realistic way to balance US foreign trade that relies exclusively on the service sector. Nor is there a feasible way to employ existing (and future) blue-collar workers without a healthy manufacturing sector.

And healthy it is not, at least from a global perspective. Based on a survey of CEOs conducted by Deloitte and the Council on Competitiveness and released in June 2010, the US is ranked fourth in manufacturing competitiveness, behind China, India, and South Korea, and is expected to drop to fifth place, behind Brazil, by 2015. A National Institute of Standards and Technology factsheet recounts the need for the industry to focus on developing technologically advanced products that can compete in the global marketplace. “There is widespread agreement that rather than engage in a ‘race to the bottom’ for low-wage production facilities, the United States should aim for high-value-added manufacturing opportunities,” says the factsheet.

Moving up the manufacturing food chain often leads to a much better bottom line, and in some cases, extra jobs. For example, Frank van Mierlo, CEO of 1366 Technologies, claims that the US is in a good position to build a silicon chip industry for solar cells. According to van Mierlo, the nation produces around 40 percent of the world’s high-grade silicon for both chips and solar cells, which is worth about $1.7 billion. He says if US-based companies turned that silicon into wafers, it would become a $7 billion business and add 50,000 jobs.

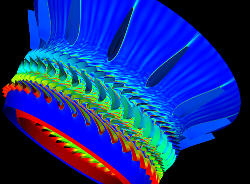

That kind of thinking is being embraced by non-profit groups as well. US government agencies, the Council on Competitiveness, and the National Center for Manufacturing Sciences (NCMS) are all big proponents of high-tech solutions. HPC, in particular, is seen as a key driver in upgrading the nation’s manufacturing capabilities. The use of such technology allows engineers and designers to perform prototyping, product design and analysis, product lifecycle management, and product optimization/validation, with much less reliance on physical mockups and testing.

But despite better access to HPC than is generally available in other countries, fewer than 10 percent of US manufacturers use this technology, according to a recent study conducted by InterSect360 Research in conjunction with NCMS. The study surveyed 323 respondents across industry, academic, government, and trade organizations in July 2010 to capture a snapshot of digital manufacturing practices and attitudes in the US.

Not surprisingly it found that top manufacturers were already major users of high performance computing. Based on the survey, 61 percent of companies with over 10,000 employees are using HPC today to model everything from engine parts to product packaging. The numerous case studies of digitally-engineered products at companies like Boeing, Procter & Gamble, and General Motors attest to the acceptance of HPC at these large firms.

Meanwhile, small manufacturers, which by number represent the vast majority of the companies in this sector, have barely scratched the surface of high performance computing. Here only 8 percent of businesses with under 100 employees are using such technology. Where modeling and simulation tools are being employed, they’re mostly restricted to desktop systems, representing a sort of poor man’s HPC.

The study found the most significant barriers to adoption were the lack of internal expertise, the cost of software, and to a lesser extent, the cost of hardware. To some degree, though, cost concerns may be a misconception. Over 80 percent of companies that currently use HPC report spending less than one-third of their IT budgets on it: not an insignificant amount, but not an overwhelming expense either.

Importantly, 72 percent of desktop-bound CAE users did see a competitive advantage in adopting more advanced computational technology. In such environments, long simulation times and other software issues (compatibility, robustness, data management) were cited as major limitations.

When asked about the importance of different business drivers — production efficiency, time to market, product novelty, product quality, industry leadership, etc. — the survey takers said all were important, but it was product quality that garnered the most intense response. Since HPC enables iterative product refinement in a virtual design and test environment, that could turn out to be a big selling point for the technology.

In manufacturing, as in most verticals, smaller companies tend to be at a disadvantage when it comes to adopting HPC, and this is certainly reflected by the InterSect360 study. But costs, at least of hardware, are coming down. And software costs, while more worrisome, would likely be no more expensive (or at least not substantially more) on an eight-node cluster than on eight standalone workstations.

What most of these manufacturers require is a low-risk path that allows them to segue into high performance computing. Whether that turns out to be partnerships with HPC-savvy organizations, system vendors who can understand and cater to low-end HPC users, or something else remains to be seen. What seems much more certain is the need for manufacturers in the US to be able to compete at the high end of the market with superior quality products. To do that, companies will need to accept HPC as a foundational technology for their businesses.