The recent growth of the HPC market, powered almost exclusively by the adoption of Linux clusters, has been dramatic. But the expanding complexity of the technical clusters that now dominate HPC systems can undermine that growth if it is not effectively addressed. A major cause of cluster complexity is proliferating hardware parallelism: systems average thousands of processors, each of them a multi-core chip whose core count can double every other year. Add to this trend third-party software costs and the difficulty of scaling many applications, and cluster management complexity quickly begins to mount.

Market research firms emphasize the importance of alleviating cluster complexity through a coordinated strategy of investment and innovation to produce an integrated HPC system. They stress that, on the path to Exascale-class systems, software advances will be more important for HPC leadership than hardware advances. This makes it urgent to plan, design and build supercomputer cluster architectures by choosing the right external partnerships, cluster software and management tools. In addition, complete system integration should include professional services and support team expertise to provide easier and faster results for datacenter administrators and end-users.

For 2012, IDC forecasts server revenue will reach a record level of $10.6 billion, en route to $13.4 billion in 2015 (7.2% CAGR).
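The growth arithmetic behind a forecast like this can be checked with a short calculation. The sketch below is illustrative only: the function names are hypothetical, and the three-year span assumes the two quoted figures refer to 2012 and 2015. Under that assumption the two revenue figures imply a rate of roughly 8.1% per year, which suggests the quoted 7.2% CAGR is measured over a different base period that is not stated here.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, annual_rate: float, years: int) -> float:
    """Value reached after compounding `annual_rate` for `years` years."""
    return start_value * (1.0 + annual_rate) ** years


# Figures quoted in the text, in billions of US dollars.
# Assumption: 10.6 is the 2012 figure and 13.4 is the 2015 figure.
implied = cagr(10.6, 13.4, 3)
projected = project(10.6, 0.072, 3)

print(f"Implied 2012-2015 growth rate: {implied:.1%}")
print(f"$10.6B compounded at 7.2% for 3 years: ${projected:.1f}B")
```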

For over 20 years, Appro (www.appro.com) has led innovation in common and open platform integration, providing exceptional supercomputing solutions while enabling researchers and scientists across the globe to fuel discovery through the use of HPC. Appro is well positioned to address the HPC cluster market growth and has amassed the in-house domain expertise needed to act as a trusted advisor to HPC users.

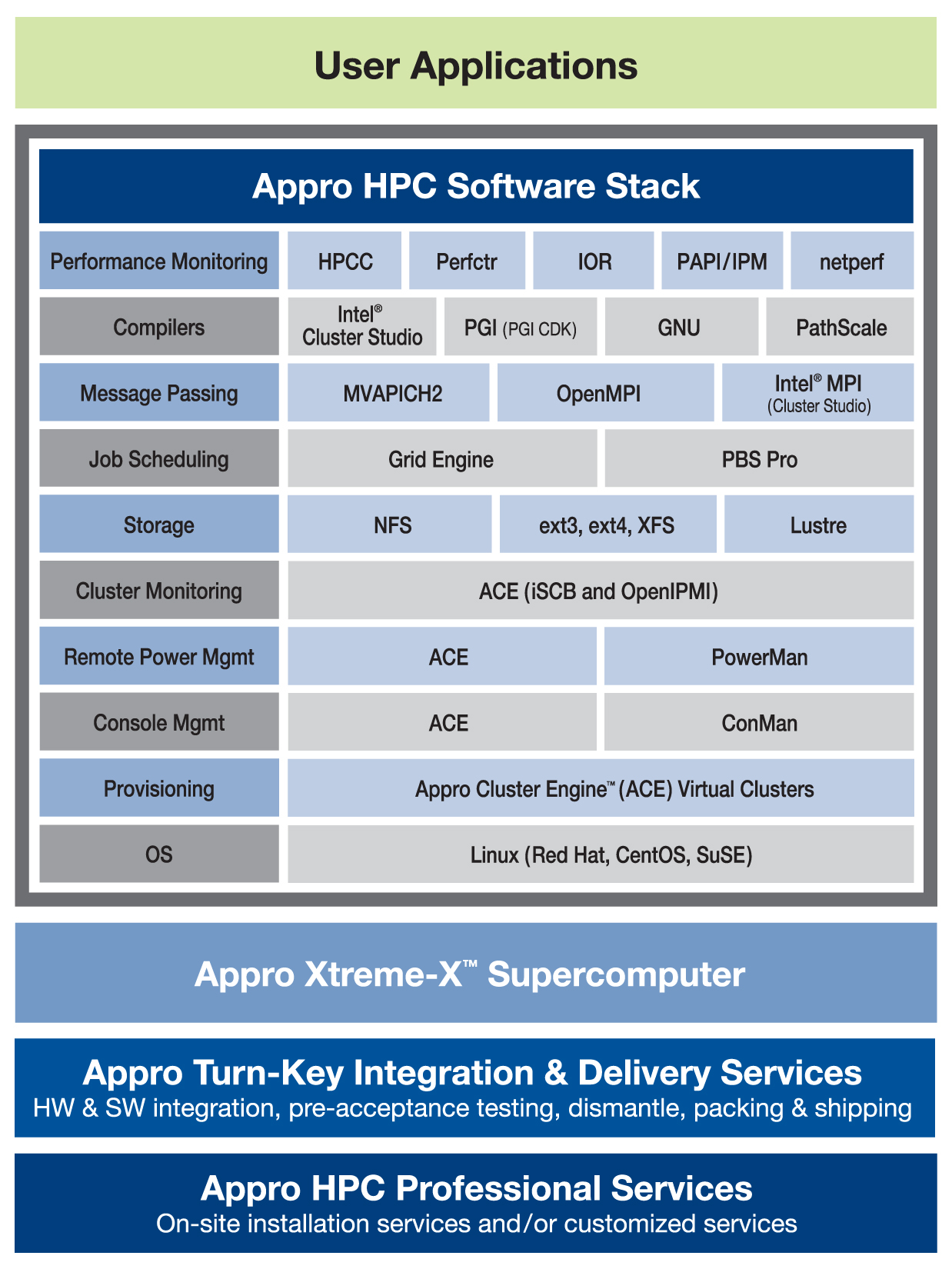

The Appro Xtreme-X™ Supercomputer is tightly integrated with the Appro HPC Software Stack, which provides cluster management, compilers, tools, schedulers, and libraries.

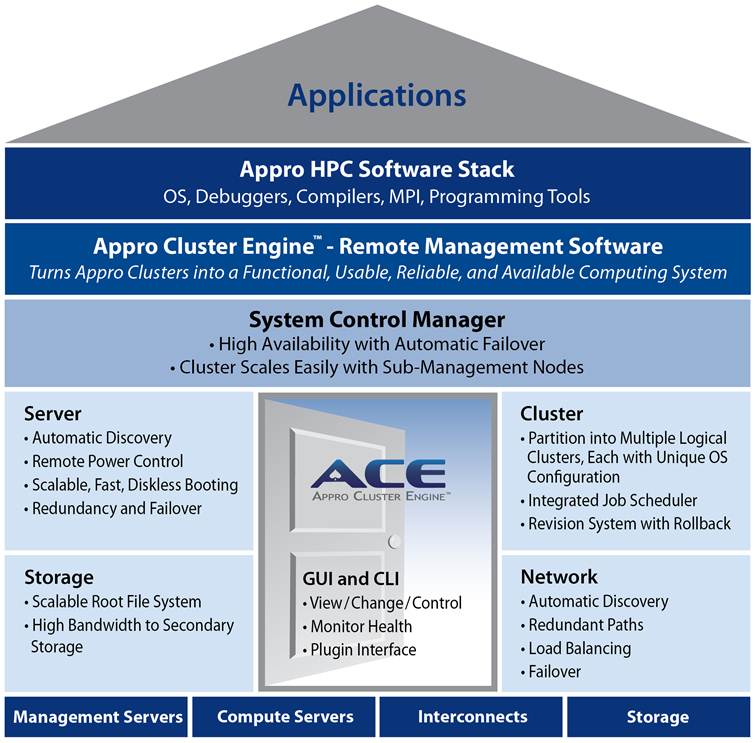

The Appro Cluster Engine™ (ACE) management software offers server, cluster, storage and network management features combined with job scheduling, failover, load balancing and revision control capabilities, and it supports multiple Linux operating systems. ACE includes a Graphical User Interface (GUI) that is intuitive and easy to use, connects directly to the ACE Daemon on the management server, and can be run from multiple operating systems.

As an overview, the following is a list of the essential HPC software and management tools required for cluster architecture:

The HPC Software Stack:

1) Operating System

2) Cluster Management System

3) HPC programming tools

The Operating System is the first level of the software stack. Most HPC systems use a variant of the commodity Linux operating system. Popular versions of Linux include Red Hat, CentOS, and SuSE. Since they all build on the same Linux kernel, the main differences between these versions are the installation programs and the support provided by the companies behind them. The most important factor driving your choice of operating system is the set of applications that you need to run on your cluster. Check with your application provider (or your application developer, if you are using a custom application) for supported operating systems and requirements for additional drivers.

The Cluster Management System is the second level of the software stack. ACE manages the operation and maintenance of an Appro supercomputing cluster and is an essential level of the Appro Software Stack. It is designed from the ground up for cluster scalability and performance, serving both high-performance capacity computing and capability supercomputing on open-standards hardware. ACE manages a set of independent physical computers interconnected by high-speed networks, allowing the individual computers to function as a single, integrated computing system. It also manages the redundant hardware and software components required to provide the fault tolerance needed to meet enterprise-level availability requirements. This total management capability is critical to the reliable operation and availability of a high-performance, enterprise-class capability supercomputer.
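The idea of redundant management components with failover can be illustrated with a toy model. This is a minimal sketch under stated assumptions and does not represent ACE's actual design or interface: the `ManagementServer` and `Cluster` classes, the `check_and_failover` method, and the server and node names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ManagementServer:
    """A hypothetical management server; not part of any real ACE API."""
    name: str
    healthy: bool = True


@dataclass
class Cluster:
    """Toy model: redundant management servers in front of compute nodes."""
    servers: list
    compute_nodes: list = field(default_factory=list)
    active_index: int = 0

    @property
    def active(self) -> ManagementServer:
        return self.servers[self.active_index]

    def check_and_failover(self) -> ManagementServer:
        """Keep the active server if healthy, else promote the first healthy standby."""
        if self.active.healthy:
            return self.active
        for index, server in enumerate(self.servers):
            if server.healthy:
                self.active_index = index
                return server
        raise RuntimeError("no healthy management server available")


cluster = Cluster(
    servers=[ManagementServer("mgmt-1"), ManagementServer("mgmt-2")],
    compute_nodes=[f"node-{i:03d}" for i in range(4)],
)
cluster.servers[0].healthy = False  # simulate a failure of the active server
print(cluster.check_and_failover().name)  # prints mgmt-2
```

With a single management server the same failure would raise an error and leave the cluster unmanaged, which is why the redundant component matters for availability.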

The HPC programming tools are the third level of the software stack. They support the generation, execution, and debugging of applications that run on the cluster. The HPC programming tools consist of the compilers, libraries, and special software used to develop and test application software. Included are libraries that perform operations common to many applications. These operations, such as math functions, are optimized to make the most efficient use of a particular processor’s capability. The libraries simplify user programs, since they can be included where required with little programming effort. The HPC stack may also include tools for debugging applications and testing system performance. These tools are all critical to the efficient use of an HPC cluster.

Appro products and solutions combine hardware, software, professional services and partnerships to offer complete end-to-end supercomputing solutions to various industries. Appro has a remarkable record of delivering scalable production deployments, and its systems are ranked on the Top500 list among the world’s fastest supercomputers based on open-standards cluster architecture using x86 processing technologies. Appro is leading the way in enabling technical applications and business results through power-efficient Petascale systems and fast time-to-market solutions. These are based on the latest open-standards technologies, coupled with innovative HPC cluster software tools and backed by HPC professional services and support.