The Moabcon event comes on the heels of Adaptive Computing's recently announced Moab portfolio refresh. Naturally, these products were the focus of the three-day event, which took place earlier this month, and featured heavily in the program (more details of which can be found here).

The conference was well attended and held at a magnificent ski resort in Park City. The only glitch was that there was no snow to welcome arriving attendees. Daytime temperatures were in the 70s on Tuesday and Wednesday, but what a difference a day makes: Thursday's temperature probably never got above 35 following an overnight snowfall. See the before and after pictures:

But enough of the weather and location. The company's annual conference was Adaptive's first opportunity to showcase the new Moab Version 7 to its large user community. The technical sessions and keynotes were also very well attended and, by all accounts, provided excellent value and education.

Highlights. Where to start, with so many good presentations? Let's begin with Tuesday evening's entertainment, "HP Presents a Night at the Olympics." The adventure began with a gondola ride to the top of the mountain for networking and dinner, followed by zip lining and assorted Olympic-style games. Everyone headed up the mountain from about 7,000 feet to 8,000 feet, and the evening weather was great. Zip lining was a big hit: riders are suspended from a pulley hooked onto a cable strung on an incline, then propelled by gravity from the top to the bottom of the cable, reaching speeds of 40+ mph. An adrenaline rush.

Wednesday kicked off with a fireside chat from HP executives Marc Hamilton and Jerome Labat, a lively discussion on the state of cloud technology and what it means for HPC and beyond. This was followed by a keynote session, "To cloud or not to cloud," which included a brief walk down memory lane to the foundations of cloud computing in timesharing, continued through today's private cloud benefits and ROI, and ended with the obstacles to adoption, focusing on security and on the need for a single, unified management system that can discover, manage and report on all heterogeneous cloud resources.

Following the keynotes, the day's technical breakout sessions started. The conference ran two tracks, the second focused on cloud computing. Adaptive Computing Technical Consultant Dan Croft presented an introduction to the world of cloud from an HPC point of view. His talk covered the many new features of Moab's HPC cloud model and how they can be used in a traditional HPC environment alongside an enterprise environment.

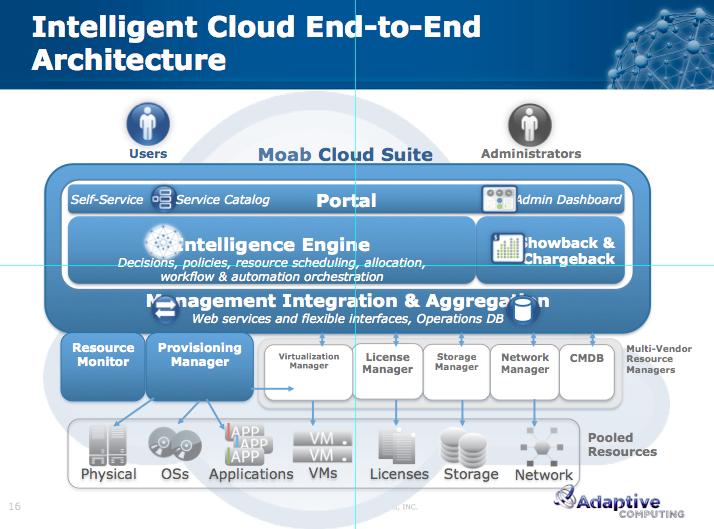

When it comes to running HPC workloads, even in the cloud, the Moab HPC Suite will be the go-to product. With an emphasis on flexibility and automation, Moab's private HPC cloud solution intelligently reprovisions machines according to the needs of the workload. The Moab Cloud Suite, by contrast, is aimed mainly at running enterprise IT applications in a private or hybrid cloud.

This was followed by hands-on demos and a more detailed drill-down into the features of Moab Cloud Suite 7.0 xCAT edition. As in the previous session, there was a lot of focus on the user experience, together with a deeper look at accounting and resource allocation, two key areas that will improve adoption of private cloud computing. The good news is that all of this is managed from a single keyboard and screen.

The lunchtime speakers included:

- Rich Tehrani, Cloud Computing Magazine
- Michael Jackson, President and Founder, Adaptive Computing
- Merle Giles, NCSA Private Sector Program: Accelerating Business with HPC as a Service

Rich shared some interesting industry trends and statistics on cloud computing, drawn from analyst reports by IDC, Gartner and Forrester Research as well as other industry sources. In short, the market is big and growing. Unfortunately, he was not able to drill down into HPC-specific data.

Michael Jackson's keynote focused on the new version of Moab and how it empowers users to:

- Increase performance
  - Workload
  - System
- Increase agility
- Increase reliability
- Increase cost savings
- Achieve their objectives

Michael positioned Moab 7.0 as an intelligent end-to-end architecture for cloud computing. Adaptive is clearly gaining customers in the enterprise HPC market, specifically in manufacturing, financial services, and oil and gas. Moab's focus on ease of use, simplicity, and accounting and usage tracking will no doubt help it win additional enterprise-class customers. Specific capabilities include:

- Simplified job submission and management.
- New Web Services for easier integration.
- Updated self-service portal and admin dashboard.
- Greater usage budgeting and accounting flexibility.
- Additional database support.

Merle Giles, director of NCSA's Private Sector Program, delivered a very good talk on the importance of HPC to the manufacturing industry. The keynote, "Accelerating Business with HPC as a Service," was split into three parts: 1) economic development, 2) accelerating business, and 3) addressing the future.

NCSA brings together traditional HPC hardware vendors, leading ISVs of manufacturing applications, and key industrial partners. As a result, the industrial partners can use HPC systems to improve product design and accelerate time to market by modeling their advanced designs and materials on some of the latest HPC systems, all in a pay-as-you-go cloud computing model. The program brings the promise of HPC to a broad segment of the market, enabling businesses to tap into all the benefits HPC has to offer as well as a wealth of knowledge within the HPC community.

Among NCSA's computing resources, the iForge system, with over 120 Dell servers and designed specifically for industrial use, is a valuable platform for industrial power users looking to maximize productivity. The Moab intelligence engine is used to automate and self-optimize IT workloads, resources, and services in large, complex, heterogeneous computing environments. This 22-teraflop high-performance computing cluster is used by NCSA's Private Sector Program, which leases time on iForge in a cloud-like fashion to some of the biggest names in industry, household names such as Boeing, BP, Caterpillar, John Deere, Nokia Siemens Networks, Procter & Gamble and Rolls-Royce.

NCSA's computing future is based on Blue Waters, the first open-access system tasked with achieving sustained performance of a petaflop or more on a wide variety of applications. Blue Waters is a 25,000-node Cray system that will bring together government, university and industry collaborators. The system will not just crunch numbers but will also deal with the growth in big data and data analytics.

Merle related a recent user experience that illustrates the value of HPC to the private sector. A small radiator supplier for one of the largest automotive companies needed to develop a new radiator. In its existing environment, the design work would take several days to complete. NCSA set the company up with access to iForge and to licenses for thermal and CFD ISV codes, and the same design then ran in a few hours, a significant difference. Everyone wins in this scenario. Beyond this huge performance improvement, the engineering team saw that, with the horsepower now available, including 3D modeling, it could do an even better job on the design. The end result was a redesigned radiator that would be quicker to manufacture and perform better in the vehicle, all in less time than iterating on the same design over and over again. This would not have been possible for such a small company, as it had neither the hardware resources, the application expertise, nor the dollars needed to do the work in a non-cloud environment.

Merle's final key messages included the need for more collaboration and joint ventures between the HPC community and commercial end users; such joint ventures are critical going forward.

On the final day, Thursday, the customer keynote was delivered by Preston Smith from Purdue University, one of Adaptive's most recent customer wins. It was a lighthearted trip down Purdue's HPC memory lane, with some great old photos of computer systems spanning over 30 years.

Purdue's Computer Science Department, possibly the first in the USA, was created in 1962. In 1967 a CDC 6500 was installed. 1983 saw the installation of a CDC 205, followed a few years later by an ETA 10 running System V Unix. Systems of the 1990s included the Intel Paragon, the IBM RS6000 and even the IBM SP/2. The 2000s brought Linux clusters and systems from Sun Microsystems, the E10K and F6800, both fat-node SMP systems. These later gave way to Dell/Intel/GigE clusters and, eventually, to today's HP/Intel/Mellanox InfiniBand systems with over 10,000 cores.

Over the years these various systems were managed using PBS Works. After a few months of evaluating Torque and Moab, Purdue decided to switch from PBS Works, as Moab provided better scheduling, GPU support, upgrades with no downtime, and NUMA support. Going forward with Moab, Purdue sees value in job status reporting, license management, overall usability and GPU scheduling.

All in all, this was a very well-organized and successful user conference: a great location and hospitality and, more importantly, technical sessions that provided excellent value for Moab users.