Two of Cray’s more notable achievements in 2011 — the contract win of the “Blue Waters” supercomputer re-bid and the addition of a high-performance storage line — are reaping dividends in 2012. In this interview with Barry Bolding, Cray’s vice president of storage and data management, HPCwire takes a behind-the-scenes look at that unusual procurement and the company’s subsequent move into the storage business.

HPCwire: How quickly did Cray need to respond when the Blue Waters procurement re-opened last year?

Barry Bolding: This opportunity was notable for the combination of its timeline, size and scope. Cray often needs to respond quickly and our manufacturing organization is optimized to produce large systems for customers. But the scope of the Blue Waters opportunity was big enough, and the timeframe was short enough, that we knew it would tax not only our own manufacturing, but our entire supply chain. The challenges included producing and delivering more than 270 Cray compute cabinets and a groundbreaking file system with 25 petabytes of storage and sustained aggregate performance of one terabyte per second, and fitting all of this into NCSA’s existing environment in a compressed timeframe.

HPCwire: What was the timeframe like?

Bolding: The NCSA request for vendors was issued in early August 2011, and by SC11 we were in contract, so this was a very compressed time to evaluate the architecture. We started delivering the first Cray system just after the contract was signed, and most of the hardware for an early science system was in transit by the end of the year.

HPCwire: Did you already have blueprint plans on hand that you could adapt?

Bolding: No, but we have processes in place and experience with building big systems. Our standard compute and storage products are all designed for high scalability. Cray’s blueprint is simply our single focus on HPC. We are optimized for the possibility of very large installations.

HPCwire: What’s the current status of the Blue Waters installation?

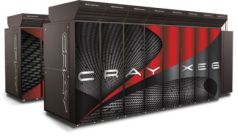

Bolding: In the first phase of the delivery, by early March 2012 we made a complete Cray system available for early science users. This Cray XE6 Early Science System has peak performance of more than one petaflop and includes two petabytes of high-performance Cray Sonexion storage. That’s about 15 percent of the final system. The ESS is a standalone system with 48 cabinets that will be incorporated into the final Blue Waters system.

HPCwire: Who has access to the Early Science System?

Bolding: Initially, six research teams were awarded allocations through an NSF-led competition that started with more than two dozen teams. The research of the six teams focuses on topics ranging from supernovae to climate change to the molecular mechanism of HIV infection. Their progress in meeting preliminary goals has been good. In some cases, the Early Science System is already allowing researchers to do things they couldn’t do before. In May 2012, four more research teams were awarded time on the system. They are studying topics including severe weather, the life cycle of stars, and turbulent combustion.

HPCwire: What’s left to do on the Blue Waters deployment?

Bolding: A substantial portion of the hardware is now on site, so the delivery side is less of a major concern. Our current focus is on integration and performance scaling, including the software and the network. This will be the largest Gemini network we’ve built. You always encounter new challenges when you build a system bigger than ever before, so we expect to see some new challenges.

HPCwire: Are you getting performance data yet on real-world codes?

Bolding: Users are already giving conference talks and publishing papers on their work using the Early Science System. The storage subsystem is performing and scaling very well. In the final configuration, there will be one terabyte per second of aggregate bandwidth. On the partial system, we’re seeing linear scaling to about 60 gigabytes per second today.

HPCwire: You’ve said that this is the largest supercomputer Cray was ever contracted to deliver. How was this assignment different from other big ones Cray’s tackled?

Bolding: Deploying our largest supercomputer ever and doing so at a site that hasn’t owned a Cray in recent years is a huge job. We often sell very large systems to existing customers that have started with moderate-sized systems, such as Los Alamos, Oak Ridge, and NERSC. The last time we installed a system of similar magnitude at a site that hadn’t had a Cray in a long time was the “Red Storm” system at Sandia. It’s been nine years since we did that.

Blue Waters will be one of the world’s highest sustained performance configurations on both the compute and storage sides. This is groundbreaking, even for Cray. The storage will be four times larger than on any prior Cray system. It’s no longer just about compute for Cray. World-leading compute and world-leading storage are now the one-two punch for our company.

HPCwire: What’s different about Sonexion storage?

Bolding: The Cray Sonexion storage product line was designed from the ground up for scalability. This is unlike any other Lustre file system we’ve installed. We couldn’t have met the requirements for NCSA Blue Waters with any current technology other than Sonexion. I believe Sonexion has the best odds of achieving one terabyte per second sustained performance based on its highly scalable building-block architecture. Other vendors are aiming for similar milestone installations, but we see those architectures as less scalable than the Sonexion architecture.

It’s built for high scalability with Scalable Storage Units, or SSUs. Each time you add an SSU you add both bandwidth and capacity to the file system in a very balanced way. The switching and server infrastructure are integrated into the file system racks and cabinets themselves. This is done in the factory so it’s ready to run at the customer site.

This storage architecture also minimizes the switching and cabling needed to connect to any compute module it’s attached to. More conventional architectures require external servers and more complex InfiniBand switching, and each server would need many more spinning disks to match the performance of a single Sonexion SSU.

We see all this as adding costs and risks to the ability to scale storage productively. Another unique thing is that Sonexion is also designed as a datacenter-wide file system that connects not just to Cray supercomputers but to any others in the data center.
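The building-block scaling described above, where each added SSU contributes both bandwidth and capacity, can be illustrated with a minimal sketch. The per-SSU figures used here (5 GB/s and 125 TB) are hypothetical, chosen only so that the totals line up with the 25-petabyte and one-terabyte-per-second targets quoted in this interview; they are not Cray specifications, and the class and function names are invented for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalableStorageUnit:
    """One building block; both figures are hypothetical, not vendor specs."""
    bandwidth_gb_s: float  # sustained bandwidth contributed by one unit
    capacity_tb: float     # usable capacity contributed by one unit


def units_needed(unit, target_bandwidth_gb_s, target_capacity_tb):
    """Return how many units satisfy both the bandwidth and capacity targets."""
    by_bandwidth = math.ceil(target_bandwidth_gb_s / unit.bandwidth_gb_s)
    by_capacity = math.ceil(target_capacity_tb / unit.capacity_tb)
    # The larger of the two counts is the binding constraint.
    return max(by_bandwidth, by_capacity)


def aggregate(unit, count):
    """Assume ideal linear scaling: totals grow in step with the unit count."""
    return unit.bandwidth_gb_s * count, unit.capacity_tb * count


if __name__ == "__main__":
    ssu = ScalableStorageUnit(bandwidth_gb_s=5.0, capacity_tb=125.0)  # assumed
    count = units_needed(ssu, target_bandwidth_gb_s=1000.0,
                         target_capacity_tb=25000.0)
    print(count, aggregate(ssu, count))
```

In a balanced design of this kind, the bandwidth and capacity targets call for the same number of units, so neither resource is overprovisioned to satisfy the other. Real systems will not scale perfectly linearly; the sketch assumes they do.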

HPCwire: So do you plan to sell Sonexion products to sites that don’t have Cray supercomputers?

Bolding: Absolutely yes.

HPCwire: How have things been going for the Sonexion products in the marketplace? Has it been hard for HPC buyers to see Cray as a storage vendor?

Bolding: We’re traditionally viewed as an HPC compute company, and customers are still learning to view us as a storage company. But every time we install a new Sonexion system, the perception changes for that customer and that set of users. We’ve already done Sonexion installations in government, academia, and in the energy sector, and they’re all in heavy production mode.

To date, all of these are connected to Cray supercomputers, but we’re starting to sell to non-Cray HPC customers and this will help change current perceptions. Don’t forget that our predecessor, Cray Research, made some of the greatest storage innovations with SSDs and data migration. We work closely with our OEMs to ensure we are on the cutting edge from the perspective of software management, performance, and price-performance. While we’ve worked closely with a storage OEM on it, Sonexion is a Cray offering from the ground up. Our expertise includes Lustre scalability.

HPCwire: NCSA formed 25 domain-specific scientific teams two years before Cray entered the picture and has been working with them to prepare their codes for pursuing sustained petaflop performance on Blue Waters. Has Cray gotten directly involved with these teams?

Bolding: NCSA is the primary driver collaborating with the science teams. NCSA and NSF did highly value Cray’s deep experience with the apps. We have one of the most experienced applications teams in the world. We work with scientists all around the world and we have centers of excellence where we work closely with end-users to help scale up their codes. NCSA saw real value in this.

Today, our apps experts are working closely with NCSA science teams to scale their applications to meet performance expectations. Another Cray system has already enabled sustained petaflops performance on a different workload of five real-world codes. We believe that this demonstrates that Cray systems are more productive at scale than those of any other vendor.

NCSA-NSF users have a different set of very challenging applications, so it won’t be easy to achieve this breakthrough performance level. All applications are different and each has its own challenges. But Blue Waters is a groundbreaking supercomputer and we’re confident it will meet the expectations of NCSA and NSF by enabling users to achieve petascale performance on a range of codes.

HPCwire: Are the requirements of NSF scientific users different from those of DOE scientific users Cray serves?

Bolding: There is no single NSF or DOE user type. We’ve been selling into DOE for a number of years. Some systems, like “Jaguar,” support a small number of apps that are scaling high. NERSC has a much broader mandate and supports a larger number of applications. I think Blue Waters will have both: a broad user base from many scientific domains and a broad range of scaling requirements and goals, on up to petascale.

The 25 science teams formed by NCSA represent more domains of science than are typical for a DOE site. A subset of Blue Waters users will be running at extreme scale, almost to the full size of the 11.5 petaflop system.

HPCwire: Blue Waters will also be available to industrial users through NCSA’s Private Sector Program. Do these users have any special storage or other requirements?

Bolding: NCSA has a very active private sector program that Cray has joined. We think it’s vital that systems like Blue Waters are leveraged for the good of the economy and American competitiveness. Cray is dedicated to both public-sector and private-sector computing.

Our M-series systems are designed primarily for the private sector. For their most advanced research and in other cases where they don’t have adequate HPC resources of their own, private-sector firms sometimes need access to much larger systems and to expertise in using them. That’s what NCSA’s private sector program is all about, and Cray is now part of this program.

HPCwire: What’s the overall significance of the Blue Waters project for Cray? How does it move you forward as an HPC vendor?

Bolding: NCSA is a flagship site for highlighting Cray as a scalable storage provider, and it’s a great launching point for us. We’ll deploy one of the largest, most capable storage systems in the world there. On the compute side, Blue Waters is the latest in a continuing series of the kinds of big challenges that Cray is organized to face. So, this is an important milestone for us. I don’t think any other company on the planet could deliver the sustained performance we’ll be delivering for Blue Waters in this challenging timeframe.