On Aug. 1, Rackspace became the largest OpenStack-based public cloud in the world. After a four-month beta period, Rackspace began using the open source cloud management platform OpenStack to power its extensive public cloud infrastructure. The company is allowing customers to continue using the legacy system if they have any concerns about the transition.

The move occurs just weeks after OpenStack celebrated its second birthday. The open source project was founded by NASA and Rackspace in 2010 and quickly gained a dedicated following. At last count, its global community had attracted nearly 3,400 experts and developers and 184 participating companies. The OpenStack Foundation is set to launch later this year and, as part of that process, is preparing to hold elections for its board later this month.

In an April announcement, Jonathan Bryce, a member of the OpenStack Project Policy Board and co-founder of Rackspace Cloud, stated: “In less than two years, we’ve had five software releases from hundreds of contributors from more than 50 companies, and the cloud operating system has grown from two core projects to five core projects across compute, storage and networking. The formation of a Foundation is about preserving and accelerating what’s working and moving the community building activities to a neutral long-term home with a broad base of support.”

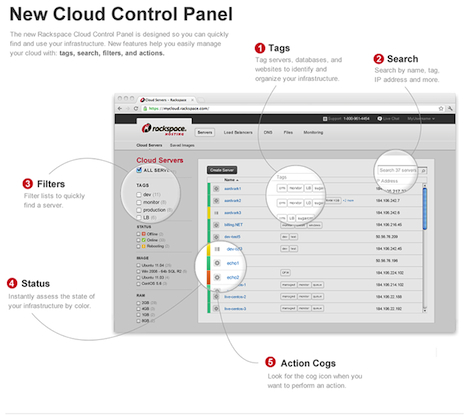

Rackspace’s open cloud portfolio relies on core components of Essex, the fifth OpenStack software release. It includes Cloud Servers and Databases, based on OpenStack Compute (code-named Nova), as well as Cloud Files, based on OpenStack Object Storage (code-named Swift). The portfolio also includes the Cloud Sites platform as a service, which supports .NET and PHP, along with load balancers and monitoring. Rackspace also offers a new Cloud Control Panel that works with both Rackspace’s legacy code and OpenStack. Designed to be more user-friendly, the Web-based console incorporates tags, filters, search functionality and action cogs to ease the management of large and complex deployments.

Rackspace Cloud Control Panel

Rackspace says the new Cloud Servers deliver benefits such as increased efficiency, scalability and agility, and allow customers to launch up to 200 cloud servers in 20 minutes. The company claims its MySQL database service delivers 229 percent faster performance than Amazon’s MySQL-based Relational Database Service (RDS).

Not all of OpenStack’s components were ready to make the transition. Cloud Networks (based on Project Quantum) and Cloud Block Storage (based on the Lunr project), which were in beta in April, are still not in production. Rackspace says it is planning to incorporate these additions this fall, perhaps because that’s when the OpenStack “Folsom” release is due out.

The new entry price for Cloud Servers is 2.2 cents per hour (or $16.06 a month), which gets you a 512MB virtual Linux server with 20GB of disk. This is a roughly 27 percent cut from the previous price of 3 cents per hour. The old entry-level configuration (256MB of virtual memory and 10GB of disk for 1.5 cents per hour) is no longer available. A Windows virtual machine with 1GB of memory and 40GB of disk will run 8 cents an hour ($58.40 a month), versus 6 cents an hour ($43.80 a month) for the Linux equivalent. The pricing differential reflects the additional costs associated with Windows machines.
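To make the pricing arithmetic easy to check, here is a minimal Python sketch that converts the quoted hourly rates into monthly costs. It assumes a 730-hour billing month (365 days × 24 hours ÷ 12 months), which is the figure that reproduces the monthly numbers above. Rackspace's actual billing rules may differ, and the function names are purely illustrative.

```python
# Assumption: a billing "month" is 730 hours (365 days * 24 hours / 12 months).
# This reproduces the article's monthly figures, but it is not taken from Rackspace.
HOURS_PER_MONTH = 365 * 24 / 12  # 730.0


def monthly_cost_dollars(cents_per_hour: float) -> float:
    """Convert an hourly rate in cents to a monthly cost in dollars."""
    return round(cents_per_hour * HOURS_PER_MONTH / 100, 2)


def percent_reduction(old: float, new: float) -> float:
    """Percentage drop from an old price to a new one."""
    return (old - new) / old * 100


if __name__ == "__main__":
    # Hourly rates (in cents) quoted in the article.
    configs = [("Linux 512MB", 2.2), ("Linux 1GB", 6), ("Windows 1GB", 8)]
    for label, cents in configs:
        print(f"{label}: ${monthly_cost_dollars(cents):.2f}/month")
    # Old 3-cent entry price vs. the new 2.2-cent price.
    print(f"Entry-level price cut: {percent_reduction(3.0, 2.2):.1f}%")
```

Run as a script, this prints $16.06, $43.80 and $58.40 per month for the three configurations and a 26.7% cut for the entry-level price, which the article rounds to 27 percent.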

Rackspace is not forcing customers to migrate to the OpenStack code, but that is clearly its end goal, and as of Aug. 1, all new instances default to the new infrastructure. Customers who signed up before the transition and want to spin up legacy-code machines will have to ask Rackspace for a workaround. The company acknowledges that it could take a year or more before every customer makes the shift.

The speculation mill holds that this move is aimed at taking market share from cloud king Amazon, but Rackspace CEO Lanham Napier says that’s just not true. The head Racker told Gigaom’s Derrick Harris that Rackspace is not attempting to go head to head with Amazon Web Services, noting that “AWS, in particular, is playing a scale game. We’re playing a different game.” Rackspace’s game is a three-pronged approach that emphasizes speed, performance and service.

Speaking of service, Napier never misses an opportunity to highlight Rackspace’s attention to delivering “fanatical support,” the company’s trademarked term for its brand of exceptional customer service. He expands on this customer focus in a recent blog post, where he explains how this commitment aligns with the company’s move to embrace open source:

“Right now,” writes Napier, “we’re focused on moving Fanatical Support to a new open-source cloud computing platform that we call Open Cloud, which will allow customers to avoid the vendor lock-in that they’ve faced up until now — on our platform as well as on others.”

“We think customers ought to be able to move anytime they see another provider offering better features or service or value. Yes, this means they can leave us on a whim. Moving to this new platform is a big risk for us. It’s expensive. It’s caused some short-term volatility in our business. But it’s working for us. We’re getting great feedback from customers about our offering that gives them the freedom to move. But by sticking to our guns and focusing on the customer, we hope they won’t.”

To complete its open source makeover, Rackspace announced some “brand enhancements” on Tuesday, nearly one week after the big OpenStack launch. By adding “the open cloud company” to its red-and-black logo, Rackspace is declaring itself a 21st-century “open” cloud provider. On the same day, the company released its second-quarter earnings. Profits rose 43 percent, reflecting better-than-expected revenue from its public cloud business. The strong results could help allay concerns about the disruption caused by the OpenStack rollout, which, as the CEO acknowledges, is a risk.

The phrase “Linux of the cloud” is being applied to OpenStack, but it’s still too soon to tell whether the open source cloud “operating system” has the same staying power. The platform is not without critics. The most-repeated complaint is that it’s not a unified product but rather a collection of components, which could make it difficult for some enterprises to implement. The prevailing sentiment is “it’s got great potential, but call me in two years.” However, with the right backing, technology can ramp up quickly. The project’s nearly 200 participating companies have deep expertise and even deeper pockets, not to mention a vested interest in making sure that their baby’s twos are not terrible. Time will tell, and very soon so will Rackspace’s more than 190,000 customers.