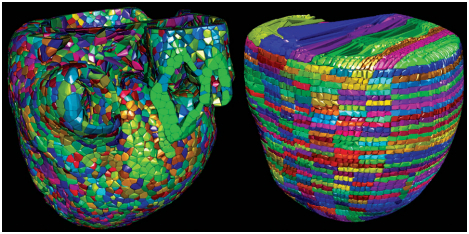

The Cardioid code, developed by a team of Livermore and IBM scientists, divides the heart into a large number of manageable pieces, or subdomains. The development team used two approaches, called Voronoi (left) and grid (right), to break the enormous computing challenge into much smaller individual tasks. Source: LLNL

The world’s fastest computer has created the fastest computer simulation of the human heart.

The Lawrence Livermore National Laboratory’s Sequoia supercomputer, a TOP500 chart topper, was built to handle top-secret nuclear weapons simulations, but before it goes behind the classified curtain, it is pumping out sophisticated cardiac simulations.

Earlier this month, Sequoia, which currently ranks number one on the TOP500 list of the world’s fastest computer systems, received a 2012 Breakthrough Award from Popular Mechanics magazine. Now the magazine is reporting on Sequoia’s ground-breaking heart simulations.

Clocking in at 16.32 sustained petaflops (20 PF peak), Sequoia is taking modeling and simulation to new heights, enabling researchers to capture greater complexity in a shorter time frame. With this advanced capability, LLNL scientists have been able to simulate the human heart down to the cellular level and use the resulting model to predict how the organ will respond to different drug compounds.

Principal investigator Dave Richards couldn’t resist a little showboating: “Other labs are working on similar models for many body systems, including the heart,” he told Popular Mechanics. “But Lawrence Livermore’s model has one major advantage: It runs on Sequoia, the most powerful supercomputer in the world and a recent PM Breakthrough Award winner.”

The simulations were made possible by an advanced modeling program, called Cardioid, that was developed by a team of scientists from LLNL and the IBM T. J. Watson Research Center. The highly scalable code simulates the electrophysiology of the heart. It works by breaking down the heart into units; the smaller the unit, the more accurate the model.

Until now the best modeling programs could achieve 0.2 mm in each direction. Cardioid can get down to 0.1 mm. Where previously researchers could run the simulations for tens of heartbeats, Cardioid executing on Sequoia captures thousands of heartbeats.
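
A back-of-the-envelope sketch shows why halving the spacing is a big step. It assumes uniform cubic cells in three dimensions, which the article does not state; the function name is illustrative only and is not part of Cardioid.

```python
def cell_count_ratio(old_spacing_mm: float, new_spacing_mm: float, dims: int = 3) -> float:
    """Factor by which the number of cells grows when the spacing shrinks.

    Assumes a uniform grid: cell count scales as (1 / spacing) ** dims.
    """
    return (old_spacing_mm / new_spacing_mm) ** dims


# Spacings quoted in the article: 0.2 mm (previous best) and 0.1 mm (Cardioid).
ratio = cell_count_ratio(0.2, 0.1)
print(f"Roughly {ratio:.0f}x more cells at 0.1 mm than at 0.2 mm")
```

Under that assumption, the finer model tracks about eight times as many units for the same heart volume, before accounting for any change in time step.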

Scientists are seeing 300-fold speedups. It used to take 45 minutes to simulate just one beat, but now researchers can simulate an hour of heart activity – several thousand heartbeats – in seven hours.
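
The quoted figures can be checked with rough arithmetic. The sketch below assumes a resting heart rate of 50 to 70 beats per minute, which the article does not give, and it ignores any difference in resolution between the older and newer runs, so it is an order-of-magnitude check rather than a benchmark.

```python
OLD_SECONDS_PER_BEAT = 45 * 60   # article: 45 minutes to simulate one beat
NEW_WALL_SECONDS = 7 * 3600      # article: seven hours of computing
SIMULATED_SECONDS = 3600         # article: one hour of heart activity


def implied_speedup(beats_per_minute: float) -> float:
    """Speedup implied by the article's figures for an ASSUMED heart rate."""
    beats = SIMULATED_SECONDS / 60 * beats_per_minute
    new_seconds_per_beat = NEW_WALL_SECONDS / beats
    return OLD_SECONDS_PER_BEAT / new_seconds_per_beat


for bpm in (50, 60, 70):  # assumed heart rates, not from the article
    print(f"{bpm} bpm -> about {implied_speedup(bpm):.0f}x")
```

At 60 beats per minute, an hour of heart activity is 3,600 beats, or about seven seconds of computing per beat. That works out to a speedup of a few hundred, in line with the 300-fold figure.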

With the less sophisticated codes, it was impossible to model the heart’s response to a drug or perform an electrocardiogram trace for a particular heart disorder. That kind of testing requires longer run times, which just wasn’t possible before Cardioid.

The model could potentially test a range of drugs and devices like pacemakers to examine their effect on the heart, paving the way for safer and more effective human testing. But it is especially suited to studying arrhythmia, a disorder of the heart in which the organ does not pump blood efficiently. Arrhythmias can lead to congestive heart failure, an inability of the heart to supply sufficient blood flow to meet the needs of the body.

Various types of medication can disrupt cardiac rhythms. Even those designed to prevent arrhythmias can be harmful to some patients, and researchers do not yet fully understand what causes these negative side effects. Cardioid will enable LLNL scientists to examine heart function as an anti-arrhythmia drug enters the bloodstream. They’ll be able to identify when drug levels are highest and when they drop off.

“Observing the full range of effects produced by a particular drug takes many hours,” noted computational scientist Art Mirin of LLNL. “With Cardioid, heart simulations over this timeframe are now possible for the first time.”

The Livermore–IBM team is also working on a mechanical model that simulates the contraction of the heart and pumping of blood. The electrical and mechanical simulations will be allowed to interact with each other, adding more realism to the heart model.

It’s not entirely clear why a national defense lab took on this heart simulation work. Fred Streitz, director of the Institute for Scientific Computing Research at LLNL, would say only that “there are legitimate national security implications for understanding how drugs affect human organs,” adding that the project stretched the limits of supercomputing in a manner that is relatable to the American people.

The cardiac modeling work was performed during the system’s “shakedown period” – the set-up and testing phase – and the team had to hurry to finish in the allotted time span. Once Sequoia becomes classified, it’s unclear whether it will still be available to run Cardioid and other unclassified programs, although access will certainly be more difficult since the machine’s principal mission is running nuclear weapons codes.

Sequoia is an integral part of the NNSA’s Advanced Simulation and Computing (ASC) program, which is run by partner organizations LLNL, Los Alamos National Laboratory and Sandia National Laboratories. With 96 racks, 98,304 compute nodes, 1.6 million cores, and 1.6 petabytes of memory, Sequoia will help the NNSA fulfill its mission to “maintain and enhance the safety, security, reliability and performance of the U.S. nuclear weapons stockpile without nuclear testing.”
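
For a sense of scale, the system figures above can be broken down per rack and per node. This is a rough sketch using only the rounded numbers quoted in the article and assuming 1 petabyte = 10^15 bytes, so the per-node results are approximate.

```python
RACKS = 96
NODES = 98_304
CORES = 1.6e6          # "1.6 million cores" (rounded in the article)
MEMORY_BYTES = 1.6e15  # "1.6 petabytes", assuming 1 PB = 10**15 bytes

nodes_per_rack = NODES / RACKS
cores_per_node = CORES / NODES
memory_per_node_gb = MEMORY_BYTES / NODES / 1e9

print(f"Nodes per rack:  {nodes_per_rack:.0f}")
print(f"Cores per node:  about {cores_per_node:.1f}")
print(f"Memory per node: about {memory_per_node_gb:.1f} GB")
```

The rounded totals point to roughly 1,000 nodes per rack, with about 16 cores and 16 GB of memory per node.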

The Cardioid simulation has been named a finalist in the 2012 Gordon Bell Prize competition, awarded each year to recognize supercomputing’s crowning achievements. Research partners Streitz, Richards and Mirin will reveal their results at the Supercomputing Conference in Salt Lake City, Utah, on November 13.