On Monday, AMD announced it is adding ARM-based Opterons to its server portfolio, the first non-x86 Opterons in the company’s history. The new processors, due out in 2014, will use 64-bit ARM SoCs on top of its SeaMicro Freedom Fabric technology, and will be aimed at the datacenter and cloud space.

At the Monday morning press briefing, CEO Rory Read examined the backdrop for this bold move. “There’s no doubt that the cloud changes everything,” he said. “The cloud truly is the killer app that’s unlocking the future; it’s driving the fastest level of growth across the industry. Over the last decade, we’ve seen an annual increase of about 33 percent in CAPEX spending in the datacenter on the large mega-datacenter cloud services, and that’s only going to continue to evolve and expand.”

The modern computing landscape is changing. The growing interest in energy-efficient microservers marks an important shift. For the past two decades, x86 has been the only (commodity) option for mainstream server computing, but the emergence of microservers gives ARM a unique opportunity to gain a foothold in the market.

Dell and HP both have a microserver play, and last month we saw Penguin Computing jump on the bandwagon. Intel is attempting to address the market with tweaked variants of its Atom and Xeon processors, but AMD will be the first chipmaker to offer both 64-bit ARM and x86 server processors.

It’s the 64-bit aspect that will enable the ARM architecture to compete with x86 in the datacenter realm; 32-bit chips have a limited address reach (4 GB), which is problematic for server-sized datasets. Although 64-bit implementations of ARM aren’t expected until 2014, chipmakers are beginning to lay out their roadmaps today. Besides AMD, Calxeda and NVIDIA have also announced intentions to take 64-bit ARM silicon into the datacenter.
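The 4 GB figure follows directly from the pointer width. As a rough illustration, the sketch below computes how many bytes a flat address of a given width can reach. It is simplified arithmetic, not a description of any particular chip: it ignores physical-address extensions on 32-bit parts and the fact that real 64-bit processors typically implement fewer than 64 address bits. The function name `addressable_bytes` is made up for this example.

```python
# Illustrative arithmetic only: bytes reachable with an n-bit flat address.
# Assumes one address per byte and no address-extension schemes.

GIB = 2 ** 30  # one gibibyte, in bytes


def addressable_bytes(address_bits: int) -> int:
    """Return the number of distinct byte addresses for a given pointer width."""
    return 2 ** address_bits


reach_32 = addressable_bytes(32)
reach_64 = addressable_bytes(64)

print(f"32-bit reach: {reach_32 // GIB} GiB")
print(f"64-bit reach: {reach_64 // GIB:,} GiB")
```

Each additional address bit doubles the reach, so the jump from 32 to 64 bits is a factor of 2^32, far more than any server dataset requires today.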

Until now, making a datacenter more efficient meant increasing CPU horsepower or upping the core count. With the rise of cloud, mobile and Web computing, there are more bytes streaming into the datacenter. However, a lot of these Web-era workloads are highly parallelizable. ARM CPUs, which grew up inside mobile devices, are particularly efficient at these types of slice-and-dice workloads and have a power profile that is about one-third that of their x86 cousins.

Dr. Lisa Su, AMD senior vice president and general manager, talked about the product plans in more detail at the company’s ARM press event. “The biggest change in the datacenter is there is no one size fits all,” she emphasized.

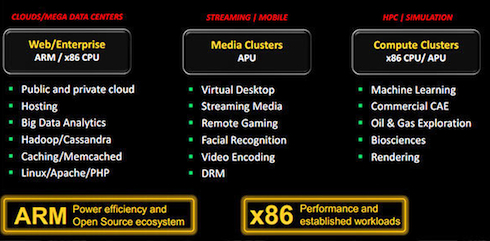

AMD is positioning itself to offer a broad menu of choices to meet different kinds of datacenter workloads, and has apparently come to the conclusion that some of them are better served by ARM rather than x86. In particular, the company thinks ARM-based servers will be a good fit for clouds and mega-datacenters, but it’s still targeting its more powerful x86-based Opterons for the heavier lifting, like rendering, machine learning and HPC applications.

Even though AMD initially plans to direct its ARM portfolio to the generic cloud and Web service space, customers may get a bit more creative. For example, ARM could be the way to go for some embarrassingly parallel HPC applications like genomic analysis, which needs neither scads of floating-point horsepower nor strong single-threaded performance. AMD would probably rather sell higher-end x86 Opterons to such users, but the market will do what it wants. And given the up-front and power costs of large clusters, HPC users can be particularly opportunistic.

Aside from adopting the ARM architecture, AMD will also incorporate its SeaMicro Freedom Fabric into the chips. This is the secret sauce that the company claims will set its parts apart from competing ARM SoCs. The fabric optimizes system performance by offering a high-bandwidth, low-latency system interconnect that keeps all the CPUs well fed with data.

While the ARM-based server design has a lot of promise, including higher compute per dollar and compute per watt, changing architectures can’t be done overnight. From the software perspective, the biggest difference between x86 and ARM parts is the instruction set. At the very least, applications and operating systems must be recompiled to support the new platform. Meanwhile software that is closer to the metal, like compilers, will have to be tweaked or, in some cases, developed from scratch.

So while it may seem premature to announce a product in 2012 that won’t be ready until 2014, it will take at least that long to bring the software up to speed. For its part, AMD will be busy completing the chip design and lining up OEM partners. Indeed, ecosystem development has been the main thrust of all the early microserver announcements.

The day after AMD dropped the big news, ARM unveiled its 64-bit Cortex-A50 processor series based upon the ARMv8 architecture, which it introduced a year ago. The implementations include the Cortex-A53, ARM’s most energy-efficient yet, and the Cortex-A57, the more performant version. According to the company, 64-bit execution will enable “new opportunities in networking, server and high-performance computing.” In addition to AMD, Broadcom, Calxeda, HiSilicon, Samsung and STMicroelectronics are partnering with ARM to license the new processor series.

AMD is obviously betting on its new ARM-SeaMicro roadmap to help regain market share from its nemesis on the server front. AMD pioneered 64-bit x86 computing in 2003, and for a while, claimed a sizeable chunk of the business. After Intel followed suit with its 64-bit x86 offerings, AMD saw its market share steadily erode. Now that the chipmaker has, once again, decided to offer something completely different from Intel, we’ll see if history repeats itself.
