Today’s notion of safe passwords may soon be a thing of the past. Thanks to cheaper hardware, cloud software, and free password cracking programs, it’s easier than ever to hack these digital keys.

Security researcher Jeremi Gosney has taken this craft to a new level. At the Passwords^12 Conference held this week in Oslo, Norway, Gosney’s custom-built GPU cluster tore through 348 billion password hashes per second. His story was covered in the Security Ledger.

The system sports five 4U servers equipped with 25 AMD Radeon-based GPUs connected via SDR InfiniBand. To help keep costs down, Gosney purchased many of his GPUs (not just the ones in this system) from retired bitcoin miners, and his team also uses spare GPU cycles to mine for bitcoins.

For the demonstration, the researcher used the OpenCL framework over a Virtual OpenCL (VCL) platform to run the Hashcat password cracking program. Against this combination of hardware and software, passwords protected with weaker hashing algorithms are basically obsolete.

A cluster that can chew through 348 billion NT LAN Manager (NTLM) password hashes every second makes even the most secure passwords vulnerable to attacks. In real-world terms, a 14-character Windows XP password hashed using LAN Manager (LM) would take just six minutes to break, while more secure NTLM passwords take significantly longer to crack, around 5.5 hours for an 8-character password.
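The 5.5-hour figure can be sanity-checked with back-of-the-envelope arithmetic: divide the number of possible passwords by the guess rate. The sketch below assumes 95 printable ASCII characters per position and passwords of exactly eight characters; the article does not state which character set was used, so treat both as assumptions.

```python
# Rough worst-case brute-force time: keyspace size divided by guess rate.
# Assumption (not from the article): 95 printable ASCII characters per position.

def exhaust_seconds(charset_size: int, length: int, guesses_per_second: float) -> float:
    """Seconds needed to try every candidate of exactly `length` characters."""
    return charset_size ** length / guesses_per_second

NTLM_RATE = 348e9  # guesses per second, the cluster's reported NTLM speed

seconds = exhaust_seconds(95, 8, NTLM_RATE)
hours = seconds / 3600
print(f"{hours:.1f} hours to exhaust all 8-character candidates")
```

Under those assumptions the result lands at a little over five hours, in line with the quoted estimate.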

Such evidence leads Per Thorsheim, organizer of the Passwords^12 Conference, to conclude that Windows XP passwords aren’t good enough anymore.

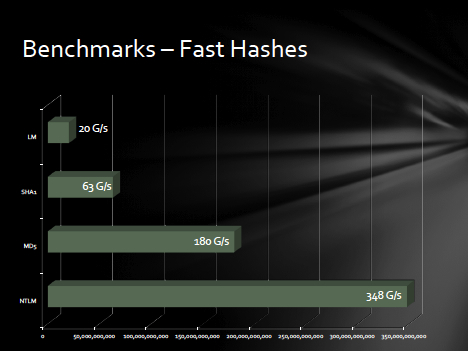

Other password hashing algorithms were tested with mixed, yet still impressive, returns. Fast hashes MD5 and SHA1 allowed 180 billion and 63 billion tries per second, respectively. Slow hashes were tougher to crack: bcrypt (05) and sha512crypt yielded 71,000 and 364,000 attempts per second, respectively, and md5crypt permitted 77 million per second.
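To see what these rates mean in practice, the following sketch estimates how long the cluster would need to exhaust the same keyspace under each algorithm. The rates are the ones reported above; the keyspace (eight characters drawn from 95 printable ASCII symbols) is an illustrative assumption, not a figure from the demonstration.

```python
# Reported guess rates (per second) for the 25-GPU cluster.
RATES = {
    "NTLM": 348e9,
    "MD5": 180e9,
    "SHA1": 63e9,
    "md5crypt": 77e6,
    "sha512crypt": 364_000,
    "bcrypt (05)": 71_000,
}

KEYSPACE = 95 ** 8  # assumption: 8 characters, 95 printable ASCII symbols
SECONDS_PER_YEAR = 365 * 24 * 3600

def time_to_exhaust(rate: float) -> float:
    """Seconds to try every candidate in KEYSPACE at `rate` guesses per second."""
    return KEYSPACE / rate

for name, rate in RATES.items():
    secs = time_to_exhaust(rate)
    print(f"{name:12s} {secs / 3600:16.1f} hours  ({secs / SECONDS_PER_YEAR:10.2f} years)")
```

With these inputs the fast hashes fall within hours, while the same search against bcrypt would run for thousands of years, which is why the choice of hashing algorithm matters more than raw cluster speed.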

While these statistics are for so-called brute-force attacks, Gosney points out that he and his cohorts employ dozens of more sophisticated tricks that fare much better for user-selected password recovery.

Gosney’s setup is not intended for online or “live” attacks, where the targeted system generally limits the number of login attempts. Here, the likely use case is offline attacks waged against a collection of stolen password hashes, allowing the hackers, in effect, to guess as many times as necessary to gain entry.
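A minimal sketch of the offline scenario, on a toy scale: the attacker holds a set of hashes and checks candidate passwords against it with no rate limit. It uses unsalted SHA1 from Python's standard library as a stand-in for a fast hash; the "leaked" set and the wordlist are made up for illustration, and real tools such as Hashcat work very differently under the hood.

```python
import hashlib

def sha1_hex(password: str) -> str:
    """Unsalted SHA1 digest of a password, as a hex string."""
    return hashlib.sha1(password.encode("utf-8")).hexdigest()

def offline_attack(stolen_hashes: set, candidates) -> dict:
    """Return {hash: plaintext} for every stolen hash matched by a candidate."""
    cracked = {}
    for guess in candidates:
        digest = sha1_hex(guess)
        if digest in stolen_hashes:  # no lockout: every guess is just a local lookup
            cracked[digest] = guess
    return cracked

# Hypothetical leak: three accounts, two of them with very common passwords.
leaked = {sha1_hex(p) for p in ["password", "dragon", "c0rrect-h0rse-battery"]}
wordlist = ["123456", "password", "monkey", "letmein", "dragon"]

cracked = offline_attack(leaked, wordlist)
print(f"recovered {len(cracked)} of {len(leaked)}: {sorted(cracked.values())}")
# prints: recovered 2 of 3: ['dragon', 'password']
```

Nothing in the loop depends on the target system being reachable, which is what separates this from an online attack throttled by login limits.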

Gosney has been working on clustering approaches for the last four or five years, and already has an established track record. Earlier this year, after 6.4 million LinkedIn password hashes were leaked, Gosney and a partner successfully cracked nearly 95 percent of them and published an analysis of their findings.

Originally, Gosney’s group just wanted to build the biggest GPU rigs they could, putting as many GPUs into a single server as possible so that they didn’t need to worry about clustering or distributing load.

But the idea of scaling via clusters was enticing. After an unsuccessful foray into VMware clustering, Gosney’s group happened across Virtual OpenCL (VCL). A free cluster platform distributed by the MOSIX group, VCL allows OpenCL applications to run on many GPUs in a cluster, as if all the GPUs are on the user’s computer.

Gosney first had to convince MOSIX co-creator Professor Amnon Barak that he was not going to “turn the world into a giant botnet.” But he soon received the professor’s blessing and his assistance in getting the program to work with Hashcat.

Discovering Virtual OpenCL (VCL) marked a turning point: “It just did what I wanted,” Gosney shared with Security Ledger. “I always had these dreams of doing very simple and very manageable grid/cloud computing. It really is the marriage of two absolutely fantastic programs, which allows us to do unprecedented things.”

With the load balancing power of VCL, Gosney and his team can scale the application beyond the 25-GPU system to support upwards of 128 AMD GPUs.
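Brute-force search scales across devices because the keyspace can be cut into independent slices. The sketch below shows one simple way to do that: number every candidate, then hand each worker a contiguous range of numbers. It is an illustration only and does not reflect how VCL or Hashcat actually schedule work; the lowercase-only charset, the four-character length, and the helper names are all assumptions chosen to keep the example small.

```python
import string

CHARSET = string.ascii_lowercase  # assumption: tiny charset for demonstration

def candidate_at(index: int, length: int, charset: str = CHARSET) -> str:
    """Map an integer in [0, len(charset)**length) to a unique candidate string."""
    chars = []
    for _ in range(length):
        index, digit = divmod(index, len(charset))
        chars.append(charset[digit])
    return "".join(reversed(chars))

def split_keyspace(total: int, workers: int) -> list:
    """Divide [0, total) into `workers` contiguous, near-equal ranges."""
    base, extra = divmod(total, workers)
    ranges, start = [], 0
    for w in range(workers):
        size = base + (1 if w < extra else 0)  # spread the remainder over the first workers
        ranges.append(range(start, start + size))
        start += size
    return ranges

LENGTH = 4
total = len(CHARSET) ** LENGTH
chunks = split_keyspace(total, 25)  # one slice per GPU in the 25-GPU system

for gpu, chunk in enumerate(chunks[:3]):
    first = candidate_at(chunk.start, LENGTH)
    last = candidate_at(chunk.stop - 1, LENGTH)
    print(f"GPU {gpu}: {len(chunk)} candidates, {first} .. {last}")
```

Because no slice depends on another, adding GPUs shortens the search roughly in proportion, which is the property a platform like VCL lets the cluster exploit.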

Code breaking has made huge strides in the last few years due to the combination of cheap computing power and clustering/grid tools. However, cheap is still relative. Gosney has put a lot of time and money into this project and hopes to recoup some of this investment by either renting out time on the system or by offering a paid password recovery and domain auditing service.

For those who hope to never need the services of a password recovery expert, the annual SplashData list of the worst passwords offers some practical advice for creating secure digital keys. The most common (i.e., worst) password for 2012 is once again “password,” followed by “123456,” with “monkey,” “letmein” and “dragon” all appearing in the top 10. Want to test the relative strength of your access codes? Check out How Secure Is My Password? But just to be safe, you might not want to enter your actual passwords.