Ask any IT engineering organization and they will tell you that software has transformed their business, from running simulations more efficiently to automating design verification processes. As a result, organizations are more competitive, delivering products to market faster and at lower cost.

On the flip side, the licenses for engineering tools have increased sharply in price while IT budgets remain flat or are even reduced. Despite this, IT departments often fail to adequately track and manage software licenses. William Bryce, Vice President of Products at Univa, said, “Companies spend heavily on expensive software tools without really understanding how to ensure that these critical assets are being optimally used. Consequently, an unacceptable proportion of that expenditure could be wasted.”

Additionally, for organizations that have development centers around the world, increasing the visibility and overall efficiency of license use is critical. Many organizations spend limited budget on licenses for regional use that are then not fully used across the enterprise. Application software licenses need to be treated as an integral operational component of the business: utilization approaches 100 percent, licenses are assigned to the most critical projects, and tokens that are available at remote sites are used seamlessly by another group or region to meet business objectives.

There are, however, IT departments that realize that the spiraling cost of software licenses can be controlled by increasing efficiency and implementing license management. Some have even cobbled together homegrown solutions. However, these solutions do not scale to meet the needs of a complex and growing organization. Furthermore, they take valuable IT resources away from managing core business needs.

Univa, a leading datacenter automation company, has developed a license management solution, License Orchestrator, that enables IT departments to maximize their current license usage while ensuring budgets are spent on the most business-critical licenses. With it, IT departments can easily share licenses between geographically dispersed sites, so the organization as a whole saves money by purchasing fewer licenses. License Orchestrator also provides a competitive advantage by ensuring licenses are available for critical projects: it has the intelligence to obtain a license from a non-critical project or a remote site to meet a specific business goal. Finally, it gives IT departments accurate data on license usage, making it possible to bill costs back to specific groups and providing the visibility needed to ensure budget is spent on the most needed licenses.

Many organizations have multiple license servers at geographically separate sites, which can make it quite difficult to obtain a single view of all license assets across the organization. Univa License Orchestrator combines the information from multiple Flexera FlexNet Publisher instances into a single software asset view for the organization, greatly simplifying management, measurement and reporting.

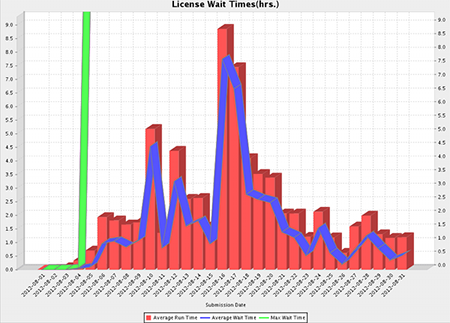
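To make the idea of a single asset view concrete, here is a minimal sketch of consolidating per-server license counts. It is not how License Orchestrator is implemented; the site names, feature names, counts and the `consolidate` function are all hypothetical, and the snapshots are assumed to have already been collected from each license server.

```python
from collections import defaultdict

# Hypothetical per-server snapshots: {feature: (licenses_issued, licenses_in_use)}.
# Site names, feature names and counts are invented for illustration.
snapshots = {
    "austin": {"simulator": (100, 80), "synthesis": (20, 5)},
    "munich": {"simulator": (50, 10)},
    "bangalore": {"synthesis": (30, 30), "verifier": (10, 2)},
}


def consolidate(snapshots):
    """Sum issued and in-use counts per feature across all license servers."""
    totals = defaultdict(lambda: {"issued": 0, "in_use": 0})
    for features in snapshots.values():
        for feature, (issued, in_use) in features.items():
            totals[feature]["issued"] += issued
            totals[feature]["in_use"] += in_use
    return dict(totals)


view = consolidate(snapshots)
for feature, counts in sorted(view.items()):
    free = counts["issued"] - counts["in_use"]
    print(f"{feature}: {counts['in_use']}/{counts['issued']} in use, {free} free")
```

Even in this toy form, the consolidated view shows something no single site can see: a feature that is exhausted in one region may still have free licenses elsewhere.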

Once you have a single view into all software license assets in an organization, the next obvious step is to track the usage of licenses across multiple datacenters, provide reports on usage and, if necessary, produce a chargeback report for license usage. Univa License Orchestrator is integrated with UniSight, Univa’s reporting and analytics product. The reports provide a single management view of all licenses used in Univa Grid Engine clusters. On a daily, weekly or monthly basis, administrators and management can log in to UniSight, generate ad hoc reports or create custom views of license usage data as needed.
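A chargeback report boils down to multiplying each group's license usage by an internal rate. The sketch below illustrates that calculation only; it does not reflect UniSight's actual data model, and the record layout, group names and per-hour rates are assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical usage records: (group, feature, license_hours).
usage = [
    ("cpu-design", "simulator", 120.0),
    ("cpu-design", "synthesis", 30.0),
    ("gpu-design", "simulator", 60.0),
]

# Hypothetical internal rates in dollars per license-hour.
rates = {"simulator": 2.0, "synthesis": 5.0}


def chargeback(usage, rates):
    """Return the total cost billed to each group."""
    bill = defaultdict(float)
    for group, feature, hours in usage:
        bill[group] += hours * rates[feature]
    return dict(bill)


report = chargeback(usage, rates)
for group, cost in sorted(report.items()):
    print(f"{group}: ${cost:,.2f}")
```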

Ensuring optimal usage of software licenses isn’t trivial. The definition of ‘optimal’ changes with the business objectives of an organization. Which project, group or user is most important this week? Within a company, the most important project can change from day to day and possibly from hour to hour. Sophisticated scheduling policies can be defined in Univa License Orchestrator to map the current business objectives to the actual license usage by the users in an organization. These policies can be changed as needed, and License Orchestrator will automatically apply the changes to all jobs or applications that request licenses in Univa Grid Engine clusters.
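As a rough illustration of policy-driven license assignment, the sketch below ranks pending requests by a per-project priority and grants the available licenses in that order. It is a simplified stand-in, not the product's scheduling algorithm: the `Request` type, the `grant` function, the project names and the priority values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Request:
    job_id: str
    project: str
    submitted: int  # submission order; lower means earlier


def grant(requests, policy, available):
    """Return the job ids that receive a license.

    Requests from higher-priority projects are served first;
    ties are broken by submission order.
    """
    ranked = sorted(requests, key=lambda r: (-policy.get(r.project, 0), r.submitted))
    return [r.job_id for r in ranked[:available]]


pending = [
    Request("job-1", "legacy", 1),
    Request("job-2", "tapeout", 2),
    Request("job-3", "research", 3),
]

# Hypothetical policy: a larger number means a more important project.
policy = {"tapeout": 100, "research": 50, "legacy": 10}
before = grant(pending, policy, available=2)

# The business objective changes, so the same pending jobs are ranked again.
policy["legacy"] = 200
after = grant(pending, policy, available=2)

print(before, after)
```

The point of the example is that the pending requests never change; only the policy does, and the next ranking pass picks up the new priorities.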

When a job is submitted by a user or via a commercial software application into a Univa Grid Engine cluster, an application license (or even multiple licenses) is needed to run the job on a server. This process is managed through tight coordination between Univa Grid Engine and License Orchestrator, and is transparent to the user. All a user really cares about is ‘run my job as soon as you can with the correct licenses’. The system takes care of the rest. Behind the scenes, License Orchestrator synchronizes the scheduling of the license with the scheduling of the job.

In certain situations, IT departments require more control over how licenses are shared across datacenters and over which license server an application uses when its job runs in the cluster. Univa License Orchestrator provides this flexibility. You can choose the specific license server you want to request a license from, or you can let the system automatically try the local license server first and then a remote license server if needed and available. Combining automated and administrator-preferred selection of license servers allows end users to submit their jobs and ‘just forget about the license’, since Univa’s system will take care of things automatically.
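The two selection modes described above can be sketched as follows. This is an assumption-laden illustration rather than License Orchestrator's interface: `choose_server`, its parameters and the availability snapshot are hypothetical, and a pinned server that has no free license simply makes the job wait.

```python
def choose_server(feature, free, local, remotes, pinned=None):
    """Pick a license server for `feature`.

    free   -- {server: {feature: free_count}}, a hypothetical availability snapshot
    pinned -- server explicitly chosen by the administrator, if any
    Returns the server name, or None if the job has to wait.
    """
    def has_free(server):
        return free.get(server, {}).get(feature, 0) > 0

    if pinned is not None:
        # Administrator preference: use only the named server.
        return pinned if has_free(pinned) else None

    # Automatic mode: local server first, then remote servers in order.
    for server in [local, *remotes]:
        if has_free(server):
            return server
    return None


# Invented site names and counts for illustration.
free = {"austin": {"simulator": 0}, "munich": {"simulator": 3}}

auto_choice = choose_server("simulator", free, local="austin", remotes=["munich"])
pinned_choice = choose_server("simulator", free, local="austin", remotes=["munich"], pinned="austin")
print(auto_choice, pinned_choice)
```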

One of the unique challenges that Univa License Orchestrator addresses is seamlessly collecting all the license information from multiple Flexera FlexNet Publisher instances in real time, ensuring a consistent view of all license assets from a single management console. Providing a consolidated view is not enough, however. License Orchestrator must also stay synchronized with the state of all Univa Grid Engine clusters in an organization, so that when an end user submits a job and requests a license, the ‘correct’ or ‘highest priority’ user gets the license first according to the policies and limits defined in Univa Grid Engine and Univa License Orchestrator. This becomes even more challenging when the policies and limits are changed dynamically to reflect new high-priority projects or business objectives: Univa License Orchestrator then has to automatically reprioritize all jobs waiting for licenses in all clusters that are registered with it.

Univa knows that organizations can really gain control of licensing expenditure only by optimizing software license usage, reconciling product use rights with how employees are actually using the software. Furthermore, it is critical for enterprises to obtain better visibility into license usage across the organization to eliminate wasteful purchasing. For example, consider an organization with 4,000 licenses, each costing $2,500. Without visibility into license usage, an IT department could inaccurately budget for a 10% increase at a cost of $1 million. With Univa’s License Orchestrator, an IT department has a central view of license usage, empowering it to better forecast license expenditure and match license usage to business-critical projects, improving the company’s bottom line.
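The arithmetic behind that example is easy to check. The short calculation below uses only the figures given above; the variable names are just for illustration.

```python
licenses = 4_000
unit_cost = 2_500   # dollars per license
increase_pct = 10   # budgeted growth in license count, in percent

current_spend = licenses * unit_cost
extra_licenses = licenses * increase_pct // 100
extra_spend = extra_licenses * unit_cost

print(f"current spend: ${current_spend:,}")
print(f"{increase_pct}% increase: {extra_licenses} licenses, ${extra_spend:,}")
```

In other words, a 10% increase means 400 additional licenses on top of an existing $10 million investment, which is where the $1 million figure comes from.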

To learn more about best practices in managing application licenses or to get started with a free trial, contact Univa today at [email protected]

For more information about Univa’s innovative datacenter automation products, including License Orchestrator, please visit http://www.univa.com