In this provocative talk from TEDGlobal, MIT Media Lab professor Kevin Slavin paints a picture of a world that is increasingly shaped by algorithms. Slavin explores how these complex computer programs evolved from their roots in espionage to become the dominant force in financial markets. But as the 2010 flash crash showed, allowing algorithms to replace human decision-making can have unintended and even dire consequences.

Slavin’s tale is about contemporary math, not just financial math. He asks the audience to rethink the role of math as it shifts from something we extract and derive from the world to something that actually starts to shape it. Consider that some 2,000 physicists now work on Wall Street. Algorithmic trading evolved in part because institutional traders needed to move a million shares of something through the market without revealing the size of the trade. So they looked for a way to break that big order into a million little transactions, and they used algorithms to do it.
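To make the order-splitting idea concrete, here is a minimal Python sketch. It is not any trader's actual method: the function name `slice_order`, the even split, and the random `jitter` that varies the slice sizes are all illustrative assumptions. It only shows how one large parent order can be carved into many smaller child orders that still add up to the original total.

```python
import random
from typing import List, Optional


def slice_order(total_shares: int, num_slices: int,
                jitter: float = 0.2, seed: Optional[int] = None) -> List[int]:
    """Split a large parent order into smaller child orders (hypothetical sketch).

    Each slice is roughly total_shares / num_slices, scaled by a random
    factor within +/- jitter so the slices are not all the same size.
    The returned sizes always sum to exactly total_shares.
    """
    if total_shares < 0 or num_slices <= 0:
        raise ValueError("need total_shares >= 0 and num_slices > 0")
    if not 0 <= jitter < 1:
        raise ValueError("jitter must be in [0, 1)")
    rng = random.Random(seed)
    # Random relative weights, e.g. between 0.8 and 1.2 when jitter = 0.2.
    weights = [1 + rng.uniform(-jitter, jitter) for _ in range(num_slices)]
    scale = total_shares / sum(weights)
    # Round each slice down; this can leave a few shares unassigned.
    sizes = [int(w * scale) for w in weights]
    # Hand the leftover shares out one at a time, starting with the first slice.
    remainder = total_shares - sum(sizes)
    for i in range(remainder):
        sizes[i % num_slices] += 1
    return sizes


child_orders = slice_order(1_000_000, num_slices=1_000, seed=42)
print(len(child_orders), sum(child_orders))  # 1000 1000000
print(child_orders[:5])
```

Real execution algorithms also decide when and where to send each slice; this sketch covers only the sizing step.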

“The magic and the horror of that,” says Slavin, “is that the same math that is used to break up the big thing into a million little things can be used to find a million little things and sew them back together to figure out what’s actually happening in the market.”

Less mathematically inclined readers can imagine this scenario as a bunch of algorithms programmed to hide and a bunch of algorithms programmed to find them and act. “This represents 70 percent of the United States stock market, 70 percent of the operating system formerly known as your pension, your mortgage,” says Slavin, drawing pained chuckles from the audience.
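The "seek" side can be illustrated just as simply. The sketch below is a deliberately naive, hypothetical detector (`Trade`, `find_hidden_orders`, and both thresholds are invented for illustration). It assumes a hidden order was cut into identically sized pieces sent close together in time, chains those pieces, and sums them back into one suspected large order.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Trade:
    time_ms: int  # hypothetical timestamp, in milliseconds
    side: str     # "buy" or "sell"
    size: int     # number of shares


def find_hidden_orders(trades: List[Trade], min_count: int = 5,
                       max_gap_ms: int = 1_000) -> List[Tuple[str, int, int, int]]:
    """Chain same-side, same-size trades that arrive within max_gap_ms of each
    other; report each chain of at least min_count trades as one suspected
    large order: (side, total_shares, start_ms, end_ms)."""
    buckets: Dict[Tuple[str, int], List[Trade]] = defaultdict(list)
    for t in sorted(trades, key=lambda t: t.time_ms):
        buckets[(t.side, t.size)].append(t)

    suspects = []
    for (side, size), group in buckets.items():
        runs = [[group[0]]]
        for prev, cur in zip(group, group[1:]):
            if cur.time_ms - prev.time_ms <= max_gap_ms:
                runs[-1].append(cur)  # close in time: plausibly the same order
            else:
                runs.append([cur])    # gap too long: start a new chain
        for run in runs:
            if len(run) >= min_count:
                # "Sew" the little pieces back together into one big order.
                suspects.append((side, size * len(run), run[0].time_ms, run[-1].time_ms))
    return sorted(suspects, key=lambda s: s[2])


# 10 buys of 100 shares, one every 200 ms, mixed with unrelated sells.
tape = [Trade(t, "buy", 100) for t in range(0, 2_000, 200)]
tape += [Trade(150, "sell", 37), Trade(900, "sell", 250), Trade(5_000, "sell", 37)]
print(find_hidden_orders(tape))  # [('buy', 1000, 0, 1800)]
```

A hiding algorithm that randomizes its slice sizes and timing would slip past this version, which is the cat-and-mouse game Slavin describes.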

What could go wrong?

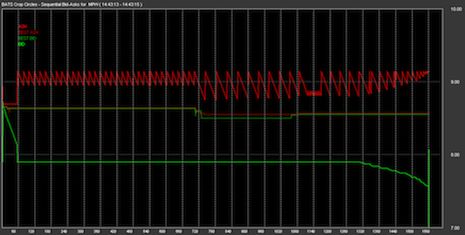

What could go wrong is that on May 6, 2010, 9 percent of the stock market disappeared in five minutes. This was the flash crash of 2:45, the biggest one-day point decline in the history of the stock market. To this day, no one can agree on what caused it. No one ordered it; people simply saw the results of something they had not asked for.

“We’re writing these things that we can no longer read…and we’ve lost the sense of what’s actually happening in this world that we’ve made,” cautions Slavin.

When scientists try to make sense of uncharted territory, they start by naming and describing what they find. A company called Nanex has begun this work for the 21st-century stock market: it has identified some of these algorithms and given them names like the Knife, Twilight, the Carnival, and the Boston Shuffler.

These kinds of algorithms aren’t just operating in the stock market; they are also being used to set prices in online marketplaces like eBay and Amazon. On Amazon, pricing algorithms locked in a loop without human supervision drove a hundred-dollar book about fly genetics to $23.6 million (plus shipping and handling).
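The runaway price is easy to reproduce with a toy model. In this hypothetical Python sketch, two sellers reprice against each other once per round: seller A lists slightly below seller B, and seller B lists well above seller A. The multipliers (`undercut=0.998`, `markup=1.27`) are illustrative assumptions, not the values the real sellers used. Because their product is greater than 1, every round ratchets both prices upward, and nothing in the loop checks whether the result makes sense.

```python
from typing import List, Tuple


def simulate_price_war(start_price: float, undercut: float, markup: float,
                       rounds: int) -> List[Tuple[float, float]]:
    """Two hypothetical repricing bots react to each other once per round.

    Seller A lists at undercut * B's price; seller B then lists at
    markup * A's new price. Returns (price_a, price_b) after each round.
    """
    price_b = start_price
    history = []
    for _ in range(rounds):
        price_a = round(price_b * undercut, 2)  # A: "just below the competition"
        price_b = round(price_a * markup, 2)    # B: "well above the competition"
        history.append((price_a, price_b))
    return history


history = simulate_price_war(100.0, undercut=0.998, markup=1.27, rounds=60)
first = next(i for i, (_, b) in enumerate(history, start=1) if b > 23_600_000)
print(f"B's price passes $23.6 million after {first} rounds")
```

Starting from a $100 list price, B's price passes $23.6 million in roughly fifty rounds. A single human sanity check, such as a price ceiling, would have broken the cycle.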

Netflix uses an algorithm called “Pragmatic Chaos” to power its movie recommendations; according to Slavin, it is responsible for 60 percent of the movies rented. Another company uses “story algorithms” to predict which kinds of movies will succeed before they are even written. Slavin calls this the physics of culture.

Algorithms are even affecting architecture. A newer style of elevator, called destination control, uses a bin-packing algorithm to optimize elevator traffic. Riders press the button for their destination floor before stepping into the car. The initial data show that people are not comfortable riding in an elevator where the only button inside is “stop.”
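Slavin does not describe the dispatch logic in detail, so what follows is only a hypothetical illustration of the bin-packing idea, using the classic first-fit decreasing heuristic. Riders are grouped by destination floor, the largest groups are placed first, and each group goes into the first car that still has room. The car `capacity` and the function name `assign_cars` are assumptions; a real destination control system also weighs travel time, car positions, and the number of stops.

```python
from collections import Counter
from typing import Dict, Iterable, List


def assign_cars(destinations: Iterable[int], capacity: int) -> List[Dict]:
    """First-fit decreasing bin packing of waiting riders into elevator cars.

    Riders are grouped by destination floor (a group larger than one car is
    split), the largest groups are placed first, and each group goes into
    the first car with enough room left. Returns one dict per car:
    {"load": riders aboard, "stops": {floor: riders for that floor}}.
    """
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    # Items to pack: (group size, floor), never larger than one car.
    items = []
    for floor, count in Counter(destinations).items():
        while count > 0:
            chunk = min(count, capacity)
            items.append((chunk, floor))
            count -= chunk
    items.sort(key=lambda item: (-item[0], item[1]))  # largest groups first

    cars: List[Dict] = []
    for size, floor in items:
        for car in cars:
            if car["load"] + size <= capacity:  # first car that still fits
                car["load"] += size
                car["stops"][floor] = car["stops"].get(floor, 0) + size
                break
        else:  # no existing car has room: dispatch another one
            cars.append({"load": size, "stops": {floor: size}})
    return cars


lobby = [5, 5, 5, 5, 9, 9, 9, 12, 12, 3]  # destination floors keyed in at the lobby
for n, car in enumerate(assign_cars(lobby, capacity=6), start=1):
    print(f"car {n}: {car['load']} riders, stops {sorted(car['stops'])}")
# car 1: 6 riders, stops [5, 12]
# car 2: 4 riders, stops [3, 9]
```

Keeping riders who share a floor in the same car is what lets each car make fewer stops.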

Algorithmic trading, especially high-frequency trading, is also driving the repurposing of building space near major financial centers, where algorithms have become VIP tenants. The big traders want to be as close to the action as possible because in high-frequency trading, milliseconds matter. Buildings near the stock exchange, like the Carrier Hotel in Manhattan, are being hollowed out to make room for data centers.

Telecommunications provider Spread Networks dynamited through mountains to dig an 825-mile trench for a fiber-optic cable between Chicago and New York City, all to send a signal nearly 40 times faster than the click of a mouse. Spread’s flagship Ultra Low Latency Chicago-New York Dark Fiber service now operates with a round-trip latency of 12.98 milliseconds.

“When you think about this, that we’re running through the United States with dynamite and rock saws so that an algorithm can close the deal 3 microseconds faster all for a communications framework that no human will ever know, that’s a kind of manifest destiny – and we’ll always look for a new frontier,” observes Slavin.

Acceleration and automation go hand in hand, but there is danger in making decisions based solely on computational insight. When an algorithm on Amazon goes out of bounds, the result is a humorous tale about an overpriced biology textbook; when the market crashes, lives are affected around the world. The flash crash could have served as a warning, but little has changed since. The vast majority of stock trades are now executed in less than half a millionth of a second, more than a million times faster than the human mind can make a decision.

As Slavin says: “It’s a bright future if you’re an algorithm.”