Founded in 2005, Terascala has focused on the simulation, analysis and modeling application needs of a number of HPC-oriented industries, including financial services, manufacturing, and the life sciences, as well as several research institutions.

On that “traditional HPC” front, the company has secured some wins, including the University of Florida’s HiPerGator system through its strategic partnership with Dell and, more recently, the University of North Texas, which just installed a Dell Terascala solution as part of its Talon 2.0 system.

According to the company’s Vice President of Engineering, Peter Piela, Terascala’s growth still stems from the expected HPC quarters, but the company is pushing further into large-scale enterprise. Doing so, however, means rethinking its approach to Lustre-driven high performance storage, particularly from the manageability, reliability, availability and serviceability angles.

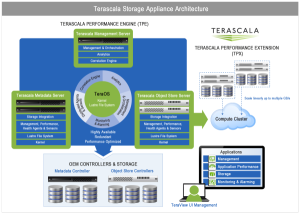

We spoke with Piela at length about the future of Lustre-driven storage in a growing set of high performance and “big data” environments following the company’s recent announcement of a revised vision for HPC efficiency. Piela and team made key tweaks to the TeraOS operating system, which sits at the heart of their High Performance Storage Appliance and negotiates the dependencies between the software and the hardware, as well as the overall management, monitoring, and tuning. The goal of these refinements (and their resulting appeal to more potential enterprise users) is to emphasize a more manageable, unified storage approach.

Terascala argues that conventional HPC solutions focus on overcoming the I/O bottleneck between the compute cluster and the storage subsystem, a bottleneck caused by the inability of scale-out NAS to deliver the multiple gigabytes per second of throughput that HPC requires.

While high throughput is important, it is only part of the solution. In other words, the company has moved away from seeing its role in HPC as purely storage- and hardware-driven; rather, it now treats HPC as a comprehensive, connected system of hardware, data, applications and workflows that must be optimized in combination to achieve the best results.

The company recently announced its new Terascala Intelligent Storage Bridge (ISB), a workflow manager for Lustre users who require direct access to data at any given point in their overall application workflow. The goal is to help large organizations move massive datasets quickly and automatically between fast scratch storage and enterprise storage, and hopefully to extend the company’s reach beyond its base of HPC customers in research and academia who are seeking a Lustre-based solution to their storage woes.

“Some time ago, we recognized that for HPC to be effective in the enterprise, the industry would need to adopt a holistic approach to the HPC lifecycle,” said Steve Butler, CEO of Terascala, following the announcement of the company’s new approach to storage. “The fastest storage is useless if the user applications are architected in such a way that they cannot take advantage of it. Terascala’s vision of optimizing application workflows will maximize the return on investment organizations gain from their large investments in HPC infrastructure.”

Terascala currently works with Dell and NetApp as the exclusive partners for its offerings and has raised over $30 million in funding since its founding.