Today, during the AWS re:Invent conference in Las Vegas, Amazon Web Services rolled out a new generation of EC2 instances aimed at the most compute-intensive applications. The C3 instance type sports the latest Intel Xeon processors, generous memory, and fast storage and networking.

The C3 joins the C1 and CC2 instances that make up Amazon’s Compute Optimized family, which Amazon says has “the highest performance processors and the lowest price/compute performance compared to all other Amazon EC2 instances.”

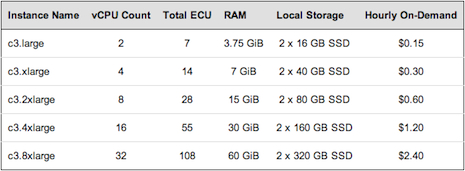

There are five sizes to select from: c3.large, c3.xlarge, c3.2xlarge, c3.4xlarge and c3.8xlarge with 2, 4, 8, 16 and 32 virtual cores (vCPUs) respectively.

The C3 instance type is powered by two-socket servers outfitted with Intel’s Xeon E5-2680 v2 processors, which sport 10 cores (20 threads) running at 2.8 GHz. These are variants of the Ivy Bridge parts that Intel released in September. Each C3 virtual core (vCPU) is a hardware Hyper-Thread. The C3 instance boasts SSD-based storage and employs what AWS calls “Enhanced Networking,” for “improved inter-instance latencies, lower network jitter and higher packet per second (PPS) performance.” The exact interconnect technology is not specified, but so far Amazon’s high-end instances have topped out at 10GbE.
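Because each vCPU is a single Hyper-Thread and the processor exposes two threads per core, the vCPU counts above can be translated into a rough physical-core equivalent. The following is a minimal sketch of that arithmetic, using only the sizes and vCPU counts listed in this article; the helper names `physical_core_equivalent` and `smallest_size_for` are hypothetical and not part of any AWS API.

```python
# vCPU counts per C3 size, as listed in this article.
C3_VCPUS = {
    "c3.large": 2,
    "c3.xlarge": 4,
    "c3.2xlarge": 8,
    "c3.4xlarge": 16,
    "c3.8xlarge": 32,
}

# The Xeon E5-2680 v2 has 10 cores and 20 threads, i.e. 2 threads per core,
# and each C3 vCPU is one of those hardware threads.
THREADS_PER_CORE = 2


def physical_core_equivalent(size: str) -> float:
    """Hypothetical helper: vCPUs of a size divided by threads per core."""
    return C3_VCPUS[size] / THREADS_PER_CORE


def smallest_size_for(vcpus_needed: int) -> str:
    """Hypothetical helper: smallest C3 size with at least `vcpus_needed` vCPUs."""
    for size, vcpus in sorted(C3_VCPUS.items(), key=lambda item: item[1]):
        if vcpus >= vcpus_needed:
            return size
    raise ValueError(f"no single C3 size offers {vcpus_needed} vCPUs")


if __name__ == "__main__":
    for name in C3_VCPUS:
        print(name, C3_VCPUS[name], "vCPUs,", physical_core_equivalent(name), "core equivalents")
```

By this reckoning a c3.8xlarge corresponds to 16 physical cores' worth of threads, which is less than the 20 cores in a two-socket server, so how instances are packed onto hosts remains an open question the article's sources do not answer.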

Amazon has, however, begun specifying the underlying processor types for its new instances. In a blog post, AWS rep Jeff Barr explains that this enables customers to tune their applications to exploit the processor’s specific characteristics.

Timothy Prickett Morgan, editor of HPCwire’s sister publication, EnterpriseTech, reports that Amazon CTO Werner Vogels, speaking at the AWS re:Invent conference, called this offering the “absolute highest performance instance” that AWS has. Vogels also said that the inter-instance latencies have been “brought down much lower,” enabling AWS users to run the highest performance HPC applications.

To highlight the potential of the C3 instances for real HPC workloads, AWS launched a 26,496-core cluster and compared it with the most recent TOP500 scores. With a LINPACK reading of 484.18 teraflops, the C3-backed cluster would nab a 56th place finish on the June 2013 list. This virtual cluster delivers about twice the LINPACK performance of its predecessor, an AWS cluster of 1,064 CC2 instances, which achieved 241 teraflops Rmax for a 41st place finish on the November 2011 TOP500 list.
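The comparison is easy to check from the figures quoted above. The short sketch below computes the speedup over the CC2 cluster and the sustained LINPACK throughput per core; it assumes the 26,496 figure is the core count the result was measured on, and the function names are illustrative only.

```python
# Figures quoted in this article.
C3_RMAX_TFLOPS = 484.18   # LINPACK result of the C3 cluster
C3_CORES = 26_496         # core count of the C3 cluster
CC2_RMAX_TFLOPS = 241.0   # LINPACK result of the earlier CC2 cluster


def speedup(new_tflops: float, old_tflops: float) -> float:
    """Ratio of the newer LINPACK result to the older one."""
    return new_tflops / old_tflops


def gflops_per_core(rmax_tflops: float, cores: int) -> float:
    """Sustained LINPACK gigaflops per core (1 teraflop = 1,000 gigaflops)."""
    return rmax_tflops * 1000.0 / cores


if __name__ == "__main__":
    print(f"C3 vs. CC2 speedup: {speedup(C3_RMAX_TFLOPS, CC2_RMAX_TFLOPS):.2f}x")
    print(f"Sustained per core: {gflops_per_core(C3_RMAX_TFLOPS, C3_CORES):.2f} GFLOPS")
```

The ratio works out to just over 2, and the per-core figure to a little more than 18 gigaflops of sustained LINPACK performance.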

AWS envisions that customers will use the new instance types for CPU-bound, scale-out applications and compute-intensive HPC workloads. Potential applications include high-traffic front-end fleets, batch processing, distributed analytics and HPC applications that can benefit from increased speed, more memory, faster storage, and an overall tighter configuration thanks to improved networking.

C3 instances are available for purchase in the US East (N. Virginia), US West (Oregon), EU (Ireland), Asia Pacific (Singapore), Asia Pacific (Tokyo), and Asia Pacific (Sydney) regions. On-Demand, Reserved and Spot pricing are all supported.