“I remember I had this little computer with 16K of memory, and everyone was astonished! What was I going to do with all this memory!” (Hans Zimmer, around 1983)

Music and technology have walked side by side for millennia. Musical instruments have followed advances in technology: they evolved first with mechanical and acoustic advances, then with electronics, and now they are transitioning into virtual reality, built on powerful code and efficient computational resources. History has taught us that different musical instruments gave us different sound palettes and, eventually, different genres of music. Mastering new technologies has always helped us develop new compositional styles and refine production approaches and sonics.

Some computer technologies improve by an average factor of 2 from one generation to the next. With high-performance computing (HPC) and supercomputers, the improvement can be a factor of 10 or more. In classic supercomputing style, let’s look for the things that will substantially change the way people do their audio and music work and, eventually, how audiences enjoy the results.

Music and Technology – An Ancient Bond

Around the 5th century BC, the ancient Greeks created the Chorus, a homogeneous, non-individualized group of performers who communicated with the audience, usually in song. The Chorus originally consisted of fifty members. Tragedians such as Sophocles and Euripides changed this number through various experiments. At the same time, in their quest to optimize the audience experience, ancient architects built venues with custom-designed acoustics. During the 18th century, the chamber orchestra emerged, also consisting of fifty musicians. Later came the full symphony orchestra, with about 100 musicians housed in auditoriums whose custom acoustics defined the sound of the experience. Music, orchestration and acoustics have always been treated as one, and for good reason.

The symphony orchestra is truly a piece of technology: every instrument is a technological wonder in its own right, and concert halls around the world are the subject of tremendous acoustic research. However, the most important element of an orchestra is the conductor. The conductor acts as the central node of a very-low-latency, high-bandwidth message-passing fabric, directing the musical performance in real time. This system architecture is the reason we have “Classical Music”. It is built on organic nodes (human players), the laws of acoustics and physics, and predetermined music written by the composer. The only limitations of this very advanced form of expression are that the music is fixed in advance by the composer and the acoustics are more or less predetermined. To put that in perspective, in Jazz the music can change in real time (improvisation), but the number of people interacting in real time is greatly reduced.

The Time Machine

Over the last 40 years, with the advancement of supercomputers and high-performance computing, we have realized that we can scientifically create virtual environments in which we pose specific questions and get answers. The better the questions are formed, the more defined the answers will be. Supercomputers have allowed us to do this for decades, in many industries. They are like time machines: they allow us to understand the past and create the future.

But what is the ultimate answer to Music? Maybe we can discover it by moving backwards, and this is the main reason for this historical introduction to music technology. If we take one of the highest forms of human collaboration and expression, the symphony orchestra and classical music, and examine it through a modern prism, we might find the answers we are looking for.

What are the ingredients of the modern hybrid recipe of orchestral music? Hollywood is the best place to look, as film scoring is the modern way of creating future classics.

Creating the HPC384 Spec.

I will use another Hans Zimmer quote here: “Music is organized chaos! … but not necessarily in a bad way, as organized chaos can sound pretty good!” Composers might be inherently good at organizing chaos.

For the past 17 years, programmers from all around the world have built virtual instruments and effects on software interfaces like VST, which run seamlessly on the x86 microprocessor architecture. Among high-performance computing systems, HPC clusters provide efficient, high-performance compute built from industry-standard hardware connected by a high-speed network.

Using HPC, we can apply advanced physics to model plate reverbs, create evolving, non-linear auditorium acoustics and emulate multiple microphone positions, giving sound endless possibilities. It is no longer necessary to rely on oversampled peak detection to estimate the true peaks of a signal between its samples. We have overcome the barriers of conventional, underpowered discrete-time systems: we process the actual audio, not ‘an estimate of it’, without fighting conventional CPU or DSP constraints. There is no way we can overload an HPC music production system when working at 88.2 kHz, 96 kHz, 192 kHz or even 384 kHz. Moreover, HPC allows us to use different sound qualities (sample rates) in the same project, so we can push the engines hard when we want to emulate analog synthesizers, lush reverbs, or accurate solid-state and thermionic valve circuitry that needs fine resolution in the microsecond time domain.
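
For readers unfamiliar with oversampled peak detection: it estimates the peaks that fall between samples, which a plain sample-peak meter cannot see. The sketch below is a minimal illustration in Python, assuming a Hann-windowed sinc interpolator; the function names, the kernel width and the 4x factor are illustrative choices, not part of the HPC384 Spec. A sine at a quarter of the sample rate with a 45° phase offset never lands a sample on its crest, so the sample peak reads about 0.707 while the waveform actually reaches 1.0.

```python
import math


def sample_peak(x):
    """Largest absolute sample value: what a plain (non-oversampled) peak meter reads."""
    return max(abs(v) for v in x)


def interpolate(x, t, half_width=32):
    """Band-limited estimate of signal x at fractional sample index t.

    Sums neighbouring samples weighted by a Hann-windowed sinc kernel that
    spans +/- half_width samples around t.
    """
    total = 0.0
    base = math.floor(t)
    for n in range(base - half_width + 1, base + half_width + 1):
        if 0 <= n < len(x):
            d = t - n  # distance from sample n, in samples
            sinc = 1.0 if d == 0 else math.sin(math.pi * d) / (math.pi * d)
            window = 0.5 * (1.0 + math.cos(math.pi * d / half_width))
            total += x[n] * sinc * window
    return total


def oversampled_peak(x, factor=4, margin=32):
    """Estimate the true (inter-sample) peak by checking `factor` points per sample.

    The first and last `margin` samples are skipped, because the kernel
    would run off the ends of the signal there.
    """
    peak = 0.0
    for n in range(margin, len(x) - margin):
        for k in range(factor):
            peak = max(peak, abs(interpolate(x, n + k / factor)))
    return peak


# A sine at a quarter of the sample rate, offset by 45 degrees: every sample
# is +/-0.7071, but the continuous waveform reaches +/-1.0 between samples.
fs = 48_000
f = fs / 4
x = [math.sin(2 * math.pi * f * n / fs + math.pi / 4) for n in range(256)]

print(f"sample peak:      {sample_peak(x):.4f}")       # 0.7071
print(f"oversampled peak: {oversampled_peak(x):.4f}")  # close to 1.0
```

The gap between the two readings (about 3 dB in this case) is exactly what oversampled meters exist to catch.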

At this critical juncture in the evolution of entertainment, with 3D and HDR, IMAX Cinema, Dolby® Atmos, DTS® Headphone:X, 6K cinema and 4K TV with HDMI 2.0 (which supports an audio bandwidth of up to 1536 kHz), the industry is creating a roadmap for a quality-aware audience. A true quality upgrade of the overall cinematic experience is under way. The HPC384 Spec. is here to keep music production on par with these innovations. It will provide the necessary tools, specifications and revolutionary techniques so that music professionals can produce and deliver high-quality content that meets the demands and expectations of their audience.

Preliminary Tests

In our preliminary tests, we rendered the first-ever reverb at 1536 kHz, using the U-He Zebra 2 VST clocked at 384 kHz as our sound generator. This sound is quite likely the most mathematically complex and harmonically rich single sound ever created in the digital domain. Sound examples are available here: http://www.hpcmusic.com/#!hpc384/crrb

U-He Diva, an advanced VST instrument, could play back in real time at 384 kHz with practically unlimited polyphony, while the same instrument on a top-of-the-range workstation cannot play more than a few notes at 192 kHz. The highest bandwidth we managed to work with was 6144 kHz. We use bandwidth as a measure of the system's efficiency for music production. This way, when software developers are ready for heavy, low-latency, near-real-time mathematics, we will know how to set up this reality engine. Moreover, Dolby is experimenting heavily with large numbers of surround channels to enhance the localization of sound. Using HPC, we can go a step further and enhance the localization of music (and not only sound) by composing and arranging in many-channel surround formats in a fully discrete way (3D Music).
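
The text does not define exactly how bandwidth is computed. A plausible reading, assumed here, is the aggregate sample rate: sample rate multiplied by the number of streams or channels. Under that assumption, the figures quoted above reduce to simple arithmetic, sketched below in Python; the helper names and the 32-bit sample size are illustrative assumptions. It also shows why 384 kHz work sits in the microsecond time domain: the sample period is about 2.6 µs.

```python
def streams_at(aggregate_khz: float, rate_khz: float) -> float:
    """Number of mono streams at rate_khz that fit in an aggregate bandwidth."""
    return aggregate_khz / rate_khz


def sample_period_us(rate_khz: float) -> float:
    """Time between consecutive samples, in microseconds."""
    return 1000.0 / rate_khz


def data_rate_mbit_s(rate_khz: float, bits: int = 32, channels: int = 1) -> float:
    """Raw PCM data rate in Mbit/s (assumes uncompressed samples of `bits` bits)."""
    return rate_khz * 1000 * bits * channels / 1e6


for rate in (48, 96, 192, 384):
    print(
        f"{rate:>4} kHz: period {sample_period_us(rate):6.3f} us, "
        f"{streams_at(1536, rate):5.0f} streams in 1536 kHz, "
        f"{streams_at(6144, rate):5.0f} streams in 6144 kHz"
    )

print(f"6144 kHz of 32-bit samples: {data_rate_mbit_s(6144):.3f} Mbit/s")  # 196.608
```

Read this way, 6144 kHz corresponds to 16 simultaneous streams at 384 kHz, and 1536 kHz corresponds to 4.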

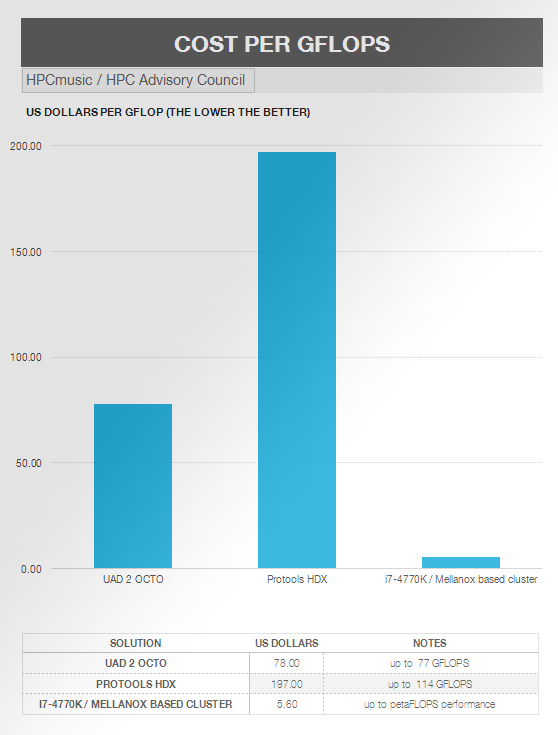

On a cost-per-GFLOPS basis, we found that HPC for music can be roughly 35X better than current industry-standard solutions. With 10X more bandwidth, we can achieve real-time performance per audio track and enable unlimited track counts (high scalability).
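
A cost-per-GFLOPS comparison divides each system's cost by its sustained GFLOPS and then takes the ratio of the two results. The Python sketch below shows that calculation only; the `System` class and every figure in it are hypothetical placeholders, not measurements from our tests, so the ratio it prints is not the 35X reported above.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    cost: float    # purchase cost, in any single currency
    gflops: float  # sustained GFLOPS on the workload being compared

    @property
    def cost_per_gflops(self) -> float:
        return self.cost / self.gflops


def advantage(candidate: System, baseline: System) -> float:
    """How many times cheaper each GFLOPS is on candidate than on baseline."""
    return baseline.cost_per_gflops / candidate.cost_per_gflops


# Placeholder figures for illustration only; NOT measured values.
workstation = System("workstation (hypothetical)", cost=5_000, gflops=100)
cluster = System("HPC cluster (hypothetical)", cost=20_000, gflops=2_000)

print(f"{advantage(cluster, workstation):.1f}X")  # 5.0X
```

The comparison is only meaningful when both GFLOPS figures come from the same audio workload, since peak and sustained throughput can differ widely.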

The future is about the audience experience

As for next steps, we need to work on the form factor of these solutions and further explore software opportunities. The evolution of music creation leads to an evolution of music enjoyment. In the same way that the vinyl record, the Walkman, the CD and the MP3 changed music for the better (or sometimes for the worse), we now see new products on the horizon that can revolutionize the audience experience.

Antonis Karalis

More info at www.hpcmusic.com