Researchers at North Carolina State University, working in partnership with the Argonne Leadership Computing Facility (ALCF), have demonstrated a proof-of-concept for a novel high-performance cloud computing platform by merging a cloud computing environment with a supercomputer. Project leads Patrick Dreher and Mladen Vouk discuss the evolution of the project on the ALCF website.

Cloud computing environment embedded inside ALCF Blue Gene/P “Surveyor”

The proof-of-concept implementations show that a fully functioning production cloud computing environment can be completely embedded within a supercomputer, thereby enabling the cloud’s users to benefit from the underlying HPC hardware infrastructure.

The work was part of the ALCF’s Director’s Discretionary (DD) program, which provided the research team with access to a Blue Gene/P machine to explore the feasibility of situating a distributed cloud computing architecture within a supercomputer.

Dreher and Vouk describe how this project is different from most other HPC cloud efforts. “The ‘traditional’ cloud design approach often starts with a relatively loosely coupled X86-based architecture,” they write. “Then, if there is an interest in HPC, a cloud computing service can be added and adapted to HPC computational needs and requirements. More often than not, such a service is based on virtual machines and is used by distributed applications that are not sensitive to the latency requirements for HPC applications.”

This research project employed an HPC-first approach: using a supercomputer to host a cloud, ensuring that the native HPC functions were present. The supercomputer’s hardware provided the foundation for a software-defined system capable of supporting a cloud computing environment. The authors state that this “novel methodology has the potential to be applied toward complex mixed-load workflow implementations, data-flow oriented workloads, as well as experimentation with new schedulers and operating systems within an HPC environment.”

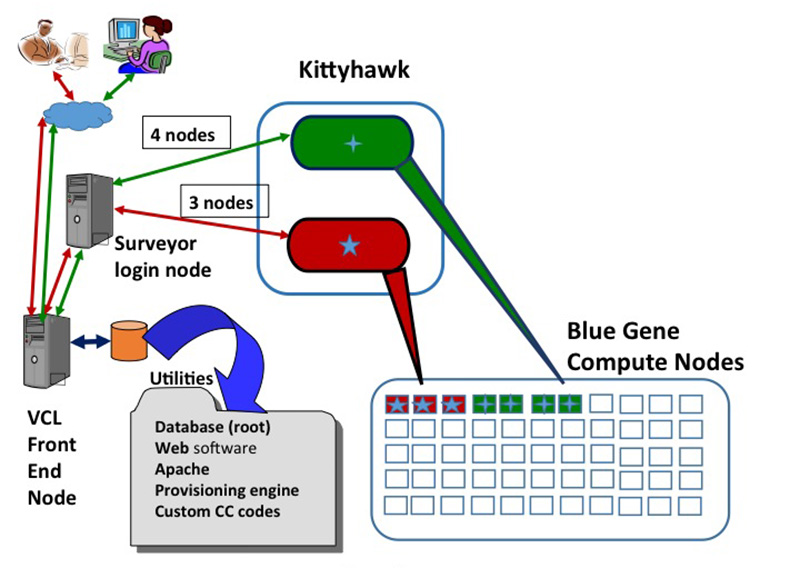

The project used the ALCF’s IBM Blue Gene/P supercomputer, Surveyor, a non-production, test and development platform with 1,024 quad-core nodes (4,096 processors) and 2 terabytes of memory, with a peak performance of 13.9 teraflops. A software utility package called Kittyhawk, originally developed with funding from IBM Research, proved to be indispensable. This open source tool serves as a provisioning engine and also offers basic low-level computing services within a Blue Gene/P system. The software is what enabled the team to construct an embedded elastic cloud computing infrastructure within the supercomputer.
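The Surveyor figures quoted above are mutually consistent, as a quick back-of-the-envelope check suggests. The sketch below takes the node count, cores per node, and total memory from the article; the 850 MHz clock rate and 4 floating-point operations per cycle per core are assumptions drawn from published Blue Gene/P specifications and are not stated in the article. The function names are illustrative only.

```python
# Back-of-the-envelope check of the Surveyor figures quoted in the article.

# Figures taken from the article
NODES = 1024             # quad-core compute nodes
CORES_PER_NODE = 4
TOTAL_MEMORY_TB = 2

# Assumptions (not in the article): published Blue Gene/P specifications
CLOCK_GHZ = 0.85         # 850 MHz PowerPC 450 cores
FLOPS_PER_CYCLE = 4      # per core, dual FPU with fused multiply-add


def total_cores() -> int:
    """Total number of cores across all nodes."""
    return NODES * CORES_PER_NODE


def peak_teraflops() -> float:
    """Theoretical peak: cores x clock (GHz) x flops per cycle, in teraflops."""
    gigaflops = total_cores() * CLOCK_GHZ * FLOPS_PER_CYCLE
    return gigaflops / 1000


def memory_per_node_gb() -> float:
    """Memory per node in gigabytes, treating 1 TB as 1,024 GB."""
    return TOTAL_MEMORY_TB * 1024 / NODES


if __name__ == "__main__":
    print(f"cores: {total_cores()}")
    print(f"peak: {peak_teraflops():.1f} teraflops")
    print(f"memory per node: {memory_per_node_gb():.0f} GB")
```

Under these assumptions the calculation reproduces the 4,096 processors and the roughly 13.9-teraflop peak cited for Surveyor, and implies about 2 GB of memory per node.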

For the cloud functionality, the team went with the Virtual Computing Laboratory (VCL) cloud computing software system, originally designed and developed at NC State University. This open source cloud computing and resource management system covers the full range of cloud capabilities, including Hardware as a Service (HaaS), Infrastructure as a Service (IaaS), Platform as a Service (PaaS), Software as a Service (SaaS), as well as Everything as a Service (XaaS) options. It also includes a graphical user interface, an application programming interface, authentication plug-in mechanisms, on-demand resource management and scheduling, bare-machine and virtualized machine provisioning, a provenance engine, sophisticated role-based resource access, and license and scheduling options.

The HPC-first design approach to cloud computing leverages the “localized homogeneous and uniform HPC supercomputer architecture usually not found in generic cloud computing clusters,” according to the project leads. Such a system has the potential to support multiple workloads, from traditional HPC simulation jobs to workflows that involve both HPC and non-HPC analytics to data-flow-oriented work. The implementation should be compatible with any supercomputer that has the Kittyhawk utility installed, and the next step is to scale the proof-of-concept to other systems.