Not sure about the rest of you out there in supercomputing land, but the month of January seems to have flown by. Time tends to tick a bit faster with an active news cycle and evolving promises of more action in these early days of 2014.

Before we move into the top picks from this week’s news, it seemed pertinent to point to something rolling out in the next couple of weeks. A new special feature series on the (many) lessons HPC has to offer large-scale enterprise users kicked off with an introductory article. More specific drill-downs on those themes will follow, covering addressing, managing, storing and analyzing data at multiple layers; finding efficient ways to scale; balancing performance with other critical needs; and more. Cultivating responses and crafting around the themes many of you identified for your enterprise brethren (and…sistren?) has been eye-opening, to say the least. Stay tuned.

With that, a quick note about another series, this one ongoing. As many of you have probably noticed, we’ve changed the format of our Soundbite podcast series and are now carrying daily (Monday through Friday) editions. The aim is to bring diverse perspectives to bear from many corners of the HPC ecosystem in engaging interview form, all in a 20-minute(ish) envelope. For instance, this week we talked with a Texas A&M researcher seeking more robust measurements for quantum computing, surveyed the data landscape for astrophysics with Dr. Kirk Borne, went inside some molecular dynamics research at ORNL on the novel Anton system, and checked in with trusty Grid Engine via an interview with Univa’s CEO. On tap for tomorrow and next week, we have the new acting director at TACC to talk about Stampede’s first year and lessons learned from their use of Xeon Phi, as well as a talk with D-Wave president Bo Ewald.

But enough chatter. Take a moment to see if you’re on the list of People to Watch for 2014 (although chances are you’d know by now), then let’s burrow into the news of the week with some of the most noteworthy items that crossed the wires…

Congratulations to NERSC: Edison System Accepted

This is one cool system—quite literally.

In addition to its compute horsepower, this will be the first supercomputer at NERSC to rely solely on outside air to stay chilled, a technique known as “free cooling.” Water is piped through outside cooling towers and back into the system’s internal radiators, which cool the air instead of heating it.

As NERSC describes, “Fans located between each pair of cabinets in a row pull air in one end; circulate it through a radiator, over the hot components and on to the next set of cabinets before it exits at the row’s end. This side-to-side airflow, or transverse cooling, is more energy efficient than the typical front-to-back flow of most systems.

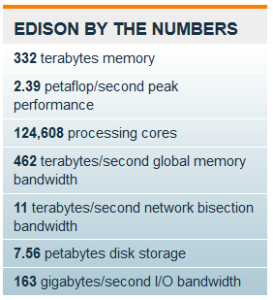

“Edison can execute nearly 2.4 quadrillion floating-point operations per second (petaflop/s) at peak theoretical speeds. While theoretical speeds are impressive, ‘NERSC’s longstanding approach is to evaluate proposed systems by how well they meet the needs of our diverse community of researchers, so we focus on sustained performance on real applications,’ said NERSC Division Deputy for Operations Jeff Broughton, who led the Edison procurement team.”

Edison will be dedicated on February 5 as the teams there also celebrate 40 years of the center’s operation. We’ll check in with the system following the dedication to learn how the current set of early users and production runs are making use of the scientific resource.

IBM Announces STAR of Approval, Partnerships

IBM has been stealing headlines in January, most notably with the sale of their x86 server business to Lenovo. We talked to Dave Turek, VP of Advanced Computing at IBM, about that (and their refreshed HPC strategy) here. The interview with Turek came in the wake of an announcement about a new partnership with Texas A&M University.

To add to their news ranks, they took the lid off eleven new servers that meet the v2.0 guidelines now in effect under the U.S. Environmental Protection Agency’s ENERGY STAR program. The qualified Power Systems servers are the Power 730, Power 740, Power 750 and Power 760. The qualified System x servers are the x3650 M4 HD, x3650 M4, x3500 M4, x3550 M4, dx360 M4, nx360 M4 and x222.

As IBM commented, “the agency also predicts that if all servers sold in the United States were to meet ENERGY STAR specifications, energy cost savings would approach $800 million per year and prevent greenhouse gas emissions equivalent to those from over one million vehicles.”

Internet2 Secures $1.3 Million NSF Grant

Internet2 announced this week that it received a grant for $1.3 million from the National Science Foundation (NSF) to support small colleges and universities that have notable research projects and cyberinfrastructure needs even though the institutions are not primarily research focused.

Internet2 provides a collaborative environment for U.S. research and education organizations to solve technology challenges, and to develop innovative solutions in support of their educational, research, and community service missions.

The grant project, titled “Broadening the Reach: Support of Campus Cyberinfrastructure at Non-Research-Intensive and EPSCoR Institutions,” will consist of three workshops and up to 30 campus consulting visits over two years.

AMD Wraps Around ARM, Open Compute

The Open Compute Summit generated some key processor news this week from the likes of AMD, which announced a comprehensive development platform for its first 64-bit ARM-based server CPU, fabricated using 28 nanometer process technology. The company also formally announced the upcoming sampling of the processor, named the AMD Opteron A1100 Series; the development platform includes an evaluation board and a comprehensive software suite.

Adding to those key news items, AMD also said they’d be contributing a new micro-server design using the AMD Opteron A-Series for the Open Compute Project, as part of the common slot architecture specification for motherboards dubbed “Group Hug.” Awww….

University Technology Adoption/Research News

Here’s just a quick overview of some notable items on that front:

UL Lafayette Research Assists U.S. Military

University of Luxembourg Boosts Research Capabilities with Allinea MAP

University of Bristol Joins IPCC Program

And speaking of news from that segment, don’t forget that current students can Apply for Summer School on HPC Challenges in Computational Sciences.

That about wraps it up for the week; make sure to take a look at the Off the Wire section for more news that didn’t make it to the top items.