Four years ago, a friend dropped a Sheeva Plug into the hands of Ronald Luijten, a system designer at IBM Research in Zurich. At the time, neither could have realized the development cycle this simple gift would spark.

If you’re not familiar, Sheeva Plugs are compact devices that look a lot like your laptop power adapter, except instead of an electrical output plug, there’s a handy gigabit Ethernet port. Luijten, whose primary interests lie in data movement and energy management, immediately saw the potential. He put his minimalist inclinations to work, and within a few months had VNC, an OS and a web server running from a USB-attached hard drive. What struck him most, however, came when he measured the power draw at the mains and found the whole thing was running at a mere 4.3 watts. “I couldn’t believe this,” he said. “When I thought about it further, I saw it was the beginning of a revolution.”

This discovery coincided with a much larger project Luijten was involved with at IBM Research. In conjunction with ASTRON, a team tapped some of Big Blue’s best minds to help the Square Kilometer Array (SKA) team find solutions to the unprecedented power, compute and data movement challenges inherent to measuring the Big Bang. Over the next decade, SKA researchers will be able to look back 13.8 billion years (and over a billion dollars) with 2 million antennae that will pull together a signal at the end of each day based on 10-14 exabytes of data, culminating in a daily condensed dose of info in the petabyte range. Doing this will require well beyond what the exascale machines of the 2020 timeframe will offer, but there’s another problem. The signals are being collected at the most radio wave-free locations on Earth, which happen to be places where there’s no power grid or internet.

This was the perfect set of conditions for IBM and SKA/ASTRON researchers to think outside of the power-hungry boxes that are required to feed this kind of science. It was also the perfect opportunity for an ultra low-power approach that recognizes that the compute is easy; it’s the data movement that’s the real power drain. Since altering the speed of light is out of the question, the only answer seems to be integrating as much as possible into a neat whole. While some of that technology still needs to mature (particularly in areas like stacked memory), Luijten was able to demonstrate how big compute and little movement can be lashed together for maximum efficiency and multiple workloads.

But this isn’t all in the name of grand science. In addition to seeing a path to helping SKA with its noble mission, IBM too was able to see a path to meeting the “compute is free but data is not” paradigm. Luijten says their needs were specific; they wanted to see a microserver that could provide an ultra low-power “datacenter in a box” that could leverage commodity parts and condensed packaging. Further, it would have to be true 64-bit to be of commercial value (which meant no ARM since it wasn’t on the near horizon then), and would have to run a server-class operating system.

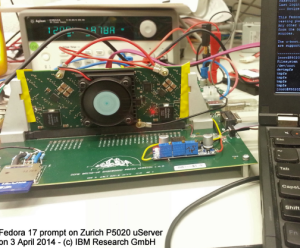

Building off the lesson learned during his Sheeva Plug jaunt, Luijten set to work with the one and only 64-bit chip on the market. In this case, it was the P5020 chip from Freescale—a product made specifically for the embedded market, thus without any of the software required for doing anything other than powering small devices operating on custom code. He says the Linux that came in the box was limited and he couldn’t even run the compiler. There was certainly no OS to meet IBM’s eventual needs, but with the help of a colleague and folks at Freescale, Luijten was able to get Fedora up and running on the 2.0 GHz Power-based architecture. And so the DOME Microserver was born.

Getting Fedora to sing on the DOME was just the first hurdle. The absence of an ecosystem was an incredible challenge, and it took multiple iterations with different OS approaches that blended the server and embedded realms. He imagined that finally getting a functional server-class OS running would be only half of the trouble, and that the real challenges lay ahead in building application functionality around it.

However, to Luijten’s surprise, just two days after the Fedora success, they were able to get IBM’s DB2 up and running on the tiny motherboard. Without compiling. This is indeed the same DB2 that requires ultra-pricey System X datacenters, at a much greater up-front cost and, of course, a much greater operational and power cost.

Luijten relayed a quick story about a chat he had with someone in upper management on the development side at IBM about what they were able to do; the manager flat-out denied it was possible. “He probably still doesn’t believe it to this day,” he laughed. But sure enough, he said, they had a program that ran for weeks on a single node atop DB2, with a PHP app in a web browser that could kick through a basket of workloads on the Freescale-carried DB2 engine, all at around 55 watts.

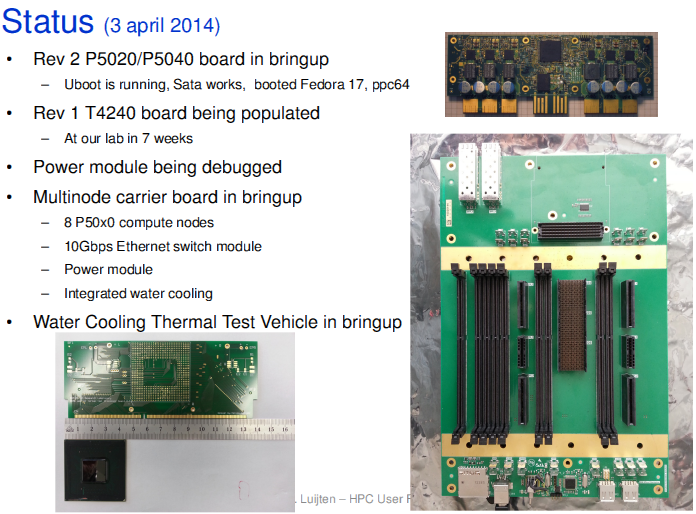

The very small team (just Luijten, another comrade and a group of researchers at Freescale) grabbed the chance to take hold of the new incarnation of the chip, which moved them from two cores to 12, a major leap that didn’t require a recompile to run DB2 again. The newest part, the T4240, runs at 60 watts but comes with some major enhancements for his aims: better threading (this is “true threading,” he says, not hyperthreading), a bump to three memory channels, and a move down to 28 nm (versus 45 nm).

The datacenter-in-a-box approach puts 128 of these boards, each using the newest chip, into a single installation, yielding 1536 cores and 3072 threads with either 3 or 6 TB of DRAM. Paired with novel hot water cooling (à la SuperMUC), this makes for a rather compelling idea for cloud datacenters and, of course, for power-aware, poor folks who want their commercial or research applications to run in a lightweight, cheap way. As for HPC, it’s all about potential and possibilities at this point versus anything practical. Again, this is a proof of concept project. Benchmark results and scaling capabilities will be forthcoming, but for anyone who wants a firsthand lesson in the pains of a non-existent software ecosystem, the ARM guys aren’t the only ones to look to for war stories.
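For readers who want to check the numbers, here is a rough back-of-the-envelope sketch of the figures quoted above. The board count, cores per chip and 60-watt chip figure come from the article; the two threads per core is an assumption inferred from the 3072/1536 ratio, the per-board DRAM assumes 1 TB = 1024 GB split evenly, and the power total covers the processors only (no DRAM, networking or conversion losses), so treat it as a floor rather than a measured result. The function and constant names are ours, purely for illustration.

```python
# Back-of-the-envelope totals for the 128-board "datacenter in a box".
BOARDS = 128            # boards per box (from the article)
CORES_PER_CHIP = 12     # T4240 cores (from the article)
THREADS_PER_CORE = 2    # assumption: implied by 3072 threads / 1536 cores
CHIP_WATTS = 60         # T4240 power figure quoted in the article


def box_totals(dram_tb):
    """Return aggregate figures for one box with `dram_tb` TB of DRAM."""
    cores = BOARDS * CORES_PER_CHIP
    threads = cores * THREADS_PER_CORE
    # Assumes DRAM is split evenly across boards and 1 TB = 1024 GB.
    dram_gb_per_board = dram_tb * 1024 / BOARDS
    # Processor power only; excludes DRAM, network and conversion losses.
    chip_power_kw = BOARDS * CHIP_WATTS / 1000
    return {
        "cores": cores,
        "threads": threads,
        "dram_gb_per_board": dram_gb_per_board,
        "chip_power_kw": chip_power_kw,
    }


if __name__ == "__main__":
    for tb in (3, 6):
        print(tb, "TB configuration:", box_totals(tb))
```

Under those assumptions, the 3 TB and 6 TB options work out to 24 GB and 48 GB of DRAM per board, and the processors alone would draw a little under 8 kW across the whole box.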

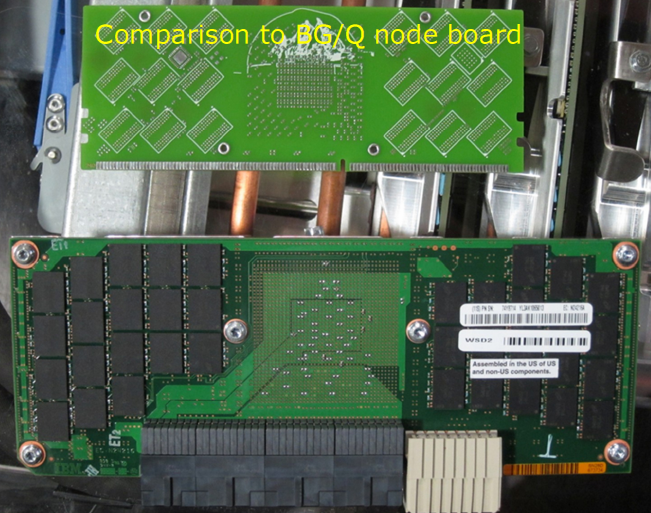

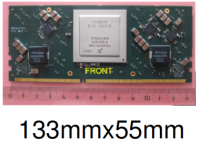

Just as a side note, while sitting with Luijten at the IDC User Forum this week, we set the little server node motherboard next to my iPhone. It was just a tad longer. Do some mental comparisons for size scale, or take a look at his part versus a BlueGene board. Sitting this next to a Calxeda or Moonshot offers about the same viewing experience.

Microservers should package the entire server node motherboard into a single microchip, leaving off some elements that wouldn’t make sense (including DRAM, power conversion logic and NOR Flash since they don’t fit), says Luijten. There are many motherboards that have graphics and such, but this is pared down.

And yes, this was from a conversation at an HPC-centric event, which might strike some of you as a bit strange. Luijten says that he definitely does not do HPC, but Earl Joseph believes strongly that the DOME microserver project is a perfect example of the type of technology that could be disruptive to the industry going forward. It’s power constrained, price-aware, and performance-oriented. While specs on the flops front are in short supply (you can do some quick math based on what Freescale has made available; the result is not shabby for the size and power envelope), Joseph is spot-on. This was one of the more compelling presentations during the two days in Santa Fe and, based on sideline conversations, one of the most widely discussed.

It should be noted that these aren’t coming to a rack near you anytime soon. It’s still a research project, but it’s one that Freescale isn’t taking lightly, even if it’s not been as mainstream at IBM as Luijten might like to see one day. This would make a pretty compelling cloud server for Freescale and they’re working with him now to run some benchmarks to get a better baseline on the performance capabilities that will be shared in a press release eventually.

What IBM will do with the eventual success or interest in the concept on the development side remains anyone’s best guess, especially as the first drums of the ARM invasion can be heard beating in the not-so-far distance. “IBM sold off its System X business because the moment a technology becomes commodity, they get out of the game,” Luijten reflected. They can’t sustain a business on driving a commodity market, hence they’re looking now to things like cognitive computing, among other efforts.

He says that while IBM is not incredibly interested in what he’s working on now, at least in any serious product-driven way, he’s found that with research like this, it helps to be more than just a good technical engineer. “Someone said I’m like an entrepreneur,” he laughed. “It’s not enough to develop this technology, it has to be marketed and you have to find interest however you can.”

We’ll close with the most recent development/progress via one of his slides. And of course, we’ll continue to watch this, even if it’s remote from the HPC we’re looking at now.