With the much-talked-about upcoming report from the Intergovernmental Panel on Climate Change (IPCC), titled “Working Group III: Mitigation of Climate Change,” scheduled for release on Sunday, we thought it fitting to highlight the community that makes this large-scale climate research possible: the Earth System Grid Federation (ESGF). The ESGF is an international collaboration that develops the software powering most global climate change research, including the IPCC assessments.

This interagency effort is led by the Department of Energy (DOE), with co-funding from the National Aeronautics and Space Administration (NASA), the National Oceanic and Atmospheric Administration (NOAA), the National Science Foundation (NSF), and international laboratories such as the Max Planck Institute for Meteorology (MPI-M), the German Climate Computing Centre (DKRZ), the Australian National University (ANU) National Computational Infrastructure (NCI), and the British Atmospheric Data Centre (BADC).

The mission of the ESGF is to provide the worldwide climate-research community with access to the data, information, codes and analysis tools necessary to make sense of enormous climate data sets. For the ESGF, the DOE’s Earth System Grid Center for Enabling Technologies (ESG-CET) team developed and delivered a production environment for managing and accessing extreme-scale climate data. This production environment serves a worldwide community with data from multiple climate models, ocean model data, and observation data, as well as analysis and visualization tools.

Data holdings and services are distributed across multiple sites, including ESG-CET sites – ANL, LANL, LBNL/NERSC, LLNL/PCMDI, NCAR, and ORNL – as well as partner sites, such as the Australian National University National Computational Infrastructure, the British Atmospheric Data Centre, the National Oceanic and Atmospheric Administration Geophysical Fluid Dynamics Laboratory, the Max Planck Institute for Meteorology, the German Climate Computing Centre, the National Aeronautics and Space Administration Jet Propulsion Laboratory, and the National Oceanic and Atmospheric Administration.

According to a recent report authored by principal investigator Ann Louise Chervenak with the University of Southern California, one of the seven initial ESG-CET partners, “ESGF software is distinguished from other collaborative knowledge systems in the climate community by its widespread adoption, federation capabilities, and broad developer base. It is the leading source for present climate data holdings, including the most important and largest data sets in the global climate community, and – assuming its development continues – we expect it to be the leading source for future climate data holdings as well.”

In the final report for the University of Southern California Information Sciences Institute, titled “DOE SciDAC’s Earth System Grid Center for Enabling Technologies,” Chervenak conveys the comprehensive scope of the Earth System Grid and the numerous ways it is facilitating global climate science.

“As we transition from development activities to production and operations, the ESG-CET team is tasked with making data available to all users seeking to understand, process, extract value from, visualize, and/or communicate it to others — this is of course if funding continues at some level,” the report states. “This ongoing effort, though daunting in scope and complexity, would greatly magnify the value of numerical climate model outputs and climate observations for future national and international climate-assessment reports. The ESG-CET team also faces substantial technical challenges due to the rapidly increasing scale of climate simulation and observational data, which will grow, for example, from less than 50 terabytes for the last Intergovernmental Panel on Climate Change (IPCC) assessment to multiple petabytes for the next IPCC assessment. In a world of exponential technological change and rapidly growing sophistication in climate data analysis, an infrastructure such as ESGF must constantly evolve if it is to remain relevant and useful.”

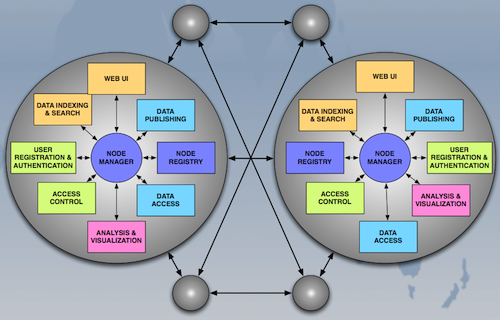

Despite the project’s significant role, this final report also marks the end of ESG-CET’s funding stream; however, the system the team helped create lives on. A principal aim of ESG-CET was implementing a peer-to-peer system of distributed nodes. This model allows the management of system information and the execution of data requests to be shared across distributed nodes, each composed of modular, pluggable components that interact through a lightweight messaging system.
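To make the peer-to-peer idea concrete, here is a minimal Python sketch of nodes that carry pluggable components and exchange small messages. It is an illustration only, not ESGF code: the names `Node`, `Message`, `search_component`, and `build_federation`, as well as the dataset identifiers, are hypothetical and chosen for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Message:
    topic: str      # which kind of request this is, e.g. "search"
    payload: dict   # request parameters


# A component is any callable that handles one topic on behalf of a node.
Component = Callable[["Node", Message], list]


@dataclass
class Node:
    name: str
    datasets: List[str] = field(default_factory=list)
    # repr=False avoids infinite recursion when peers reference each other.
    peers: List["Node"] = field(default_factory=list, repr=False)
    components: Dict[str, Component] = field(default_factory=dict, repr=False)

    def plug(self, topic: str, component: Component) -> None:
        """Register (or swap) the component that handles a topic."""
        self.components[topic] = component

    def handle(self, msg: Message) -> list:
        """Dispatch a message to the local component for its topic."""
        component = self.components.get(msg.topic)
        return component(self, msg) if component else []

    def request(self, msg: Message) -> list:
        """Answer locally, then ask every peer and merge the results."""
        results = list(self.handle(msg))
        for peer in self.peers:
            results.extend(peer.handle(msg))
        return results


def search_component(node: Node, msg: Message) -> list:
    """Return (node name, dataset) pairs whose id contains the query text."""
    text = msg.payload["text"]
    return [(node.name, d) for d in node.datasets if text in d]


def build_federation() -> List[Node]:
    """Build two hypothetical peer nodes holding made-up dataset ids."""
    a = Node("node-a", ["cmip5.tas.monthly", "obs.sst.daily"])
    b = Node("node-b", ["cmip5.pr.monthly"])
    a.peers, b.peers = [b], [a]
    for n in (a, b):
        n.plug("search", search_component)
    return [a, b]
```

In this sketch, a request sent to any one node is answered from that node's holdings and from its peers, and a node with no component plugged in for a topic simply returns nothing. A production federation would add networking, authentication, and failure handling, none of which is modeled here.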

Supporting critical earth science research continues under the Earth System Grid Federation (ESGF) Peer-to-Peer (P2P) project. Per the ESGF.org website, “ESGF P2P is a component architecture expressly designed to handle large-scale data management for worldwide distribution. The team of computer scientists and climate scientists has developed an operational system for serving climate data from multiple locations and sources. Model simulations, satellite observations, and reanalysis products are all being served from the ESGF P2P distributed data archive.”

The ESGF is also in the midst of some big changes. Known for supporting large-scale climate change research, the ESGF will soon be serving two additional domains critical to 21st-century life: biology/pharmacology and energy research.