We round out the middle of May today, sensing how much closer we are to the newest Top 500 list, to be announced in Leipzig at the end of June. While we’re still not expecting any surprises to upset the Chinese system’s continued dominance of the list, we have been catching wind of some new appearances and notable omissions from the Linpack benchmark. More coming on that in the next couple of weeks.

On another note, we’re quite excited that our call to action on open sourcing broader research concepts through HPCwire has spoken to several of you. We look forward to publishing the pieces that are currently in development and undergoing review. For more details, just in case you missed the initial call, there’s a detailed rationale here.

Before we kick off the week’s news, we wanted to share the preview of some amazing scientific visualization in action.

Data-driven viz created by NCSA’s Advanced Visualization Laboratory will be featured in the Adler Planetarium’s new live show “Destination Solar System.” Opening May 16, the show takes visitors on a tour “from sizzling solar flares on the Sun to liquid methane lakes on Saturn’s moon.” For those who are too far away to watch in person, check out the video:

It’s always great to see the fruits of HPC research find a way to mainstream audiences–and this looks quite stunning.

Top News Items This Week

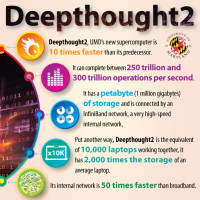

The University of Maryland has unveiled Deepthought2, one of the nation’s fastest university-owned supercomputers, to support advanced research activities ranging from studying the formation of the first galaxies to simulating fire and combustion for fire protection advancements. The Dell-built system has a processing speed of about 300 teraflops.

Deepthought2 replaces the original Deepthought, installed in 2006. The new supercomputer, which is 10 times faster than its predecessor, has a petabyte of storage and is connected by an InfiniBand network. “Deepthought2 places the University of Maryland in a leadership position in the use of high-performance computing in support of diverse and complex research,” said Ann G. Wylie, Professor and Interim Vice President for Information Technology. “This new supercomputer will allow hundreds of university faculty, staff, and students to pursue a broad range of research computing activities locally – such as multi-level simulations, big data analysis, and large-scale computations – that previously could only be run on national supercomputers,” Dr. Wylie said.

The German Climate Computing Center (DKRZ) and Bull have signed a contract for the delivery of a petaflops-scale supercomputer, as well as cooperation on climate research simulation. The contract, worth 26 million euros, covers the delivery of all the key computing and storage components of the new system.

If the new system were fully installed today, it would rank among the five fastest supercomputers in Germany according to the current Top500 list. The project is notable in another respect as well: its 45-petabyte storage system is one of the largest in the world. DKRZ is setting new standards with the deployment of this infrastructure, specifically scaled to support its users’ scientific research programs.

Oxford University has installed Panasas ActiveStor 14 storage to improve reliability and performance at its Advanced Research Computing (ARC) Centre. The ARC facility is a central resource available to university researchers who need access to high performance computing resources. ARC operates a range of distributed memory, shared memory, and GPU-enabled HPC clusters that are primarily used for scientific and statistical modeling workflows.

The installation consists of 330TB of Panasas ActiveStor 14 storage that is now providing a single pool of storage under a global name space at the heart of the center’s infrastructure. The hybrid scale-out NAS system provides university researchers with the storage required for home areas, general purpose mid-term projects, and high-performance short-term jobs.

A3CUBE has partnered with FCI to bolster its RONNIEE 2S, A3CUBE’s PCIe Network Interface Card with 80 Gb/s of aggregated bandwidth and four external Mini-SAS HD ports capable of running four PCIe x4 Gen 2 links (4 links x 20 Gb/s) over Mini-SAS HD cables. Connectivity options for the RONNIEE 2S include both copper and optical versions of the Mini-SAS HD cable. Connections up to 9m can be established over a standard AWG24 Mini-SAS HD copper cable. For longer lengths or for easier cable management, the RONNIEE 2S supports Mini-SAS HD Active Optical Cables. With the support of FCI, A3CUBE procured state of the art optical cables of varying lengths that were necessary for successful field tests.
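For readers who want to check the bandwidth arithmetic, here is a minimal sketch. The per-lane rate (5 GT/s) and the 8b/10b encoding overhead are standard PCIe Gen 2 figures, not numbers from the announcement, and the function names are hypothetical. It suggests the quoted 80 Gb/s is the raw signaling rate across all four links, with roughly 64 Gb/s left for data after encoding.

```python
# Assumed PCIe Gen 2 figures (from the PCIe spec, not from the A3CUBE announcement).
GEN2_GT_PER_LANE = 5.0   # raw signaling rate per lane, in gigatransfers/s
ENCODED_BITS = 10        # 8b/10b coding sends 10 bits on the wire...
PAYLOAD_BITS = 8         # ...for every 8 bits of data


def link_bandwidth_gbps(lanes, after_encoding=False):
    """Bandwidth of one PCIe Gen 2 link with the given lane count, in Gb/s."""
    raw = lanes * GEN2_GT_PER_LANE
    if after_encoding:
        return raw * PAYLOAD_BITS / ENCODED_BITS
    return raw


def card_bandwidth_gbps(links=4, lanes_per_link=4, after_encoding=False):
    """Aggregate bandwidth across all external links on the card, in Gb/s."""
    return links * link_bandwidth_gbps(lanes_per_link, after_encoding)


if __name__ == "__main__":
    print("per link (raw):       ", link_bandwidth_gbps(4), "Gb/s")
    print("card (raw):           ", card_bandwidth_gbps(), "Gb/s")
    print("card (after 8b/10b):  ", card_bandwidth_gbps(after_encoding=True), "Gb/s")
```

The defaults of four links and four lanes per link mirror the "4 links x 20 Gb/s" description above; real-world throughput would be lower still once protocol overhead is counted.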

Allinea announced that the latest version of its debugging tools, Allinea DDT 4.2.1, has been tailored to offer full support for NVIDIA CUDA 6, the latest release of the parallel computing platform and programming model. “Unified memory will be transformative for codes, as it removes the need to manually copy data between the host CPU and accelerator. The ability to debug with Allinea DDT from day one will make a huge difference to developers who need tools that can scale out to their biggest systems and most challenging bugs. With these tools available, users can quickly deploy CUDA 6 in production,” says Allinea CEO David Lecomber.

Speaking of GPUs, ArrayFire v2.1, supporting CUDA and OpenCL, has been released. This new version adds full commercial support for Mac OS X, as well as language support for R, Fortran, and Java. A complete list of ArrayFire v2.1 updates and new features can be found in the product Release Notes. ArrayFire is a CUDA and OpenCL library designed for maximum speed without the hassle of writing time-consuming CUDA and OpenCL device code. With ArrayFire’s library functions, developers can maximize productivity and performance. Each of ArrayFire’s functions has been hand-tuned by CUDA and OpenCL experts.

On a final note, don’t forget…

The early bird registration period for the 29th annual International Supercomputing Conference will close Thursday, May 15, thus bringing the opportunity for attendees to save 25 percent off the on-site registration rates to an end. The organizers encourage participants not to wait until the last minute to register.

Can’t wait to see you all there…so fun to meet everyone in person, especially our European friends who don’t cross the pond for SC.