Today at ISC14 Cray announced a $54 million contract with the Korea Meteorological Administration (KMA) for two next-generation Cray XC supercomputers and a Cray Sonexion storage system. This latest win, however, is just part of a larger story about a supercomputing company that is still very much relevant four decades after founder Seymour Cray helped spawn an industry with the famed Cray-1.

Since the beginning of the year, Cray has announced six contracts from a portfolio that includes its flagship XC supercomputers and CS300 systems as well as its Adaptive Storage solution. In addition to the KMA deal, the company has signed agreements with the Department of Defense High Performance Computing Modernization Program, the Hong Kong Sanatorium and Hospital, the North German Supercomputing Alliance (HLRN), the National Energy Research Scientific Computing Center (NERSC), and the University of Tsukuba in Japan.

Recent success aside, Cray faced some tumultuous periods in the 90s as foreign competition increased and it had to compete with the likes of Fujitsu and Hitachi. The company originally known as Cray Research was acquired by Silicon Graphics, Inc. (SGI) in 1996 and then sold to Tera Computer Company in 2000, before being reborn as Cray Inc. Some difficult restructuring followed and Peter Ungaro was promoted to CEO in 2004. Ungaro and his team decided that instead of fighting the commodity microprocessor trend, they would exploit it by focusing on multicore chips.

In the decade since Ungaro took the helm, the company has mounted a robust comeback, and one sign that this strategy is paying off is Cray’s strong showing on the latest TOP500 list. For starters, three of the top ten spots are occupied by Cray systems. Titan, a 17.59 petaflop (LINPACK) Cray XK7 system, has been sitting pretty in the number two spot since June 2013, when China’s Tianhe-2 knocked Titan from its first-place perch. Cray also claims the number six spot (with the 6.27 petaflop Swiss “Piz Daint”) and the number ten spot, an undisclosed US government system running at 3.14 petaflops. As we mentioned in yesterday’s detailed TOP500 coverage, this mysterious Cray XC30 is the only new addition to the coveted top 10 echelon.

Drilling down into the list to examine vendor share also turns up some revealing insights. Cray currently sits in third position with 50 systems, 10 percent of the total. HP enjoys a 36.4 percent share (down from 39 percent in November), and IBM is just behind HP with 35.2 percent (up from 33 percent on the previous list). Rounding out fourth and fifth place by list share are SGI (with 3.8 percent) and Bull (with 3.4 percent). If you prefer to slice and dice based on performance as opposed to system share, the ordering is nearly the same, except that the top two trade places: IBM, HP, Cray, SGI and Bull.

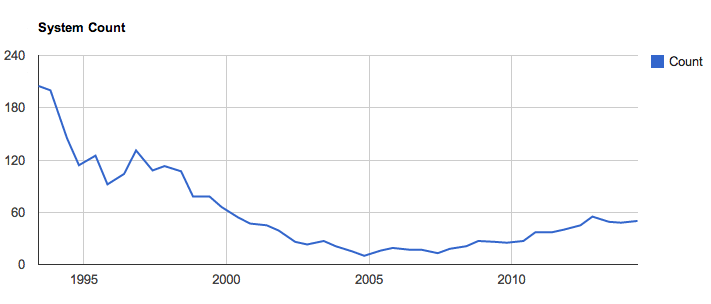

Cray’s TOP500 system count

Perhaps the most interesting data point of all, though, is Cray’s historical system count (depicted above). As one of the first supercomputing vendors, established in the early 70s, Cray’s participation in the TOP500 list reaches all the way to the inaugural publication in June 1993, when the company enjoyed a short-lived but remarkable 41 percent list share with 205 systems. After that ground-breaking debut, Cray saw its list share drop steadily to a low point of 10 systems in November 2004. Since then, though, the company has managed to reverse course, rising to 50 systems on the current list – that’s an increase of 40 systems over 19 lists – in the same timeframe that Ungaro has been CEO.

For additional context, Fujitsu and TMC tied for second place with 54 systems each for a 10.8 percent share on the first official TOP500 list. Of course, the pool of HPC-systems vendors was a lot smaller then and has since proliferated. The June 1993 list includes 14 vendors in total and the most recent has 32, including the “self-made” category, which refers to clusters made in the Amazon Web Services cloud. Also remember that those really were specialized machines back then, a different architecture set than what exists today.
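For readers who want to check the list-share arithmetic, here is a minimal Python sketch. It assumes only that each TOP500 edition ranks 500 systems; the system counts are the ones quoted above, and the helper name `list_share` is purely illustrative.

```python
LIST_SIZE = 500  # each TOP500 edition ranks 500 systems


def list_share(systems: int, list_size: int = LIST_SIZE) -> float:
    """Return a vendor's share of the list as a percentage."""
    return 100.0 * systems / list_size


# Cray system counts as quoted in the article.
cray_counts = {
    "June 1993": 205,
    "November 2004": 10,
    "current list": 50,
}

for edition, systems in cray_counts.items():
    print(f"Cray, {edition}: {systems} systems, {list_share(systems):.1f}% of the list")

# Fujitsu and TMC each placed 54 systems on the first list.
print(f"Fujitsu/TMC, June 1993: {list_share(54):.1f}% each")

# Growth from the November 2004 low point to the current list.
gain = cray_counts["current list"] - cray_counts["November 2004"]
print(f"Cray gain since November 2004: {gain} systems")
```

Dividing by a fixed 500 is what makes system count and list share interchangeable here; performance share, by contrast, would need each system's LINPACK result, which this sketch does not attempt.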

These data points at the top of the HPC market along with the success of its XC line are signs that Cray is working hard to bring the company back to a profitable state. Recall that Cray is also peddling its big data-oriented Urika appliances, but these customers tend to be the close-to-the-vest sort who like to remain anonymous.

Despite having well-received products and strong customer retention, the supercomputing company has in recent years struggled to meet Wall Street expectations – but that’s often the way it goes in this space. It’s clear that Cray is working hard to reverse this tide, and with several multi-million-dollar system deals under its belt this year, the company can bank on steady revenues for a couple of years. Shares of Cray were up more than 2 percent today, to $25.78 at publication, giving the company a valuation just north of one billion dollars.

Further Cray ISC14 news

Cray also revealed today that it joined the OpenStack Foundation as a Corporate Sponsor. Cray says it will contribute to OpenStack and work to integrate open source capabilities into future Cray products and services to “benefit the supercomputing industry.”

The OpenStack news continues the open source theme from the previous day, when Cray announced that it would be extending its Tiered Adaptive Storage (TAS) to the Lustre user community.

“The Cray TAS connector for Lustre provides customers with a simplified way to both protect and move data across storage tiers and locations, from high-performance storage to deep-tape archives,” explained Cray.

“A key strategy for Cray is building on open systems,” remarked company vice president of storage and data management Barry Bolding. “While other tiered storage systems are proprietary, we continue to invest in Linux and open format data movement technologies. The Cray TAS connector for Lustre will work on any Linux or Lustre environment, regardless of vendor.”