As we move into the pre-exascale era, power consumption, network bandwidth, I/O and related concerns will continue to push toward ever-greater integration. This was a key theme at the International Supercomputing Conference in Germany this week, where Intel’s Raj Hazra provided an early look at how the company will integrate the various pieces of its future Knights Landing processor, which it hopes to bring to market by 2015.

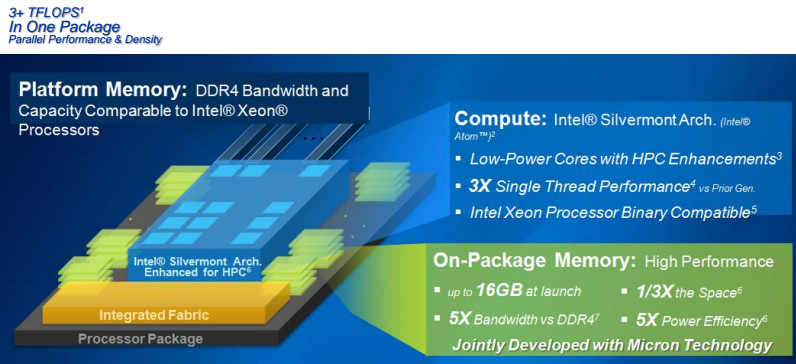

There’s been quite a bit of mystery about what the Knights Landing chips will offer in terms of on-package memory, although there has been ample speculation about a potential tie-in with Micron and its Hybrid Memory Cube (HMC). Intel today finally shed some light on this, noting that there will be 16 GB of on-package memory with 5x the bandwidth of DDR4, taking up one third of the space of the memory on the current Xeon Phi and offering 5x the efficiency. Micron followed immediately with confirmation that its HMC technology will indeed be central to the Knights Landing memory capability.

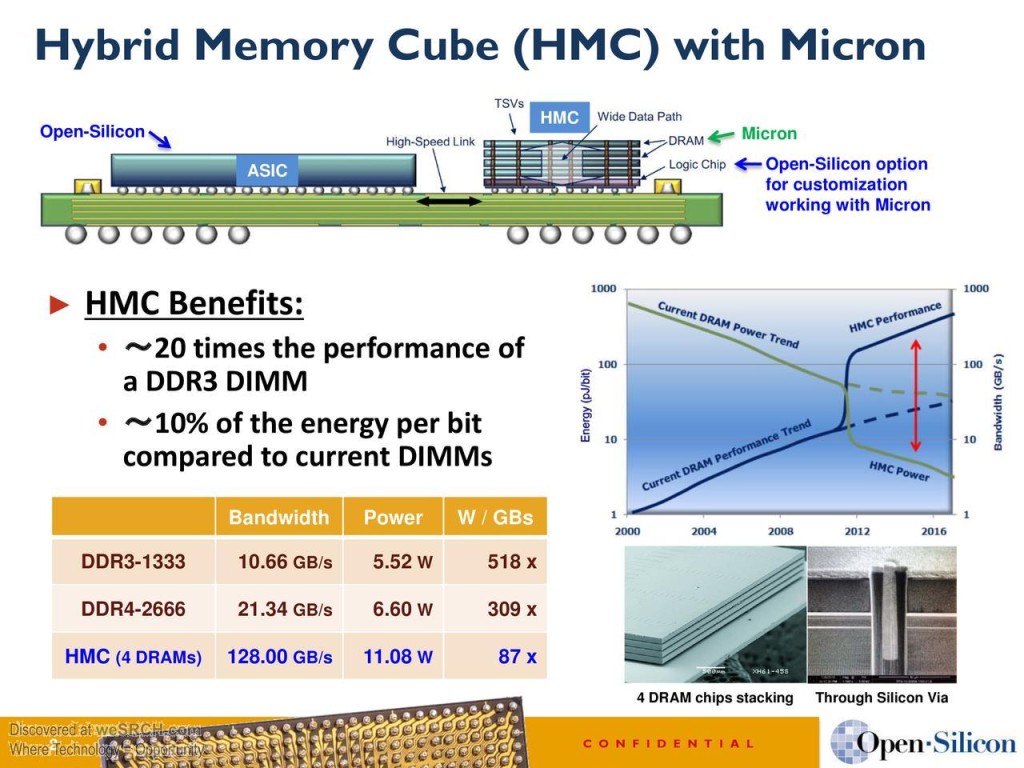

We’ve been hearing quite a bit about the HMC product from Micron, which was announced at the Intel Developer Forum back in 2011. For a refresher, take a look at one of the more recent slides that highlights the overall benefits and details.

The slide below from Micron lays out a few details about the expected Knights Landing chips. According to Micron, the two teams have been collaborating for a number of years, and the pairing of their capabilities to “innovate, design, assemble and manufacture a high performance stacked memory solution that incorporates both logic and DRAM is an exceptional combination.” Micron notes that Intel’s memory interface marries processor to memory system to deliver optimal performance and efficiency: 5x the bandwidth of DDR4, with the space reduction noted above and half the energy per bit.

As we’ve noted before, centers are already gearing up for Knights Landing machines to arrive, including the “Cori” supercomputer at NERSC. Intel says that beyond what it has already shared with these centers under NDA, the HPC community has been pushing the company to release as many details as possible so that future application developers and end users can better prepare for the upcoming architecture. Because understanding the fabric, memory, and programming requirements is essential, Intel unveiled a few new details this week.

Back when we spoke with Katie Antypas at NERSC about the 2016 Cori system, she emphasized the importance of the on-package memory expected in the Knights Landing-based Cray supercomputer. The real appeal, she explained, has a lot less to do with compute horsepower than with real application requirements. “This is critical for our workloads because we’ve found that most of our applications aren’t limited by compute; they’re held back because of memory bandwidth.” She added, “While performance and efficiency are key, for users, having this self-hosted architecture means there is no need to worry about moving data on and off a coprocessor.”

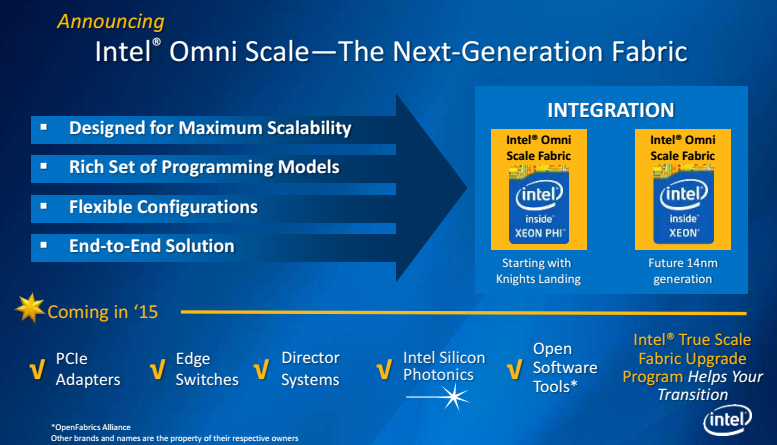

In addition to the news that filtered out from Intel and Micron separately, we were also given some new details on the fabric for the next-generation Knights Landing product. Intel has revealed the fabric’s new name, OmniScale, which, though still shrouded in a great deal of mystery on the technical front, will be rather different from the current TrueScale product. The new OmniScale fabric will not be InfiniBand, says Hazra, but it will leverage all the prior history and the software stack that has been proven with TrueScale. When it formally launches in 2015, it will be offered as a full suite with a companion upgrade package (the guts have been changed with minimal impact on users, or at least that’s what we’re being told). Interestingly, we do know that OmniScale is not based on the Aries assets acquired from Cray, but it does leverage that team’s work along with the TrueScale developments. While it’s not InfiniBand, Hazra says it will be compatible with existing solutions.

Hazra and Wuischpard have hinted at some very big system deals for the next-generation Xeon Phi to be announced over the next several months. Intel is stepping up its investments accordingly, with fresh pushes on both Knights Landing and the required software environment work through its Parallel Computing Centers, which, as we reported earlier this summer, will be extended to new locations.

Intel sees HPC as a rapidly growing part of the overall datacenter market and expects 20% growth in the segment. While that might sound high, admitted Intel’s Charles Wuischpard, the figure reflects not just general market expansion but also the momentum around a range of compute-intensive “big data” problems spanning research, academia and a variety of enterprise environments. He and his team in the technical computing group anticipate that many of the developments, including those announced this week at the International Supercomputing Conference, will eventually find their way into the general enterprise datacenter segment. As he noted, “if you look at the future Knights Landing processor, we’ll be able to offer 3 teraflops of aggregate performance in a single socket. It was only 15 years ago that such capability was found in the very top supercomputer in the world.”