In many ways, Bull has been a mirror, reflecting companies like Cray from across the Atlantic. On the one hand, both companies have deep roots in supercomputing, with particularly strong bases on their home continents. On the other hand, the arrival of the big data phenomenon has given both a chance to wrench free from strict supercomputing affiliations and find a new path to deliver high-end, high performance systems to an entirely new and rapidly growing class of potential enterprise users: customers who might not consider what they do to be exactly “HPC” but who have serious computational and data-driven needs that vanilla servers or database approaches can’t tackle.

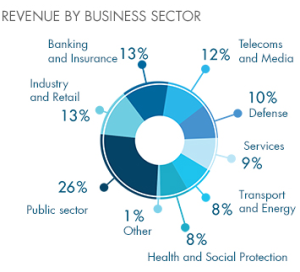

While Cray, SGI, and a few other HPC-oriented companies have focused on rolling out appliances directed at the larger big data market (consider the Urika appliance from Cray as an example), Bull has seen the same opportunities in Europe, where it does 85% of its overall business. It has taken similar steps to bake its reputation for large-scale scientific and industrial supercomputing into new offerings aimed at more mainstream enterprise big data problems. Its “One Bull” vision, which was spelled out at the beginning of this year, makes the new business around big data and cloud computing a top priority, with 6% of all revenues being pushed into research and development, where the company fits HPC and security together.

Representative of this newly weighted approach to the extreme scale market (which can include both supercomputing and large-scale enterprise) is today’s announcement from Bull of its new line of servers. Called the Bullion S, these are designed more for the big data and cloud spaces. The new servers are designed to support up to 240 Xeon E7 cores with up to 24 TB of memory, making them a fit for in-memory, data-intensive workloads, but also for those that require significant compute. Earlier in the year, the company echoed what other HPC-rooted companies are doing to extend their customer base into the mainstream enterprise market by introducing a big data appliance in conjunction with real-time search company Sinequa.

Although Bull is making moves to shake free from the narrower supercomputing distinction, the company is not leaving its HPC segment in the enterprise big data dust. The company had a strong showing at ISC last week in Leipzig, Germany, in terms of news around fresh deployments and new technologies that will be integrated into future supercomputers. Still, the company has a total of only 17 systems on the Top500, with the top site at CEA (#26) and all but one of its systems in Europe (the exception being the Helios machine at the International Fusion Energy Research Center). This is an improvement for Bull, which had 10 systems in total on the Top500 in 2011 and a mere 5 machines in 2009.

Among the new additions that should drive Bull’s Top500 growth, the French national high performance computing organization, GENCI, is set to acquire a 2,106-node warm water-cooled system from Bull to boost a wide range of research projects. The new supercomputer, which is expected to come online in January 2015, will be called OCCIGEN and will be housed at CINES in Montpellier. It will be equipped with what Bull calls “next-generation Xeon processors,” but there is no confirmation about which processors the company is referring to. Since no one expects the real next-generation Xeon for HPC (Knights Landing) to emerge in time for a system slated for early 2015, the logical assumption is that this means Xeon E7 v2 and/or Xeon Phi. Bull says only that, at this point, the system has been designed to “accommodate hybrid technologies.”

The 2-petaflop OCCIGEN machine is expected to boast 200 TB of memory, with an I/O system able to move data at over 100 GB/s to meet the demands of large-scale scientific simulation and data-intensive research. The cold plate, warm water cooling system, which is designed to deliver component-level cooling, is expected to bring the system’s PUE to 1.1.
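To put those figures in perspective, here is a rough back-of-envelope sketch in Python. It uses only the numbers quoted above (2,106 nodes, 2 petaflops, 200 TB of memory, 100 GB/s of I/O, and a PUE of 1.1). The function names are made up for illustration, decimal units are assumed (1 TB = 1,000 GB), and "over 100 GB/s" is treated as exactly 100 GB/s, so the results are approximations rather than anything Bull or GENCI has published.

```python
# Back-of-envelope arithmetic on the OCCIGEN figures quoted in the article.
# Assumptions (not from Bull): decimal units, I/O rate taken as exactly
# 100 GB/s, and memory and peak flops spread evenly across all nodes.

NODES = 2106          # node count quoted for OCCIGEN
PEAK_PFLOPS = 2       # quoted peak performance
MEMORY_TB = 200       # quoted total memory
IO_GB_PER_S = 100     # "over 100 GB/s", used here as a lower bound
PUE = 1.1             # expected power usage effectiveness


def memory_per_node_gb(memory_tb, nodes):
    """Average memory per node in GB, assuming an even split."""
    return memory_tb * 1000 / nodes


def tflops_per_node(peak_pflops, nodes):
    """Average peak teraflops per node, assuming an even split."""
    return peak_pflops * 1000 / nodes


def seconds_to_move_memory(memory_tb, io_gb_per_s):
    """Time to stream the whole memory footprint through the I/O system."""
    return memory_tb * 1000 / io_gb_per_s


def overhead_per_it_watt(pue):
    """PUE is total facility power divided by IT power, so the extra
    (cooling, power distribution) per watt of IT load is PUE - 1."""
    return pue - 1.0


if __name__ == "__main__":
    print(f"Memory per node:  {memory_per_node_gb(MEMORY_TB, NODES):.1f} GB")
    print(f"Peak per node:    {tflops_per_node(PEAK_PFLOPS, NODES):.2f} TF")
    minutes = seconds_to_move_memory(MEMORY_TB, IO_GB_PER_S) / 60
    print(f"Full-memory dump: {minutes:.1f} minutes")
    print(f"Overhead per IT watt: {overhead_per_it_watt(PUE):.2f} W")
```

Under those assumptions, each node averages roughly 95 GB of memory and just under 1 teraflop of peak performance, writing out the entire memory footprint would take a little over half an hour, and every watt delivered to the IT equipment would cost about a tenth of a watt in cooling and other facility overhead.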

The National Center for Genome Analysis in Barcelona, which is one of the top genetic research sites in Europe, has also tapped Bull for a new system that will target large-scale gene sequencing projects. While details about the node count or type of system that was purchased are not public, the center’s director says that their problems demand more than the “traditional computing” they’ve had to rely on in the past. “The solution is not to increase the number of sequencers. The key lies in the balance between sequencing and HPC. It’s not only about increasing sequencing capacity by acquiring new hardware, but designing an appropriate computing infrastructure…it is essential to choose a flexible architecture that can grow without limits to keep pace with genome projects,” he remarked.

In addition to the user stories we learned about during International Supercomputing Conference Week, Bull also announced a strategic partnership with another recently acquired company. Bull can now resell ClusterStor offerings from Xyratex (now part of Seagate) as part of its future systems. The deal was announced alongside news of a technical partnership between the two for a contract at Deutsches Klimarechenzentrum (DKRZ), where Bull designed and installed a 3-petaflop Bullx B720 super outfitted with 45 petabytes of ClusterStor CS9000 parallel storage capable of 1 TB/s of performance, which will help the climate simulation facility better handle its growing volumes of data.

With the One Bull plan in full effect and a recent acquisition by a company that is solely focused on enterprise big data and cloud computing, it will be interesting to see whether the company’s investments in supercomputing persist. At ISC there were no signs of reduced investment, and the news of new wins shows that there is still value in pursuing large-scale HPC sites.