There has been little doubt that the convergence of traditional high performance computing with advanced analytics has been steadily underway, fed in part by a rush of new tools, frameworks and platforms targeting the big data deluge.

And when one thinks about the old guard of supercomputing, Cray is one of the first vendors to come to mind, although they’ve been steadily ramping up efforts to mesh into the broader world of enterprise systems with their own slant on the big data phenomenon. First came the company’s Urika graph analytics appliance just a little over two years ago, which has powered everything from large-scale life sciences applications to the big leagues. As of this morning, Cray will be adding a second machine to their lineup of big data-geared systems with the Urika-XA platform—a Cloudera-based Hadoop appliance, which happens to sport some of the best the HPC world has to offer in terms of hardware.

Hadoop appliances are nothing new, with a stream of vendors from well outside the entrenched HPC vendor list (and plenty within it) offering their own hardware wrapped around the framework. What Cray has done differently is put the emphasis on actual performance, with some additional benefits in terms of integration and manageability. While a 1500-plus-core Haswell variant will be made available in the first part of 2015, the 48-node Urika-XA appliance available now sports Ivy Bridge processors in its first-run stage, as well as 36 TB of memory, 38 TB of SSD (200 TB of storage in total with HDD and Lustre via their Sonexion 900), and Infiniband. The Lustre file system complements access to the native HDFS that still drives many MapReduce and Hadoop applications.

Before we touch on the specific hardware choices, one of the more fascinating features of the appliance is its reliance on the large-scale data processing engine Apache Spark, which, while still only a tentative topic for HPC, offers a great deal of promise in terms of emerging use cases for Hadoop. The early production workloads around Spark are best represented by interactive querying of data, iterative data mining, and the handling of streaming data. There has been a great deal of attention around Spark, fed in part by a push from Hadoop distribution giant Cloudera, and it has gained significant momentum and development over the last 12-18 months, according to Cray’s VP of Analytics Products, Ramesh Menon.

It was difficult not to start with the toughest question for Ramesh first: “Why throw so much high performance hardware (and software) at Hadoop, which itself was never designed for anything but large-scale batch jobs?” The fact is, he says, Hadoop and the use cases around it have evolved, especially as more enterprise and HPC users move workloads from experimentation to production and look for more integration than most appliances allow and fewer failures than commodity approaches deliver. Cray has also tailored the system so that existing MapReduce jobs are supported and sped up, with the SSDs and the Cray Adaptive Runtime software handling the intermediate steps of that code. Together, he says, these aspects boost the old and make performance room for the new.

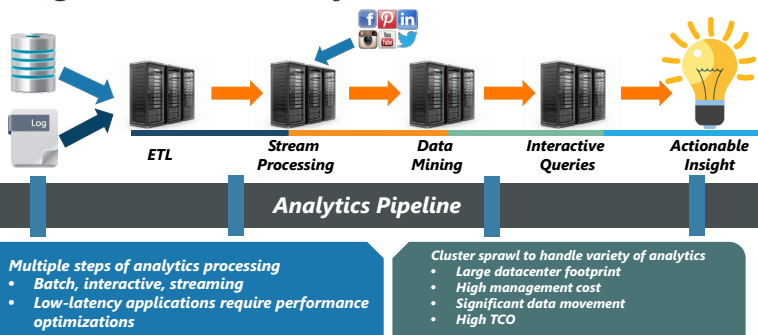

Aside from the performance angle, the real benefit for production Hadoop users is that it replaces the long chain of systems in the data analysis pipeline. While this is the goal of any appliance, Menon says that existing users of advanced analytics in enterprise HPC told Cray they were doing a great deal of work across multiple clusters, which carried its own costs. When they did take the appliance route, they found themselves locked in and unable to innovate using new ecosystem tools. To highlight this TCO chain, Menon provided the following:

There has been some exciting research on Infiniband for Hadoop (this in particular), but just as Lustre tends to lag behind native HDFS for Hadoop deployments, Cray is taking a chance on the fact that customers who are seeing real-world value out of the ecosystem around Hadoop are also experiencing the performance lag that makes it unsuitable for anything but what it was designed for. We understand that Lustre is growing in prominence in the enterprise, but where does it fit when most jobs are built to run on HDFS or other ecosystem-spurred alternatives (via Cassandra/DataStax, Ceph, Tachyon, etc.)? According to Menon, HDFS and Lustre are both supported so that the old and new can work together. For existing MapReduce code, HDFS is present and sped up by the SSD layer, while Lustre serves as the ideal file system for the new breed of streaming in-memory applications. This is backed by the Cray Adaptive Runtime for Hadoop, which integrates both generations of applications.

Unlike the questions some might have around the addition of Lustre and Infiniband, there has been a great deal of work around the use of SSDs for Hadoop and MapReduce workloads. For users of the appliance who wish to continue using existing MapReduce code, this is especially beneficial because it can significantly speed the intermediate steps around the shuffle stages, where a lot of the congestion happens.
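For readers less familiar with where the shuffle sits, here is a toy, single-process sketch of the three MapReduce phases in plain Python. It is not Hadoop's API and says nothing about how Cray's software works; the function names and the simple partitioner are made up for illustration. The point is only that every pair emitted by the mappers passes through the shuffle as intermediate data, which a real Hadoop job writes to local disk on the map side before reducers fetch it. That intermediate traffic is what faster local storage would be expected to help.

```python
from collections import defaultdict


def map_phase(lines):
    """Emit (word, 1) pairs, as a word-count mapper would."""
    for line in lines:
        for word in line.lower().split():
            yield word, 1


def shuffle_phase(pairs, num_reducers):
    """Partition and group the intermediate pairs by key.

    In a real Hadoop job this intermediate data is spilled to local disk
    and pulled across the network by the reducers; here it stays in memory.
    """
    partitions = [defaultdict(list) for _ in range(num_reducers)]
    for key, value in pairs:
        # Hypothetical toy partitioner: deterministic, so a given key
        # always lands on the same reducer.
        index = sum(ord(ch) for ch in key) % num_reducers
        partitions[index][key].append(value)
    return partitions


def reduce_phase(partitions):
    """Sum the grouped values for every key in every partition."""
    counts = {}
    for partition in partitions:
        for key, values in partition.items():
            counts[key] = sum(values)
    return counts


def word_count(lines, num_reducers=2):
    """Run map, shuffle, and reduce in sequence on a list of text lines."""
    return reduce_phase(shuffle_phase(map_phase(lines), num_reducers))


if __name__ == "__main__":
    print(word_count(["big data big iron", "big data"]))
```

Even in this small example, six intermediate pairs are produced for three distinct words; at production scale the same ratio is why the shuffle tends to be the congested step.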

As Menon stressed, “Infiniband is only in the platform because part of the challenge is to make sure we have all the benefits of an appliance where we can optimize to a known stack. The different direction from our current Hadoop appliance users is that they’re locked in on the software side. If there’s a new project in the Hadoop ecosystem it’s hard if not impossible to run it on the appliance. With the appliance approach, we provide pre-integrated package so they know the foundational elements in that high performance work with Lustre, the SSDs, and so forth and can let them scale all of that as they need.”

Cray will be releasing benchmarks in the future that demonstrate the value of high performance technologies like Infiniband and Lustre for Hadoop environments, which we will be looking forward to. Menon says the typical microbenchmarks that serve the Hadoop community well aren’t representative of what they want to demonstrate, but we’ll certainly stay tuned for those. As with the first Urika machines, any pricing details are shrouded in mystery. But with Infiniband, SSDs, Haswell, Lustre, and their own unique software integrating the whole package, it’s safe to assume this is not for the Hadoop hobbyist.

“We see use cases in a lot of the traditional HPC areas, of course,” said Menon, “but enterprise adoption of Hadoop is creating a new set of requirements that this targets specifically and very well.”