Many nations are racing to cross the exascale computing finish line by roughly 2020. Yet the challenges are such that establishing useful exascale computers some 50-100 times faster than today’s leadership machines requires the coordinated efforts of a vast array of stakeholders. At Supercomputing 2014 (SC14), an industry collaboration called InfiniCortex launched with the goal of providing a key part of the exascale foundation.

Led by Singapore’s Agency for Science, Technology and Research (A*Star) in partnership with Obsidian Strategics, Tata Communications and Rutgers University, InfiniCortex refers to a set of geographically distributed high performance computing and storage resources based on InfiniBand technology.

The project received further attention at the recent Big Data and Extreme-scale Computing (BDEC) event in Barcelona, a major conference for reporting ground-breaking research at the intersection of big compute and big data. In a position paper for the 3rd annual BDEC event, a team of researchers from A*Star’s Computational Resource Centre revealed further details about the implementation of InfiniCortex.

“The approach is not a grid or cloud based,” they write, “but utilises extremely efficient, lossless and encrypted InfiniBand transport technology over global distances allowing RDMA and straightforward implementation of both concurrent supercomputing over global distances and implementation of very efficient workflows – and here it serves as an ideal vehicle to serve both Big Data and Exascale computing requirements.”

They claim the platform can provide the level of concurrent supercomputing needed to support exascale computing. They add that a concurrent, distributed design will address the power and infrastructure challenges, as well as the data replication and disaster recovery issues, associated with a centralized approach.

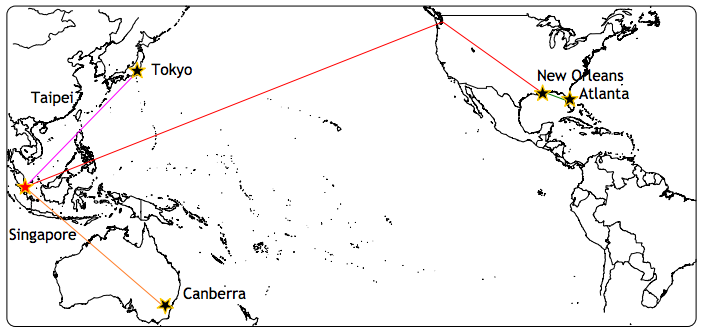

The distributed supercomputing concept took off at SC14 with the demonstration of 100 Gbit/s data transmission across the Pacific via subsea optical cables to the show floor. The record-breaking event heralded a ten-fold boost over previously recorded transmission speeds between Asia and North America, say its organizers. The distances were achieved using Obsidian Strategics’ range extenders, including routing and BGFC-based sub-netting.

The platform linked three continents (Asia, Australia and North America); four countries (Singapore, Australia, Japan and the US); seven universities and two large research organizations (A*Star in Singapore and Oak Ridge National Laboratory in Oak Ridge, Tenn.). The organizers employed InfiniBand sub-nets with different net topologies to create a single topologically optimized computational resource, a so-called Galaxy of Supercomputers.

As with any HPC resource, though, what concerns most researchers is the application layer. To assess the platform’s feasibility, collaborators such as Japan’s Tokyo Institute of Technology (TITECH), Australia’s National Computational Infrastructure (NCI), Oak Ridge National Laboratory (ORNL), Princeton, Stony Brook and Georgia Institute of Technology ran a mix of workflows and applications over InfiniCortex, including:

- RDMA-based HPC cloud workflows for intercontinental genetic sequencing (NCI)

- File migration with Lustre and dsync+ (TITECH / Georgia Tech)

- Near real-time plasma disruption detection using ADIOS (Princeton Plasma Research Lab / ORNL)

- Automated microscopy image analysis for cancer detection, also using ADIOS (Stony Brook University / ORNL)

Researchers who are accustomed to TCP/IP-based file transfer (FTP) will want to note the major increase in data throughput enabled by long-distance InfiniBand. According to the A*Star team, the time it took to send a 1.143 terabyte file of genomics data from Australia to Singapore via Seattle was reduced from 12 hours 33 minutes to 24 minutes, a speedup of roughly 31 times.
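For readers who want to check the arithmetic, here is a minimal sketch that turns the quoted file size and transfer times into a speedup factor and effective throughput. It assumes a decimal terabyte (10^12 bytes), which the source does not specify, and the variable and function names are illustrative only.

```python
# Figures quoted by the A*Star team
FILE_SIZE_TB = 1.143                  # terabytes transferred
TCP_SECONDS = (12 * 60 + 33) * 60     # 12 h 33 min over TCP/IP-based transfer
IB_SECONDS = 24 * 60                  # 24 min over long-distance InfiniBand

# Assumption: 1 TB = 10**12 bytes (decimal); the article does not say which unit is meant
total_bits = FILE_SIZE_TB * 1e12 * 8


def throughput_gbps(bits: float, seconds: float) -> float:
    """Average throughput in gigabits per second."""
    return bits / seconds / 1e9


speedup = TCP_SECONDS / IB_SECONDS
tcp_gbps = throughput_gbps(total_bits, TCP_SECONDS)
ib_gbps = throughput_gbps(total_bits, IB_SECONDS)

print(f"Speedup:              {speedup:.1f}x")
print(f"TCP/IP throughput:    {tcp_gbps:.2f} Gbit/s")
print(f"InfiniBand throughput: {ib_gbps:.2f} Gbit/s")
```

Under that assumption, the 24-minute transfer averages a little over 6 Gbit/s, against about 0.2 Gbit/s for the 12 hour 33 minute transfer, a ratio of about 31.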

As it continues to seek partners, the initiative is especially focused on adding new and relevant applications that illustrate the capabilities of InfiniBand and the InfiniCortex platform. Upcoming projects include GPGPU applications with Reims University in France, asynchronous linear solvers with University of Lille, and globally distributed weather and climate modeling, together with real-time visualization of the workflow progress, with ICM Warsaw, Poland.

The authors also reveal that the Singapore NSCC (National SuperComputing Centre), a key collaborator, just got clearance to begin acquisition of a supercomputer in the 1-3 petaflops range. Expected to be operational in the third quarter of 2015, the resource will be linked with Europe, Japan and the US to pursue HPC research relevant to this initiative.

Marek Michalewicz, senior director of the A*Star Computational Resource Centre and a co-author of the position paper, provides additional information in a presentation from the HPC Advisory Council workshop in Singapore.