Although the focus of this year’s GPU Technology Conference keynote wasn’t particularly HPC-centric, references to supercomputing abounded throughout the two-hour presentation delivered by charismatic NVIDIA CEO Jen-Hsun Huang to a crowd of 4,000 this morning inside the packed San Jose McEnery Convention Center.

In addition to the requisite-but-very-cool GPU-enabled visual demonstrations meant to showcase this year’s theme of deep learning, attendees also heard about the progress GPU computing has made in the last six years. And, as has become custom at the annual event, NVIDIA debuted a new GPU and revealed key pieces of its graphics computing roadmap out to 2018.

As for that next generation of NVIDIA GPU that just dropped, the honor goes to Titan X, a variant of the Titan chip that NVIDIA launched in 2013 in homage to its flagship supercomputer win of the same name at the Oak Ridge National Laboratory. Titan X, though, offers little in the way of double-precision floating point performance — just 0.2 teraflops — so obviously not a great fit for most HPC workloads. But with 7 teraflops of single-precision performance, Titan X *is* a boon to deep learning workloads (natch).

To FP64 performance-seekers, Huang pointed to Titan Z, which has 2.6 teraflops DP (and 8.0 teraflops SP). “The Titan X,” said Huang, “is designed for single-precision. For people who want double-precision, we still have Titan Z, the fastest single-card double-precision GPU we have. Titan X is the highest performing single-precision with the largest frame buffer [12BG] and most advanced GPU architecture we have created, all based on Maxwell.”

In an press forum after the keynote, Senior Vice President of GPU engineering at NVIDIA Jonah Alben addressed the dearth of double-precision floating point performance, noting:

“NVIDIA has one common GPU architecture, but we make different choices depending on which particular customers we are targeting given chip for. Titan X is based on a GPU that’s part of the family of the GM20x chips [second-generation Maxwell] therefore it has the same properties as those chips and it’s targeted for a deep learning type customer… and we have other products that are great for double-precision.”

The keynote was also an opportunity for Jen-Hsun Huang to highlight the growth of GPU computing since 2008 (CUDA debuted in 2007). In that fledgling year, there were 150,000 CUDA downloads, 27 CUDA apps, 4,000 relevant academic papers, 60 universities starting to teach CUDA-accelerated computing, and 6,000 Tesla GPUs shipped — the equivalent of 77 teraflops GPU-accelerated supercomputing power.

Moving ahead to the present day reflects roughly 10X jump in NVIDIA-backed GPU computing with 3 million CUDA downloads, 319 CUDA applications, 800 universities around the world teaching CUDA and GPU acceleration, 60,000 papers citing the use of GPUs for research, and 450,000 Tesla GPUs shipped providing a whopping 54 petabytes of accelerated computing to supercomputers and high-performance computing centers globally.

“What we enable is the world’s most popular, world’s most accessible supercomputing platform,” Huang effused. “Any researcher, any student, any engineer can reach out very easily and get a GPU that’s powered by CUDA to accelerate their research.”

“Most of the applications we serve are really about speed,” he later continued. “Without the speed, it is simply impossible for you to do your work. One of my favorite quotes was when a researcher came to me and said ‘Because of your work I’m now able to do my life’s work in my lifetime.’”

Looking Ahead

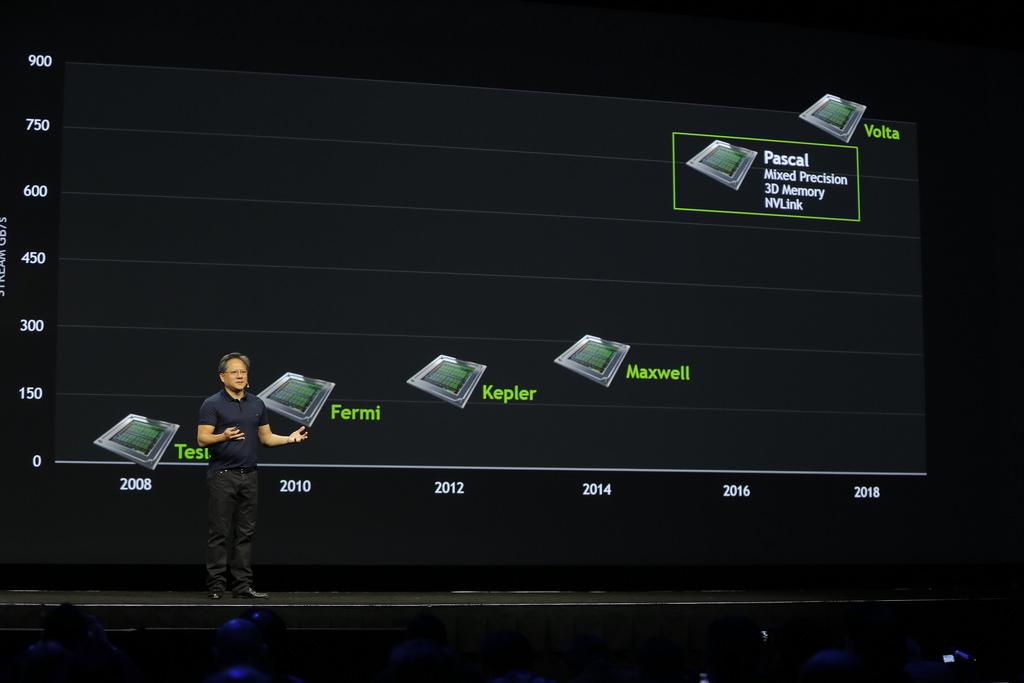

A refreshed graphics processor roadmap was also on the agenda for the morning talk, providing a glimpse at the upcoming Pascal GPUs and Volta parts. Not much has changed with regard to Pascal in the last twelve months, but Huang did confirm key elements, notably: mixed precision computing for greater accuracy, 3D memory with 3X the bandwidth and nearly 3X the frame buffer capacity of Maxwell, and of course NVLINK, which is on track to provide a 5-to-12 times speedup in data movement between GPUs and CPUs compared with today’s current standard, PCI-Express.

Volta returns to the lineup after NVIDIA switched up some key pieces of the roadmap last year putting Pascal in the place earlier reserved for Volta, leaving many wondering about the fate of that architecture. The 3D stacked memory and NVLINK technology originally planned for a Volta debut were moved over to Pascal, enabling them to still make the 2016 schedule. No details of Volta were disclosed today other than its projected 2018 launch.

GTC15 roadmap above — GTC14 roadmap below (note the return of Volta, which was pulled from last year’s roadmap)

Huang also shared some figures that show Pascal getting 10X better performance over Maxwell.

It was later clarified in the press Q&A that this significant speedup was specifically referencing applications that benefit from FP16 computation, like deep learning and imaging in general.