When the going gets tough, the tough join together to innovate. This is the message we are hearing from HPC stakeholders across the government and vendor landscape. It would be an oversimplification to say that big data is responsible for driving this deeper partner integration, but the technology trend has something to do with it. More precisely, the challenge is coming from the juxtaposition of the Moore’s law slowdown, the increased use of HPC for business needs and the shift to more data-intensive workloads.

In a move that was motivated by all of these factors, HP and longtime partner Intel are taking their strategic alliance to the next level. HP explains that the joint High Performance Computing (HPC) alliance was “created to advance customer innovation and help expand accessibility of HPC to enterprises of all sizes.”

The announcement can be distilled down to two parts, reflecting a development level and a go-to-market level:

- Customized industry-specific solutions for HP Apollo systems, integrated with Intel’s Scalable System Framework.

- Expanded Centers of Excellence to facilitate customer access to HPC.

The proliferation of HPC means that it is no longer relegated to academia, government and a handful of enterprise verticals (you know the ones). Consider for a moment the size of the traditional HPC space and contrast that with the addressable market space that is enterprise. As Intersect360 has tracked, enterprise HPC now makes up slightly more than one-quarter of the total HPC market, and it is growing more quickly than traditional HPC. HPC vendors are dialed in to this growth and potential, which is further bolstered by big data applications driving the need for HPC solutions.

So it makes sense that a major aim of the HP-Intel alliance is expanding into the enterprise. To support that positioning, HP is moving from a product and features perspective to a solutions perspective with an emphasis on financial services, life science, and oil and gas. The partners will work closely on customized industry-specific solutions for HP Apollo systems based on Intel’s Scalable System Framework.

HP saw in Intel a partner that would bring both expertise and market knowledge to support its effort to build solutions for customers.

“Intel has announced a lot of IP in HPC-specific products – e.g., Phi, Omni-Path, Intel Enterprise Edition Lustre, NVRAM capability, and SSDs – and we will be working to integrate these elements into our Apollo server line, which is optimized for high-performance computing and big data,” said Bill Mannel, vice president and general manager, HPC and Big Data, HP Servers, in an interview with HPCwire.

HP and Intel are enhancing capabilities at the HPC Center of Excellence in Grenoble, France, which was established two years ago to facilitate HP relationships in the European market. Further, HP is opening a second Center of Excellence in Houston, Texas, to better support the North American market. Both centers provide customers with access to best-of-breed Intel and HP technology, industry-optimized HPC solutions from HP and the opportunity to work with ISVs and HP/Intel engineers to modernize code and optimize infrastructure for HPC-related workloads.

“As data explodes in volume, velocity and variety, and the processing requirements to address business challenges become more sophisticated, the line between traditional and high performance computing is blurring,” said Mannel. “With this alliance, we are giving customers access to the technologies and solutions as well as the intellectual property, portfolio services and engineering support needed to evolve their compute infrastructure to capitalize on a data driven environment.”

Mannel connects the tighter partnership to two trends: the proliferation of computing technologies, and the difficulty the standard x86 architecture has in delivering generation-over-generation performance improvements for many applications. “This gets people looking at other technologies, accelerators, coprocessors, FPGAs, creating this body of interested people that want to engage these new technologies but to get to them is requiring in some cases a new way of developing code, code modernization aimed at extracting parallelism,” he commented.

“When x86 does not satisfy user needs, they are having to heavily engage in other ways of exploiting performance,” Mannel continued. “As HPC becomes a method for getting value from big data, there are a greater number of customers not familiar with HPC and related techniques from an administrative and programming standpoint. As customers embrace their big data they are finding challenge in how to get value out of it, and HPC is one of the ways they can do that.”

Behind the solution-oriented focus and the Centers of Excellence is the notion that along with performance, access is becoming increasingly important in this era of democratized HPC. To HP, access means allowing customers to consume HPC in the way and at the time that they wish. Mannel referenced HP’s Performance Optimized Datacenter (POD) as providing this capability. Under this arrangement, HP sets up PODs near the customer site and the customer essentially pays by the sip.

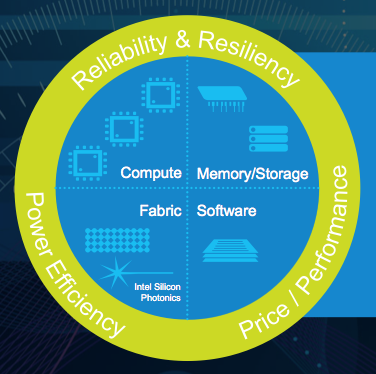

At a pre-ISC press conference held last Friday, Intel’s Charlie Wuischpard referred to the Scalable System Framework as “the organizing principle for Intel and its OEM partners.” With this underlying blueprint – the compute, the fabric, the memory/storage and the software – Intel has created a template for building solutions across a range of scales.

Wuischpard, vice president of Intel’s data center group and general manager, said the tighter collaboration began as a conversation between the respective company CEOs on the cusp of HP’s restructuring plans to split into two separate companies. As HPC has grown from its roots in academic and research circles, HP sees opportunities to expand its presence in both traditional and newer enterprise and big data-oriented markets.

“They want to put their dollars where we want to put our dollars,” said Wuischpard. “We see HP as a big scale partner. The size of their field organization and channel reach is greater than ours in this part of the industry.”

“There’s actually a multi-phase, three-generation at least mapped out through 2020,” Wuischpard continued, “starting from [alignment within] our current road maps with further intersections taking place as R&D drives greater levels of differentiation and benefit.”

This slide from HP and Intel depicts this layered approach:

Intel expects that the framework will enable not just HP, but all of its OEM partners, to provide differentiation within their respective markets and customers. Intel has a similar partnership in place with Cray, which in April announced that it is basing its future Shasta architecture on the Intel framework.